AI Sentiment Analysis Trading: What Grok Gets Right (And Wrong)

I added Grok sentiment analysis to my trading bot. Here's what AI sentiment analysis trading actually looks like — the signals that work and the noise that doesn't.

By Mike Hodgen

I built an AI trading bot from scratch late last year. Version 0.1 was pure technicals: moving averages, RSI, volume profiles, order flow data. It worked. Not spectacularly, but it was profitable on a small sample. The problem was obvious within weeks: the bot had no idea why something was moving. It could see that Bitcoin was breaking above resistance on volume, but it couldn't see that the breakout was being fueled by a rumor about a spot ETF approval spreading across X in real time.

AI sentiment analysis trading was the missing layer. Not as a primary signal (I'm not naive enough to think Twitter vibes replace quantitative analysis), but as a confirmation filter that could tell me whether the narrative matched the price action. Crypto markets move on narrative faster than fundamentals. A single tweet from the right account can move a $2 billion token 8% in minutes. That's not noise. That's data.

The hypothesis was simple: if I could score real-time sentiment from X (where crypto narratives break first, before they hit Bloomberg or CoinDesk), I could filter out false signals and catch momentum shifts earlier.

I want to be clear upfront. This was an experiment, not a guaranteed alpha generator. I went in expecting modest improvements and a lot of tuning. That's exactly what I got.

The tool choice was obvious. xAI's Grok has native access to X data. No other LLM has that. Claude can't see X. GPT can't see X. Gemini can't see X. If you want real-time social sentiment from the platform where crypto lives, Grok is the only model that doesn't require you to build and maintain a separate scraping pipeline.

How Grok Processes Real-Time Crypto Sentiment

The Data Pipeline

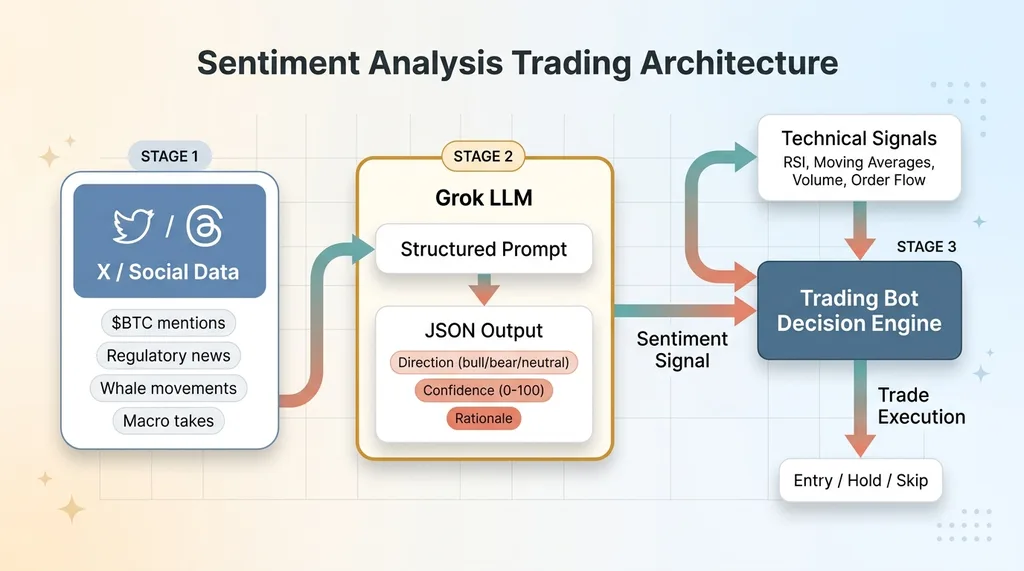

The architecture is straightforward, which is part of why it works. Grok queries X for specific crypto tickers and related narratives: not just "$BTC" but contextual threads about catalysts, regulatory news, whale movements, and macro takes. Raw X data goes into Grok with a structured prompt. Grok's job is to return a JSON object with three core fields: direction (bullish, bearish, or neutral, carried in the "sentiment" key), a confidence score (0-100), and a one-sentence rationale. The object also carries metadata: the ticker, a weighted source count, and a timestamp. That JSON plugs directly into the trading bot's decision engine alongside the technical signals.

Sentiment Analysis Trading Architecture

Here's what a sample output looks like:

    {
      "ticker": "BTC",
      "sentiment": "bullish",
      "confidence": 82,
      "rationale": "Multiple independent analysts noting accumulation patterns, ETF inflow data trending positive, engagement velocity high on institutional adoption thread",
      "sources_weighted": 14,
      "timestamp": "2024-12-15T14:32:00Z"
    }

The bot consumes that like any other data feed. No manual interpretation required.
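Before a payload like this reaches a decision engine, it is worth validating it, since an LLM can return malformed or out-of-range output. Here is a minimal sketch of that step. The `SentimentSignal` class and `parse_signal` function are hypothetical names for illustration, not the bot's actual code, and only the fields shown in the sample are assumed.

```python
import json
from dataclasses import dataclass

VALID_DIRECTIONS = {"bullish", "bearish", "neutral"}


@dataclass(frozen=True)
class SentimentSignal:
    """Hypothetical container for one validated sentiment payload."""
    ticker: str
    sentiment: str
    confidence: int
    rationale: str


def parse_signal(raw: str) -> SentimentSignal:
    """Parse one JSON payload and reject anything outside the expected shape."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"payload is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("payload must be a JSON object")

    ticker = data.get("ticker")
    if not isinstance(ticker, str) or not ticker:
        raise ValueError("missing ticker")

    sentiment = data.get("sentiment")
    if sentiment not in VALID_DIRECTIONS:
        raise ValueError(f"unexpected sentiment value: {sentiment!r}")

    confidence = data.get("confidence")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        raise ValueError("confidence must be a number")
    if not 0 <= confidence <= 100:
        raise ValueError("confidence must be between 0 and 100")

    return SentimentSignal(
        ticker=ticker,
        sentiment=sentiment,
        confidence=int(confidence),
        rationale=str(data.get("rationale", "")),
    )
```

Rejecting a bad payload outright, rather than guessing at a default, matches the principle later in this post that a bad signal is worse than no signal.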

Signal Quality Scoring

This is where the real work happened. Not all sentiment is equal, and treating every X post the same is a fast way to lose money.

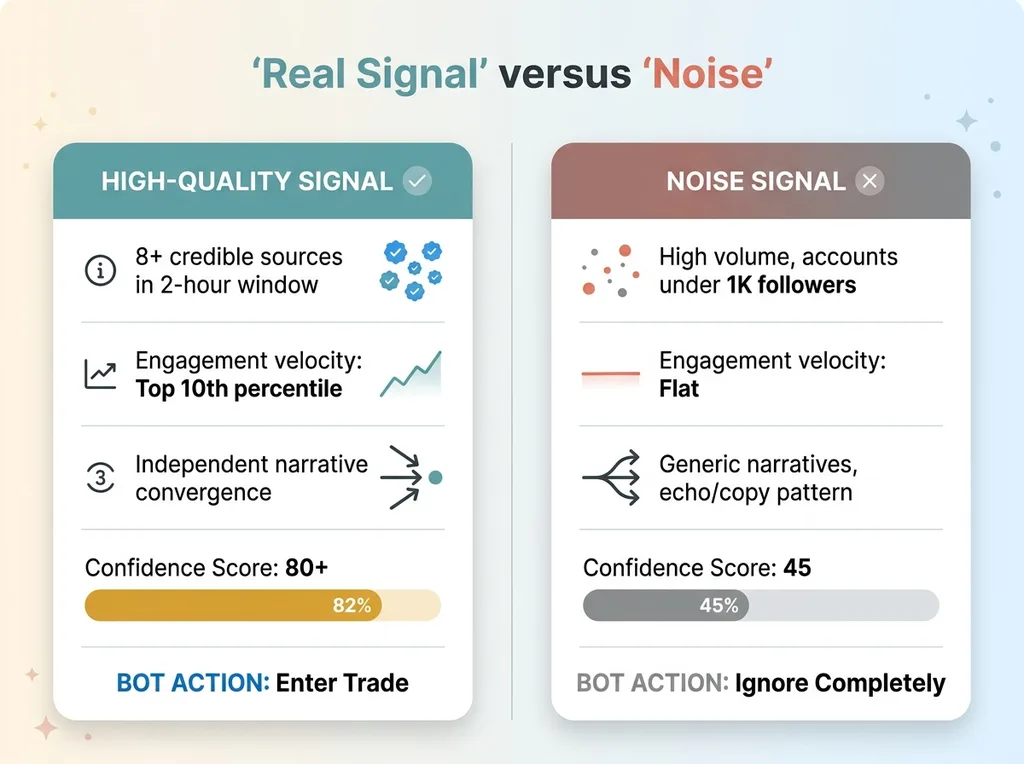

Signal Quality Scoring — Real Signal vs. Noise

A whale wallet thread from a known on-chain analyst with 50,000 engaged followers carries fundamentally different weight than 10,000 retail accounts posting rocket emojis. I built a scoring layer on top of Grok's raw output that weights three factors:

- Source credibility: Accounts with a track record of accurate calls, verified on-chain analysts, institutional researchers. Weighted 3-5x vs. anonymous retail accounts.

- Engagement velocity: How fast a take is spreading matters more than total engagement. A thread getting 200 retweets in 10 minutes is a stronger signal than one with 2,000 retweets over two days.

- Narrative consistency: Are multiple independent sources converging on the same thesis without obviously copying each other? Three unrelated analysts noting the same accumulation pattern is a signal. One viral thread getting echoed verbatim is not.
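To make the three factors concrete, here is a rough sketch of how they might combine. Everything in it is an assumption for illustration: the `Post` fields, the 4x credibility weight (a midpoint of the 3-5x range), and the use of exact-text matching to drop verbatim echoes. The real scoring layer is more involved than this.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Post:
    """Hypothetical record for one X post."""
    author: str
    text: str
    retweets: int
    minutes_live: float
    credible: bool  # assumed flag from a separate credibility list


CREDIBLE_WEIGHT = 4.0  # assumption: midpoint of the 3-5x range
RETAIL_WEIGHT = 1.0


def engagement_velocity(post: Post) -> float:
    """Retweets per minute; the floor of one minute avoids division by zero."""
    return post.retweets / max(post.minutes_live, 1.0)


def source_weight(post: Post) -> float:
    return CREDIBLE_WEIGHT if post.credible else RETAIL_WEIGHT


def independent_posts(posts: List[Post]) -> List[Post]:
    """Drop verbatim echoes: keep the first post for each normalized text."""
    seen = set()
    kept = []
    for post in posts:
        key = " ".join(post.text.lower().split())
        if key not in seen:
            seen.add(key)
            kept.append(post)
    return kept


def weighted_signal(posts: List[Post]) -> float:
    """Sum of credibility-weighted velocity over non-duplicated posts."""
    return sum(
        source_weight(p) * engagement_velocity(p)
        for p in independent_posts(posts)
    )
```

With these definitions, 200 retweets in 10 minutes is a velocity of 20 per minute, while 2,000 retweets over two days (2,880 minutes) is under 1 per minute, which is why the first thread counts for more.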

A high-quality bullish signal looks like this: 8+ credible sources within a 2-hour window, engagement velocity in the top 10% of observed values, independent narrative convergence, confidence score 80+. That's the kind of signal that meaningfully shifts the bot's entry bias.

A noise signal looks like this: high volume of positive mentions but all from accounts under 1,000 followers, engagement velocity flat, narratives are generic ("BTC to 100k!"), confidence score 45. The bot ignores this completely.
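The two profiles above can be written down as a simple check. This is a sketch under assumptions: the function names are hypothetical, "top 10%" is read as a percentile rank of 90 or higher against some history of observed velocities, and the thresholds are the ones quoted in the text.

```python
from typing import Sequence


def percentile_rank(value: float, history: Sequence[float]) -> float:
    """Percentage of historical observations at or below `value`."""
    if not history:
        raise ValueError("history must not be empty")
    at_or_below = sum(1 for h in history if h <= value)
    return 100.0 * at_or_below / len(history)


def is_high_quality(
    credible_sources: int,
    velocity_percentile: float,
    independent_convergence: bool,
    confidence: int,
) -> bool:
    """True only when every criterion for a strong signal is met."""
    return (
        credible_sources >= 8          # 8+ credible sources in the window
        and velocity_percentile >= 90  # top 10% engagement velocity
        and independent_convergence    # sources agree without copying
        and confidence >= 80           # model confidence 80+
    )
```

Requiring all four conditions at once is deliberate in this sketch: a high confidence score on its own, with no credible sources behind it, would still fail the check.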

Grok vs Claude for Trading Sentiment: An Honest Comparison

I tested both. People searching for "Grok vs Claude trading" want a straight answer, so here it is.

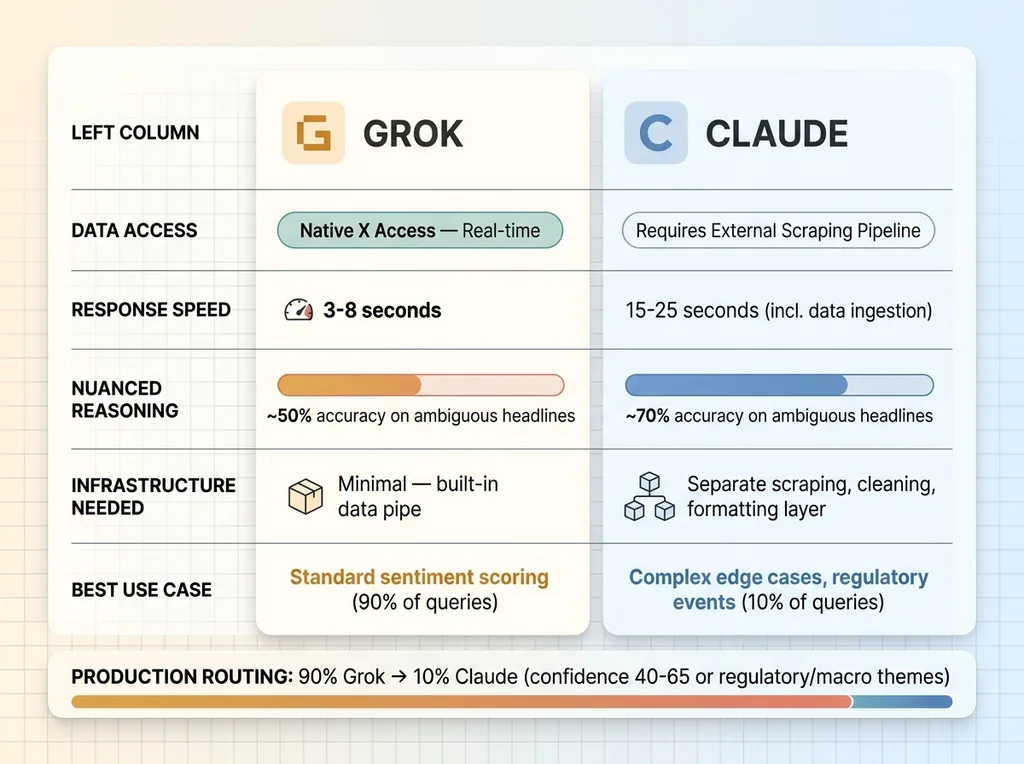

Grok vs Claude for Trading Sentiment

Grok's advantage is data access. It can see what's happening on X in near real-time without me building a separate scraping infrastructure. That alone saves weeks of engineering and ongoing maintenance. For crypto-specific sentiment, where the primary signal source is X, this is a massive structural advantage. Grok returns a usable sentiment score in roughly 3-8 seconds depending on query complexity.

Claude's advantage is reasoning depth. When I fed Claude the same raw X data manually, its analysis of complex narratives was noticeably better. Take a regulatory headline like "SEC delays ETF decision". Is that bearish (delay = bad) or actually neutral-to-bullish (delay ≠ rejection, was expected)? Claude parsed that kind of nuance correctly about 70% of the time, versus roughly 50% for Grok.

The tradeoff is real. Grok is faster and has the data pipe built in. Claude is more thoughtful but requires me to build and maintain the data ingestion layer externally — scraping X via API, cleaning the data, formatting it, then sending it to Claude. That's an extra 15-25 seconds of latency and a whole separate infrastructure to maintain.

My conclusion: use both. Grok handles ingestion and initial scoring for the standard workflow. For complex edge cases — major regulatory events, ambiguous macro news, black swan adjacent situations — the bot routes to Claude for a second opinion before executing. This is the same multi-model architecture philosophy I use across everything I build. Different models for different jobs. Forcing one model to do everything is like hiring one person to do sales, engineering, and accounting.

In production, 90% of sentiment queries go to Grok only. About 10% get routed to Claude for additional analysis. The routing logic is simple: if Grok's confidence is between 40 and 65 (the ambiguous zone) or the query involves regulatory or macro themes, escalate to Claude.
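That routing rule fits in a few lines. The sketch below assumes the 40-65 band is inclusive at both ends and that each query arrives with theme tags already attached; how those tags get assigned is not covered here, and the names are hypothetical.

```python
from typing import Iterable

AMBIGUOUS_LOW = 40   # assumption: band is inclusive at both ends
AMBIGUOUS_HIGH = 65
ESCALATION_THEMES = {"regulatory", "macro"}


def needs_second_opinion(confidence: int, themes: Iterable[str]) -> bool:
    """Escalate when confidence is ambiguous or the topic is regulatory/macro."""
    ambiguous = AMBIGUOUS_LOW <= confidence <= AMBIGUOUS_HIGH
    sensitive = bool(ESCALATION_THEMES & set(themes))
    return ambiguous or sensitive


def route(confidence: int, themes: Iterable[str]) -> str:
    """Return which model path a query takes."""
    return "grok_then_claude" if needs_second_opinion(confidence, themes) else "grok_only"
```

For example, `route(82, ["etf_flows"])` stays on the Grok-only path, while `route(82, ["regulatory"])` and `route(55, [])` both escalate.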

When Sentiment Analysis Actually Helps

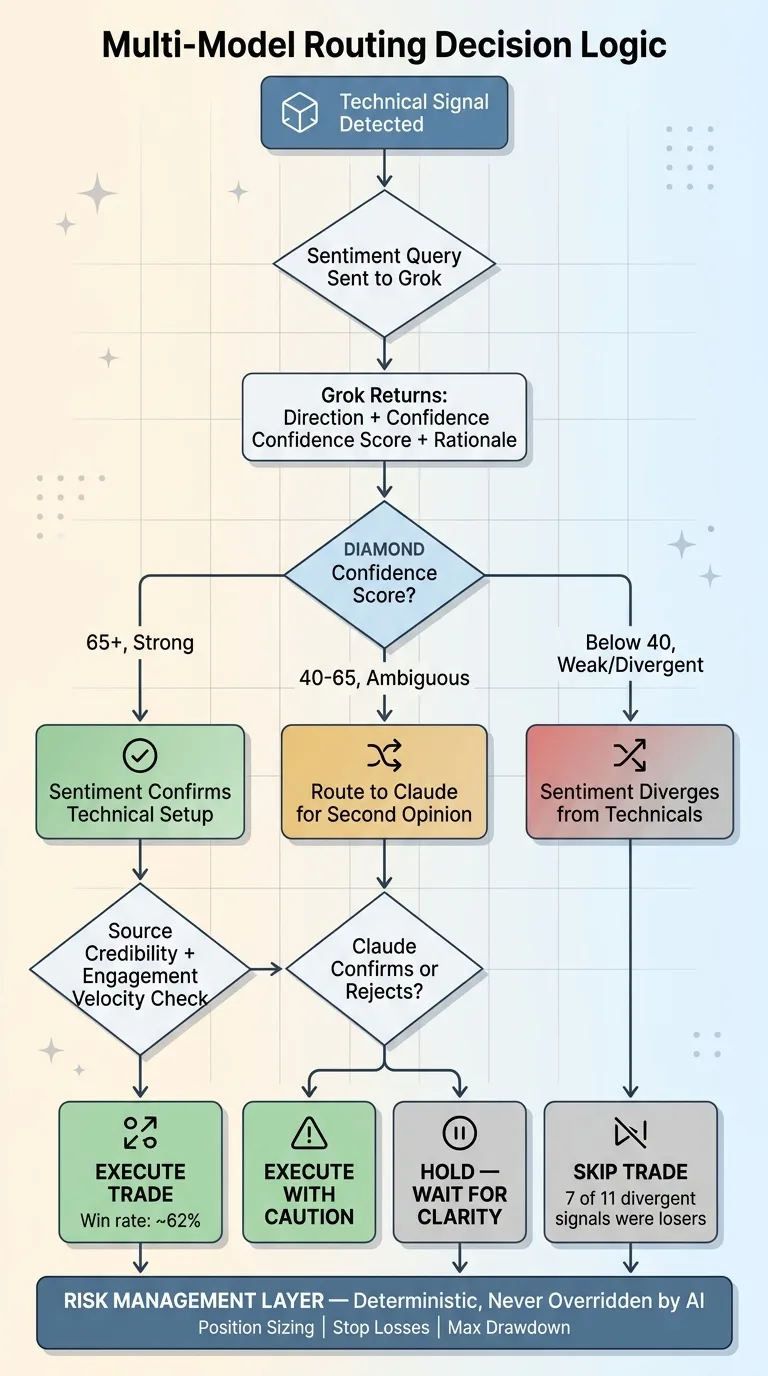

Multi-Model Routing Decision Logic

Catching Momentum Shifts Early

The clearest edge showed up on momentum trades. When a technical breakout was confirmed by surging positive sentiment — confidence score 85+ with strong source credibility — those trades performed measurably better.

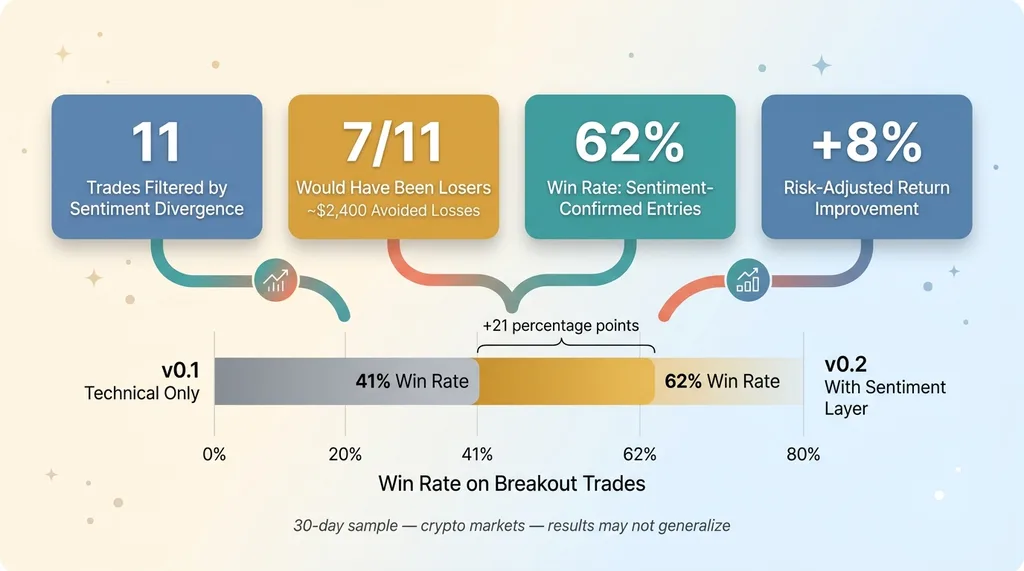

Out of the sample set, breakouts with strong sentiment confirmation hit their target roughly 62% of the time. Breakouts without sentiment confirmation (or with flat sentiment) hit target about 41% of the time. That's a meaningful difference. It's not magic. It's not a 90% win rate. But a 21-percentage-point improvement in hit rate on a specific trade type is real edge.

The reason makes intuitive sense. A technical breakout backed by genuine narrative momentum — new information spreading, credible analysts converging on a thesis — has fuel behind it. A breakout on thin air, where the chart says go but nobody in the market is talking about why, is more likely to fade.

Filtering False Breakouts

This is where sentiment saved the most money.

Price breaks resistance. Volume looks decent. The technicals say go. But sentiment is divergent — either actively negative or just confused and mixed. The bot held off.

Over 30 days, the bot flagged 11 potential entries where technicals looked good but sentiment diverged. I tracked what would have happened. Seven of those 11 would have been losers — either stopping out or fading back below the breakout level within hours. That's 7 avoided losses on trades the technical-only bot would have taken.

The key insight: sentiment works best as a confirmation layer, not a primary signal. It adds edge to an already-sound technical setup. It does not replace technical analysis. I have never once entered a trade on sentiment alone, and I don't intend to start.
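As a sketch of what "confirmation layer" means in code: sentiment can only modify a decision that the technicals have already proposed. The thresholds and labels below are assumptions for illustration. In particular, treating confidence under 40 as "mixed" and allowing unconfirmed entries at all are guesses at one reasonable setup, not a description of the production rules.

```python
def entry_decision(
    technical_breakout: bool,
    sentiment: str,
    confidence: int,
    strong_cutoff: int = 80,  # "strong signal" cutoff mentioned in the text
    mixed_floor: int = 40,    # assumption: below this, sentiment counts as mixed
) -> str:
    """Gate a long entry: technicals propose, sentiment confirms or vetoes."""
    if not technical_breakout:
        # Never enter on sentiment alone.
        return "no_trade"
    if sentiment == "bearish" or confidence < mixed_floor:
        # Technicals say go, but sentiment is negative or confused: hold off.
        return "hold"
    if sentiment == "bullish" and confidence >= strong_cutoff:
        return "enter_confirmed"
    return "enter_unconfirmed"
```

Note that the first branch makes sentiment strictly secondary: a 95-confidence bullish score with no technical setup still returns `no_trade`.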

When Sentiment Is Pure Noise

The Echo Chamber Problem

This is the part most people writing about crypto sentiment AI won't tell you. During hype cycles, sentiment analysis becomes almost useless — and potentially dangerous.

When the market is euphoric, sentiment is overwhelmingly positive. Everyone is bullish. Source credibility gets muddied because even smart analysts get caught up in momentum. The bot flagged bullish sentiment scores of 90+ during periods that turned out to be local tops. The sentiment was real — people genuinely believed — but it was wrong.

AI can't easily distinguish genuine conviction based on new information from coordinated shilling or reflexive hype. When 95% of X is bullish, a sentiment score of 95 tells you almost nothing. Extreme consensus is a contrarian signal, but teaching an LLM to interpret it that way reliably is a problem I haven't fully solved.

Sentiment Lag on Major Events

By the time sentiment shifts during a major crash or exploit, price has already moved. This isn't a Grok problem — it's structural. If a major exchange gets hacked, price drops 15-20% in seconds. The sentiment shift follows price by minutes to hours. By the time Grok can score the narrative shift, you're already underwater.

Sentiment analysis is forward-looking for slow-building narratives and backward-looking for sudden events. That's an important distinction. It helps you catch a gradually building thesis before it hits mainstream. It does not protect you from a flash crash.

Third limitation: low-cap coins. Anything outside the top 50 by trading volume simply doesn't have enough quality discussion on X to generate a reliable sentiment score. The bot returns "insufficient data" and falls back to technicals only. Which is the right behavior — a bad signal is worse than no signal.

This connects to a broader principle: risk management stays deterministic. Sentiment informs entries. It never touches position sizing, stop losses, or maximum drawdown limits. Those are rules, not suggestions, and no AI confidence score overrides them.
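One way to enforce that separation is structural: keep sentiment out of the function signatures that handle risk. The sketch below is illustrative only. The 1% risk-per-trade figure, the `status` field used to signal insufficient data, and the function names are all assumptions.

```python
from typing import Optional

RISK_PER_TRADE = 0.01  # hypothetical fixed rule: risk 1% of equity per trade


def position_size(equity: float, entry: float, stop: float) -> float:
    """Deterministic sizing from equity and stop distance only.

    There is deliberately no sentiment parameter here, so no confidence
    score can change the size of a position.
    """
    risk_per_unit = abs(entry - stop)
    if risk_per_unit == 0:
        raise ValueError("entry and stop must differ")
    return equity * RISK_PER_TRADE / risk_per_unit


def signal_mode(score: Optional[dict]) -> str:
    """Fall back to technicals when there is no usable sentiment score."""
    if score is None or score.get("status") == "insufficient data":
        return "technicals_only"
    return "technicals_plus_sentiment"
```

With $10,000 of equity, an entry at 100, and a stop at 95, the sketch sizes the position at 20 units regardless of what the sentiment feed says.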

The Numbers After 30 Days

I want to frame this honestly. Thirty days is a small sample. These are crypto markets, which are uniquely sentiment-driven. Results may not generalize to other asset classes.

30-Day Performance Results

Here's what I measured against the technical-only v0.1 baseline:

- 11 trades filtered due to sentiment divergence (technicals said go, sentiment said wait)

- 7 of those 11 would have been losers based on tracking what happened afterward — roughly $2,400 in estimated avoided losses on my position sizes

- Win rate on sentiment-confirmed entries: 62% vs. 41% on unconfirmed entries

- Overall portfolio performance: modest improvement, roughly 8% better risk-adjusted return vs. the baseline period
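To put the small-sample caveat in numbers, a confidence interval on the 7-of-11 figure is a useful sanity check. The sketch below uses the Wilson score interval, which is a standard choice for small samples; applying it here is my illustration, not part of the original measurement.

```python
import math
from typing import Tuple


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 is about 95%)."""
    if trials <= 0 or not 0 <= successes <= trials:
        raise ValueError("need trials > 0 and 0 <= successes <= trials")
    p = successes / trials
    z2 = z * z
    denom = 1 + z2 / trials
    center = (p + z2 / (2 * trials)) / denom
    half_width = z * math.sqrt(p * (1 - p) / trials + z2 / (4 * trials * trials)) / denom
    return center - half_width, center + half_width


low, high = wilson_interval(7, 11)
print(f"7 of 11 filtered trades were losers: 95% interval {low:.0%} to {high:.0%}")
```

For 7 of 11 the interval runs from roughly 35% to 85%, so the filter's true accuracy could plausibly sit anywhere from worse than a coin flip to very good. More trades are needed before leaning hard on it.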

These aren't retirement numbers. But a small edge applied consistently compounds. An 8% improvement in risk-adjusted returns over a month, if it holds, is meaningful over a year.

The system is v0.2. I'm actively tuning confidence thresholds (currently experimenting with raising the "strong signal" cutoff from 80 to 85), adjusting source credibility weights as I get more data on which accounts actually predict price accurately, and working on reducing false positives during high-euphoria periods. This is iterative work. It doesn't ship once and run forever.

The Same Architecture Works Beyond Trading

Here's why I'm writing about this on a blog aimed at business leaders, not crypto traders.

The architecture — real-time sentiment scoring from social data, quality-weighted signals, multi-model routing for different complexity levels — applies far beyond trading. I've built similar scoring systems for competitive intelligence and content strategy in my own DTC fashion brand.

A CEO running a consumer brand faces the same problem: there's real signal in social media about your products, your competitors, and your market. But it's buried in noise. You need a system to extract, score, and route it to decisions. Whether that's catching a negative trend about your product before it hits review sites, spotting a competitor's positioning shift in real time, or measuring reception of a product launch within hours instead of weeks.

The tools are the same. The models are the same. The engineering principles are the same. The domain just changes.

Thinking About Turning Unstructured Data Into Decisions?

If any of this resonated — whether it's the trading application or the broader idea of building AI systems like this for your business — I'd be happy to talk through it. I do free 30-minute discovery calls where we look at your operations, figure out where you have unstructured data that should be driving decisions, and map out what a system would actually look like.

No slides. No pitch deck. Just a conversation about what's possible and what's practical.
