The AI Health Check-In App That Replaced Paper Journals

I built an AI health check-in app for a family member after paper journals failed. Here's how conversational agents solve the compliance problem.

By Mike Hodgen

Someone I love has multiple chronic health conditions. Their doctor — a good one — handed them a paper journal and said: track your symptoms, meals, mood, and when you take your medications. Standard advice. The kind of thing that sounds reasonable in a 15-minute appointment. Compliance was solid for about 10 days. Then entries got shorter. Then sporadic. By week three, the journal lived under a stack of mail. This is the moment I decided to build an AI health check-in app that actually works — not because I wanted a project, but because paper was failing someone I care about.

Paper Health Journals Fail. The Data Proves It.

The Two-Week Cliff

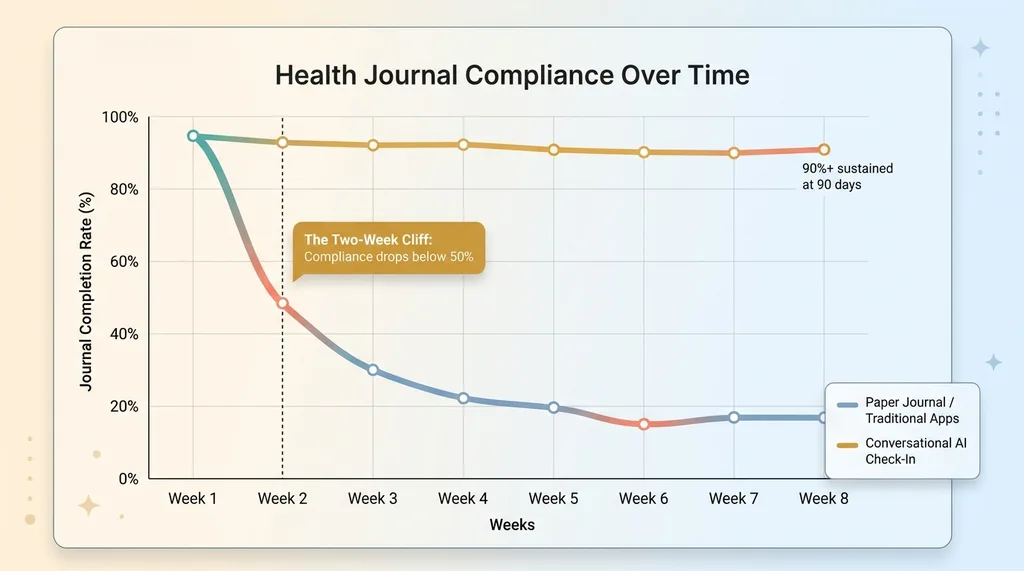

The research backs up exactly what I watched happen in real time. Studies on self-monitoring adherence consistently show health journal compliance drops below 50% within two weeks. By six weeks, you're looking at sub-20% completion rates. This isn't unique to paper — apps like MyFitnessPal and most symptom trackers follow the same dropout curve. The UI is prettier, but the fundamental problem is identical: they require structured input from someone who doesn't think in structured data.

The Two-Week Compliance Cliff

My family member didn't stop tracking because they stopped caring. They stopped because the journal was a second job on top of already feeling terrible.

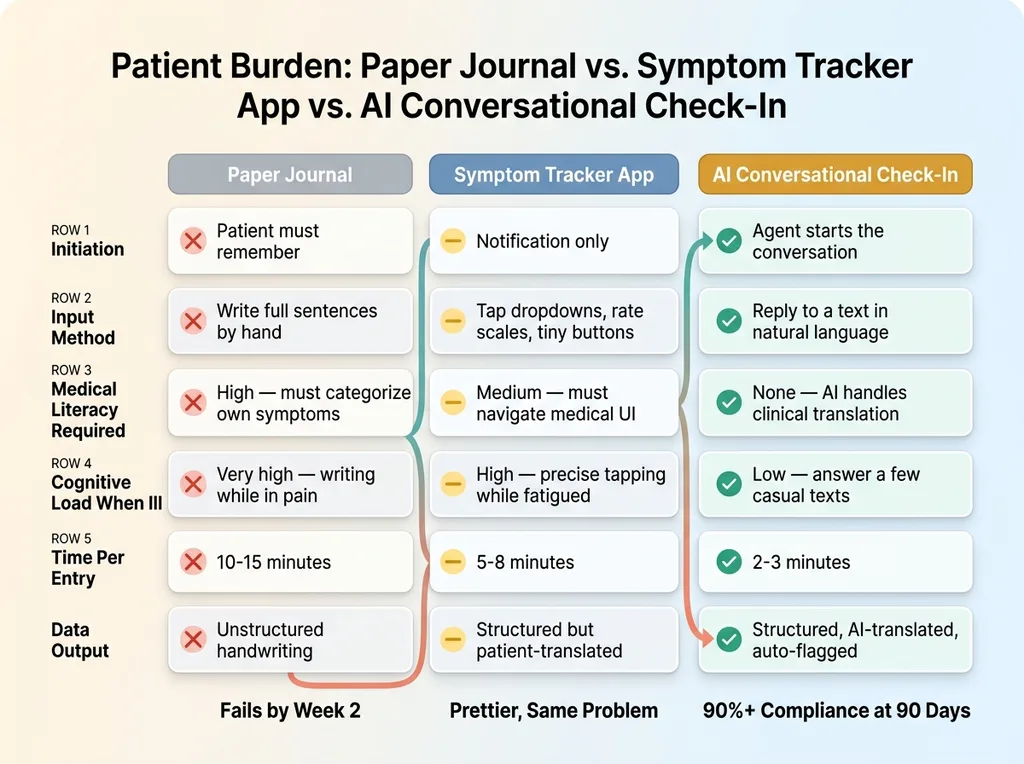

Why Compliance Isn't a Willpower Problem

Think about what a paper health journal actually demands. The patient has to remember what to track without prompting. They have to categorize their own symptoms, which requires a level of medical literacy most people don't have. They have to write coherently when they might be in pain, exhausted, or cognitively foggy from their condition or medications. And they need to do all of this consistently, at roughly the same time each day.

Why Paper Journals and Apps Fail: The Burden Comparison

For elderly patients or anyone dealing with the cognitive load that comes with chronic illness, this is an unreasonable ask. We're blaming people for non-compliance when the tool itself is broken.

The symptom tracker apps aren't much better. They replaced the paper with a screen but kept the same paradigm: pick from dropdowns, rate things on scales, tap tiny buttons. Slightly better UX, same fundamental problem. The patient is still doing the clinical translation work. That's backwards.

What a Conversational AI Health Check-In Actually Looks Like

The Daily Conversation Flow

I built a system that flips the entire model. Instead of waiting for the patient to remember to open a journal, the agent initiates a conversation every morning at a set time via a messaging interface. It starts open-ended:

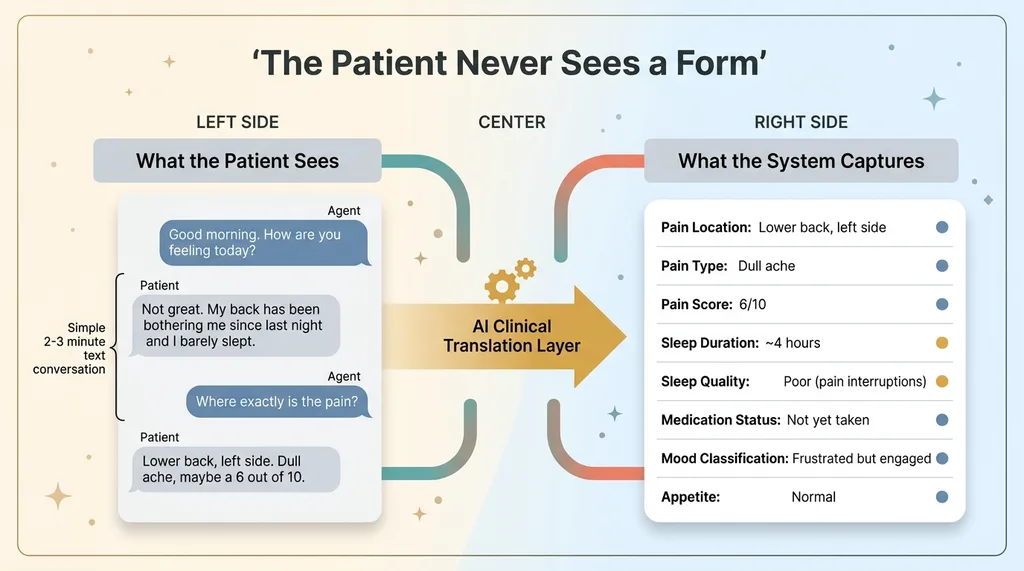

Agent: Good morning. How are you feeling today?

Patient: Not great. My back has been bothering me since last night and I barely slept.

Agent: I'm sorry to hear that. Where exactly is the pain — lower back, upper back, or somewhere else?

Patient: Lower back, left side. It's a dull ache, maybe a 6 out of 10.

Agent: Got it. You mentioned you barely slept — do you think you got less than 4 hours, or somewhere between 4 and 6?

Patient: Probably around 4. I kept waking up because of the pain.

Agent: That's tough. Have you taken your morning medications yet?

Patient: Not yet, about to.

That's it. The whole check-in takes 2-3 minutes. Compare that to 10-15 minutes with a paper journal — which is exactly why people skip it.

The conversation adapts to what the patient actually says. Mention pain, and it follows up on location, intensity, duration. Mention sleep, and it asks about quality and hours. Say "I feel fine" and it runs through a quick baseline set of questions and moves on. It feels like texting with a caring nurse, not filling out a medical form.
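The adaptive follow-up behavior described above can be sketched roughly as follows. This is a minimal illustration, not the production logic: in the real system the language model presumably decides what to ask next, whereas this sketch uses plain keyword matching as a stand-in. The topic names, keywords, and question wording are all assumptions made for the example.

```python
from typing import Dict, List

# Hypothetical topic -> follow-up questions map; the wording is an
# illustrative assumption, not the production prompts.
FOLLOW_UPS: Dict[str, List[str]] = {
    "pain": [
        "Where exactly is the pain?",
        "How would you rate it from 0 to 10?",
        "How long has it been going on?",
    ],
    "sleep": [
        "Roughly how many hours did you sleep?",
        "How would you describe the quality of your sleep?",
    ],
}

# Keywords that signal each topic (a crude stand-in for LLM intent detection).
TOPIC_KEYWORDS: Dict[str, List[str]] = {
    "pain": ["pain", "ache", "hurts", "sore"],
    "sleep": ["sleep", "slept", "tired", "awake"],
}

# Short baseline set used when nothing specific comes up ("I feel fine").
BASELINE_QUESTIONS: List[str] = [
    "Any pain today?",
    "How did you sleep?",
    "Have you taken your morning medications yet?",
]


def detect_topics(message: str) -> List[str]:
    """Return the topics whose keywords appear in the message, in map order."""
    text = message.lower()
    return [
        topic
        for topic, keywords in TOPIC_KEYWORDS.items()
        if any(word in text for word in keywords)
    ]


def next_questions(message: str) -> List[str]:
    """Pick follow-ups for mentioned topics, or fall back to the baseline set."""
    topics = detect_topics(message)
    if not topics:
        return list(BASELINE_QUESTIONS)
    questions: List[str] = []
    for topic in topics:
        questions.extend(FOLLOW_UPS[topic])
    return questions
```

Substring matching is deliberately naive here (it would also match "pain" inside "painting"), which is one reason a language model is a better fit for this step than a keyword list.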

What the Patient Sees vs. What the System Captures

The patient sees a simple text conversation. Behind the scenes, the AI is extracting structured data from every unstructured response: pain scores mapped to a 0-10 scale, sleep duration and quality metrics, mood classification based on language patterns, medication adherence signals, food intake categories when meals come up.
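One way to picture the extraction step is a fixed record schema that the model's output is validated against. The sketch below assumes the model is asked to return JSON; the `CheckInRecord` fields and the `parse_extraction` helper are hypothetical names invented for this example, not the schema the app actually uses.

```python
import json
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class CheckInRecord:
    """Structured fields pulled from one conversation (hypothetical schema)."""
    pain_score: Optional[int] = None          # 0-10 scale
    pain_location: Optional[str] = None
    sleep_hours: Optional[float] = None
    mood: Optional[str] = None
    morning_meds_taken: Optional[bool] = None


def parse_extraction(raw_json: str) -> CheckInRecord:
    """Validate the model's JSON output and turn it into a CheckInRecord.

    Unknown keys are ignored and invalid pain scores are rejected, so a
    malformed model response cannot silently corrupt the record.
    """
    data = json.loads(raw_json)
    allowed = {f.name for f in fields(CheckInRecord)}
    record = CheckInRecord(**{k: v for k, v in data.items() if k in allowed})
    score = record.pain_score
    if score is not None and (not isinstance(score, int) or not 0 <= score <= 10):
        raise ValueError(f"pain_score must be an integer from 0 to 10, got {score!r}")
    return record


# Example: JSON a model might be asked to return for the conversation
# shown earlier in the article.
example = parse_extraction(
    '{"pain_score": 6, "pain_location": "lower back, left side", '
    '"sleep_hours": 4, "mood": "frustrated", "morning_meds_taken": false}'
)
```

Keeping every field optional reflects the conversational design: a check-in where sleep never comes up still produces a valid record, just with `sleep_hours` left empty.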

Patient Conversation vs. Structured Data Extraction

The patient never sees a form. They never pick from a dropdown. They just talk. The system handles all the clinical translation — the exact work that makes paper journals and traditional apps fail.

This is a daily health tracking AI that works because it removes every barrier that causes dropout. No remembering what to track. No medical vocabulary required. No writing full sentences when you feel awful. Just answer a few texts.

The Intelligence Layer: Summaries, Flags, and Mood Over Time

This is the part that separates a real AI health journal from a chatbot with a medical skin.

Daily Summaries for Caregivers

After each check-in, the system generates a plain-English daily summary. As the family caregiver, I can read it in 30 seconds:

"Reported lower back pain (left side, 6/10, dull ache) persisting since last night. Sleep approximately 4 hours with pain-related interruptions. Morning medications not yet taken at time of check-in. Mood: frustrated but engaged. Appetite mentioned as normal."

No log files. No raw conversation transcripts. Just the clinical picture, distilled and readable.

Automatic Flag Generation

The system generates alerts when something is concerning. These aren't arbitrary thresholds I made up — they're based on patterns that matter clinically:

- "Pain score trending up 3 consecutive days" — might indicate a flare or failed treatment

- "Reported skipping evening medication twice this week" — adherence gap that needs attention

- "Sleep duration dropped below 5 hours for 4 of last 7 days" — sustained sleep disruption

- "Mentioned dizziness for the first time in 30 days" — new symptom introduction

Flags are categorized by urgency. Most are informational. Some trigger immediate caregiver notification.
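Two of the flags listed above are simple enough to express as rules over recent history. The following is a sketch under stated assumptions: "trending up 3 consecutive days" is read as three day-over-day increases in a row, and the function names and default thresholds are chosen for illustration rather than taken from the app.

```python
from typing import List


def pain_trending_up(pain_scores: List[int], days: int = 3) -> bool:
    """True if the last `days` readings each rose compared with the day before.

    Needs days + 1 readings so the first rising day has a predecessor.
    """
    if len(pain_scores) < days + 1:
        return False
    recent = pain_scores[-(days + 1):]
    return all(later > earlier for earlier, later in zip(recent, recent[1:]))


def short_sleep(
    sleep_hours: List[float],
    threshold: float = 5.0,
    min_nights: int = 4,
    window: int = 7,
) -> bool:
    """True if sleep fell below `threshold` on at least `min_nights`
    of the last `window` nights."""
    recent = sleep_hours[-window:]
    return sum(1 for hours in recent if hours < threshold) >= min_nights


def evaluate_flags(pain_scores: List[int], sleep_hours: List[float]) -> List[str]:
    """Run each rule over the daily history (oldest first) and collect flags."""
    flags: List[str] = []
    if pain_trending_up(pain_scores):
        flags.append("Pain score trending up 3 consecutive days")
    if short_sleep(sleep_hours):
        flags.append("Sleep duration dropped below 5 hours for 4 of last 7 days")
    return flags
```

Rules like these run on the structured records, not the raw conversation, which is why the extraction step has to be reliable before flagging is worth trusting.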

Mood and Symptom Trend Analysis

Here's where it gets genuinely useful. The AI classifies emotional state from conversational cues — word choice, sentence length, response latency, topics volunteered versus pulled. It's not asking "rate your mood 1-10," which produces useless data because people anchor to whatever they said yesterday.

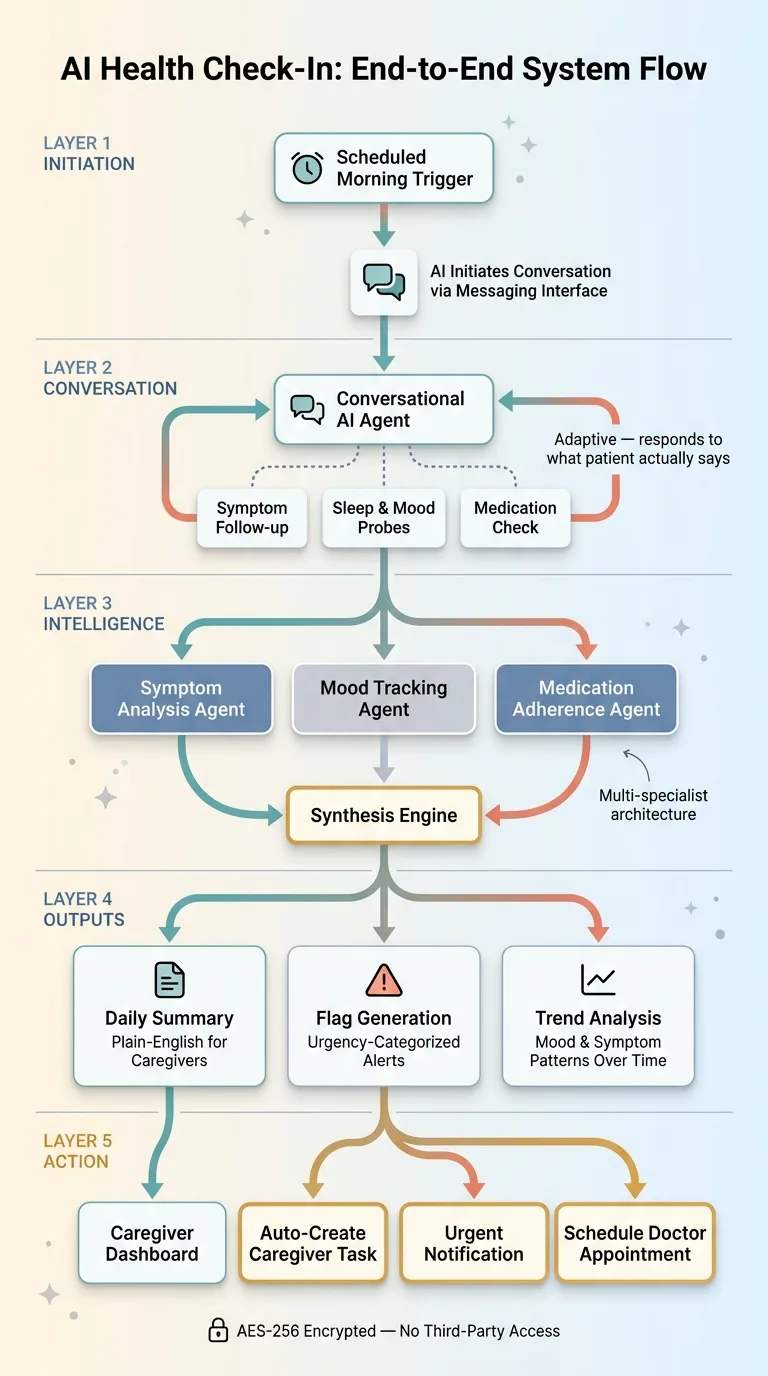

Over weeks, this creates a mood trajectory that reveals patterns invisible in daily snapshots. I built this on a multi-specialist architecture, similar to an AI medical team, in which separate agents handle symptom analysis, mood tracking, and medication adherence independently before their outputs are synthesized.
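A mood trajectory of this kind can be approximated by smoothing daily classifications over a trailing window. The sketch below assumes each day's mood has already been classified into a label; the three labels, their numeric values, and the 7-day default window are illustrative assumptions, not the app's actual scale.

```python
from typing import Dict, List

# Hypothetical mapping from mood labels to a numeric valence.
MOOD_VALENCE: Dict[str, int] = {"low": -1, "neutral": 0, "good": 1}


def rolling_mood(labels: List[str], window: int = 7) -> List[float]:
    """Trailing mean of mood valence, one value per day.

    Early days average over whatever history exists so far, so the output
    has the same length as the input.
    """
    scores = [MOOD_VALENCE[label] for label in labels]
    averages: List[float] = []
    for i in range(len(scores)):
        chunk = scores[max(0, i - window + 1): i + 1]
        averages.append(sum(chunk) / len(chunk))
    return averages
```

A single "low" day barely moves the smoothed line, while a run of them pulls it down steadily, which is the kind of pattern a daily snapshot hides.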

The caregiver dashboard shows summaries, flags, and trends in one view. My family member's doctor was initially skeptical. After seeing two months of data — organized, trended, and flagged — they asked if other patients could use it.

Auto-Task Creation: When the Check-In Triggers Action

From Symptom to Task in Seconds

The check-in doesn't just record data. It creates action. If the patient mentions they're running low on a prescription, a task is automatically created for the caregiver to call the pharmacy. If a flag triggers — sustained elevated pain, for instance — a task is created to schedule a doctor appointment. If the patient mentions a fall or near-fall, an urgent notification goes out immediately.
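The mapping from detected events to caregiver tasks might look something like the sketch below. The event names, the `Task` and `Urgency` types, and the task titles are hypothetical; they only mirror the three examples given above.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List


class Urgency(Enum):
    ROUTINE = "routine"
    URGENT = "urgent"


@dataclass(frozen=True)
class Task:
    title: str
    urgency: Urgency


# Hypothetical event names that an upstream extraction step would emit.
EVENT_TO_TASK: Dict[str, Task] = {
    "prescription_running_low": Task("Call the pharmacy for a refill", Urgency.ROUTINE),
    "sustained_elevated_pain": Task("Schedule a doctor appointment", Urgency.ROUTINE),
    "fall_or_near_fall": Task("Check on patient: fall or near-fall reported", Urgency.URGENT),
}


def tasks_for(events: List[str]) -> List[Task]:
    """Map detected events to caregiver tasks, urgent ones first.

    Unrecognized events are skipped; sorting is stable, so tasks of equal
    urgency keep the order in which their events were detected.
    """
    tasks = [EVENT_TO_TASK[event] for event in events if event in EVENT_TO_TASK]
    return sorted(tasks, key=lambda task: task.urgency is not Urgency.URGENT)
```

Separating "what happened" (events) from "what to do" (tasks) keeps the conversational layer free of workflow details and makes the urgency rules easy to review.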

Full System Architecture: From Check-In to Action

In production, this works through the Model Context Protocol (MCP), which connects the conversational agent to the task management and notification systems. The conversation layer talks to the action layer without any manual bridging.
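For readers unfamiliar with the protocol: MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method with a tool name and an arguments object. The sketch below only builds that request body. The `create_task` tool and its argument names are assumptions for illustration, since the article does not show which tools the task system exposes, and a real integration would normally go through an MCP client library, not hand-built JSON.

```python
import json
from typing import Any, Dict


def build_tool_call(request_id: int, tool_name: str, arguments: Dict[str, Any]) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })


# Hypothetical tool and arguments, for illustration only.
message = build_tool_call(
    1,
    "create_task",
    {"title": "Call the pharmacy for a refill", "urgency": "routine"},
)
```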

Medication and Appointment Reminders

The system also handles proactive outreach: medication timing reminders, upcoming appointment alerts, even hydration prompts adjusted for local weather data. This turns a passive journal into an active care coordination tool.

One specific example that justified the entire build: during a routine morning check-in, the patient mentioned they'd started taking a turmeric supplement a friend recommended. The AI flagged this against their existing prescription list and identified a potential interaction with their blood thinner. That flag went to me within minutes. I called their doctor that afternoon. The doctor confirmed it was a real risk and told them to stop the supplement immediately.

A paper journal would never have caught that. The patient wouldn't have thought to mention it at their next appointment, which was six weeks away.
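The supplement check in that story boils down to comparing a newly mentioned item against the existing prescription list. Here is a minimal sketch of that comparison, assuming a hand-written lookup set. The single entry comes from the anecdote above and is not clinical reference data; a real system would need a maintained interaction source, and how the app actually performs this check is not described here.

```python
from typing import FrozenSet, List, Set

# Illustrative only: one pair taken from the anecdote above.
# NOT a clinical reference; the set and its contents are assumptions.
KNOWN_INTERACTIONS: Set[FrozenSet[str]] = {
    frozenset({"turmeric", "blood thinner"}),
}


def check_new_item(new_item: str, current_meds: List[str]) -> List[str]:
    """Return the current medications that have a listed interaction
    with the newly mentioned item (case-insensitive match)."""
    new = new_item.strip().lower()
    return [
        med
        for med in current_meds
        if frozenset({new, med.strip().lower()}) in KNOWN_INTERACTIONS
    ]
```

Anything this returns would go to a human as a flag, as in the story: the system raises the question and the doctor makes the call.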

Privacy and Data Control: Why I Built This Instead of Buying It

Consumer Health Apps Have a Data Problem

Why not just use an existing health tracking app? Three reasons, and they're not small.

First, consumer health apps sell data. This isn't speculation — studies consistently show 80%+ of mental health and wellness apps share user data with third parties. For a family member's daily health conversations — including medication details, mood states, symptom descriptions — that's not a tradeoff I'm willing to make.

Second, existing apps don't do the intelligence layer. They track. They don't think. They give you charts you have to interpret yourself. The gap between "data collected" and "insight delivered" is exactly where the value lives, and commercial apps don't cross it.

Third, custom means the system adapts to this specific person's conditions, medications, and care team. Not a generic symptom list. Not a one-size-fits-all questionnaire. A system that knows their prescriptions, their baseline pain levels, their doctor's specific concerns.

AES-256 Encryption for Health Conversations

Every conversational log is encrypted using AES-256 at rest. Only designated family members and caregivers can access summaries. The LLM processes the conversation but doesn't retain it. No training on the data. No third-party access. No dark patterns trying to upsell a premium tier.

This is the real advantage of building your own conversational health AI: total control over the most sensitive data someone has. Their health, their medications, their emotional state — stored exactly where you decide, accessible only to who you decide.

Results After 90 Days: What Changed

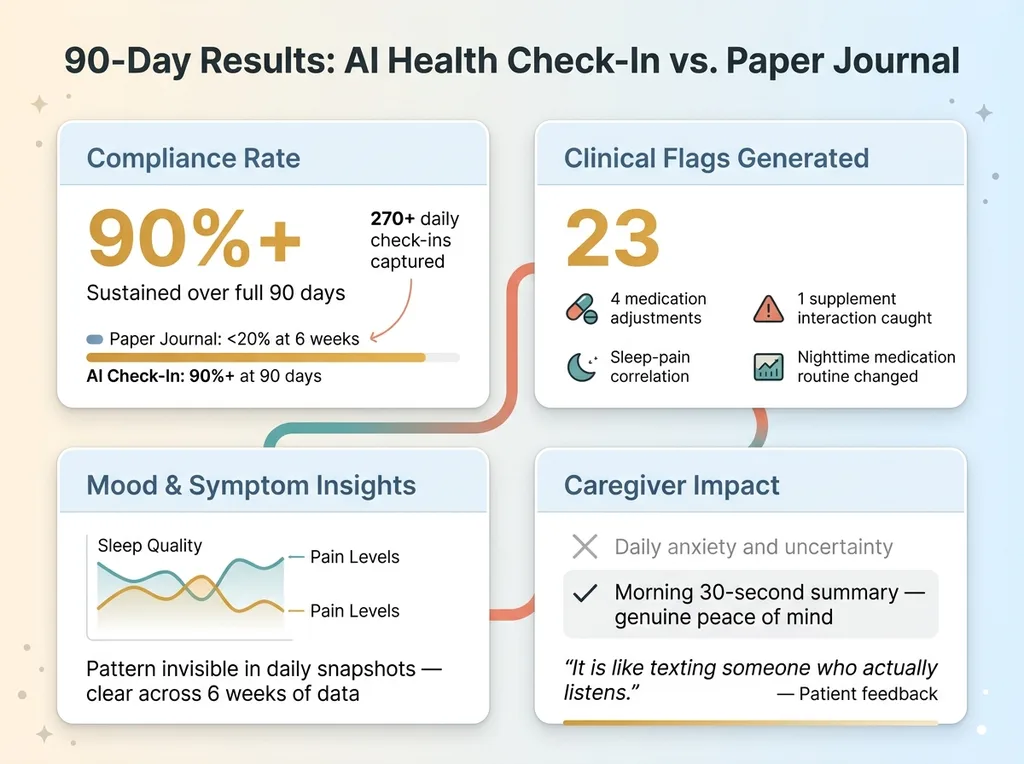

The numbers tell the story.

90-Day Results Comparison

Compliance: Check-in completion rate held above 90% for the full 90 days. That's against a baseline of sub-20% with the paper journal at the six-week mark. The system captured 270+ daily check-ins — a dataset that would have been physically impossible to build with pen and paper.

Clinical value: 23 flags generated over 90 days. 4 of those led directly to medication adjustments after the doctor reviewed the trend data. One caught the supplement interaction I mentioned. Another identified that pain consistently spiked on days following poor sleep, which led to a change in the nighttime medication routine that actually helped.

Mood insights: The trend analysis revealed a correlation between sleep quality and pain levels that wasn't obvious in daily snapshots but became unmistakable across six weeks of data. This gave the doctor something actionable — not a patient saying "I've been feeling worse," but structured evidence showing exactly when and why.

Caregiver impact: I went from daily anxiety about "how are they really doing" to a 30-second morning summary that gave genuine peace of mind. On hard days, I could see it. On good days, I could see that too. The uncertainty was the worst part, and the system eliminated it.

When I asked the patient what they thought, they said: "It's like texting someone who actually listens."

That's the design goal. Not a medical device. Not a replacement for doctors. A compliance tool that works because it meets people where they are — in a conversation, not a spreadsheet.

Where Conversational Health AI Goes From Here

Elder Care and Chronic Disease Management

The architecture I built for one person scales to many. The same system works for elder care facilities monitoring dozens of residents, chronic disease management programs where compliance determines outcomes, employee wellness programs that actually get used instead of collecting dust, and post-surgical recovery tracking where early flag detection prevents costly readmission.

The pattern is always the same: replace structured input that humans won't complete with conversation that humans naturally engage with, then let AI do the structuring. It works because it's aligned with how people actually communicate, not how databases want to receive information.

Building This for Your Organization

For organizations managing patient populations or employee health, this is a real system that produces real data. Not a wellness app that gets deleted after a week. The first version took a weekend to build. Hardening it for production took a few weeks. I know because I did it.

If you're running a care facility, managing a chronic disease program, or just trying to build something that actually tracks health outcomes instead of collecting dust, this is the kind of project I take on.

Want to Explore What AI Could Do for Your Organization?

I built this AI health check-in app because someone I love needed it and nothing on the market came close. But every week I talk to business leaders with their own version of this problem — a process that depends on human compliance, generates messy data, and fails silently until something goes wrong.

If that sounds familiar, let's talk. I do a free 30-minute strategy call — no pitch deck, no sales team, just a real conversation about your operations and where AI actually fits.
