Teaching Claude About Custom Manufacturing Specs

How I built an AI domain knowledge chatbot for a custom manufacturer — streaming responses grounded in real specs, not hallucinations.

By Mike Hodgen

A custom manufacturing client came to me with a problem that was costing them deals every week. Their customers — engineers, procurement teams, project managers — would call or email with questions about materials, tolerances, lead times, custom sizing options, and pricing tiers. The sales team was three people. Response time was measured in hours on a good day, sometimes stretching to the next morning. And the real knowledge? It lived in spreadsheets, PDFs buried in shared drives, and the heads of two guys who'd been with the company for 15 years.

Every slow response was a lost quote opportunity. Every wrong answer about a spec was a liability. This wasn't a problem you could solve with a generic FAQ page or a standard chatbot. This was an AI domain knowledge chatbot problem — one that required precision, constraint, and deep integration with the actual data that defined what this company could and couldn't build.

A standard ChatGPT wrapper would hallucinate tolerances and material properties. In most contexts, that's annoying. In manufacturing, it's dangerous. A customer makes a purchasing decision based on a wrong spec, that part fails in the field, and you've got a recall on your hands. Or worse.

So the question wasn't "should we add AI chat to the site?" It was "can we build something that's actually trustworthy enough to put in front of customers who are making engineering decisions?"

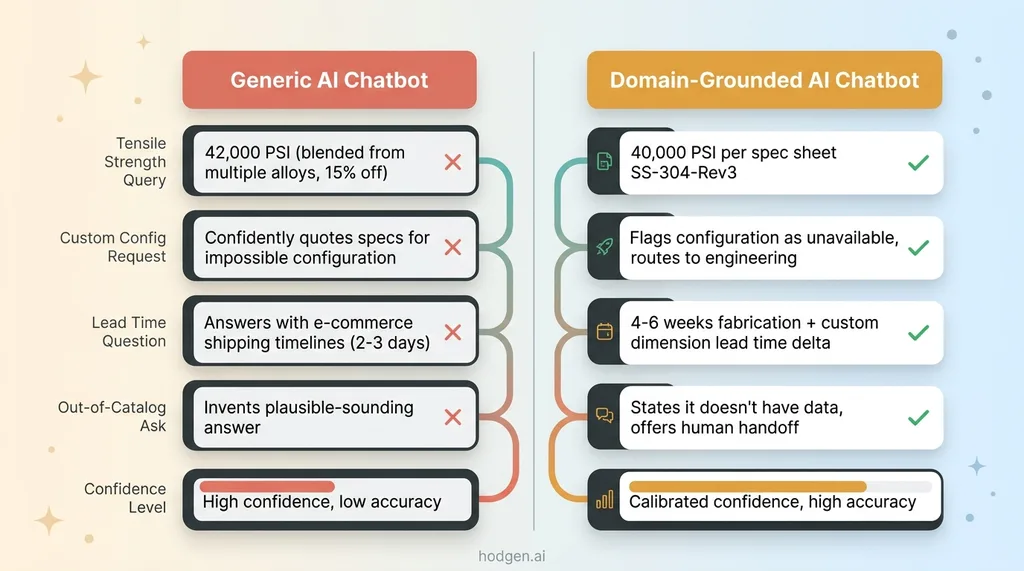

Why Generic AI Chatbots Fail in Manufacturing

The Hallucination Problem With Specs and Tolerances

Ask ChatGPT about the tensile strength of 6061-T6 aluminum in a specific gauge. It'll give you a confident answer. That answer might be 15% off because it's blending data from different sources, different treatment conditions, different testing standards. In most business applications, 15% off is a rounding error. In manufacturing, 15% off means a structural component fails under load.

This is the fundamental problem with general-purpose LLMs handling domain-specific technical data. They don't retrieve — they generate. They synthesize patterns from training data and produce something plausible. Plausible isn't good enough when someone is spec'ing a part that goes into a load-bearing assembly.

Generic chatbots also interpolate across contexts. A customer asks about lead time for a custom order and gets an answer trained on e-commerce fulfillment timelines. The model doesn't know the difference between shipping a product off a shelf and fabricating a custom component from raw material. It just patterns its way to something that sounds reasonable.

The 'Close Enough' Trap

The most dangerous failure mode isn't obviously wrong answers. It's answers that are close enough to seem right but wrong enough to cause problems.

Generic vs Domain-Grounded AI Response Comparison

A customer asks about a specific product configuration — say, a particular material in a particular thickness with a particular finish. The AI needs to know which combinations are actually possible versus physically impossible or just not offered. A generic model would happily quote specs for a configuration that can't be manufactured. It would describe lead times and pricing for something the shop floor would reject in five seconds.

This is where model choice comes in. I use different models for different jobs, and Claude was the right choice for this conversational layer because of its instruction-following precision: it followed constraints better than the alternatives I tested. But the model choice is only 20% of the solution. The other 80% is architecture.

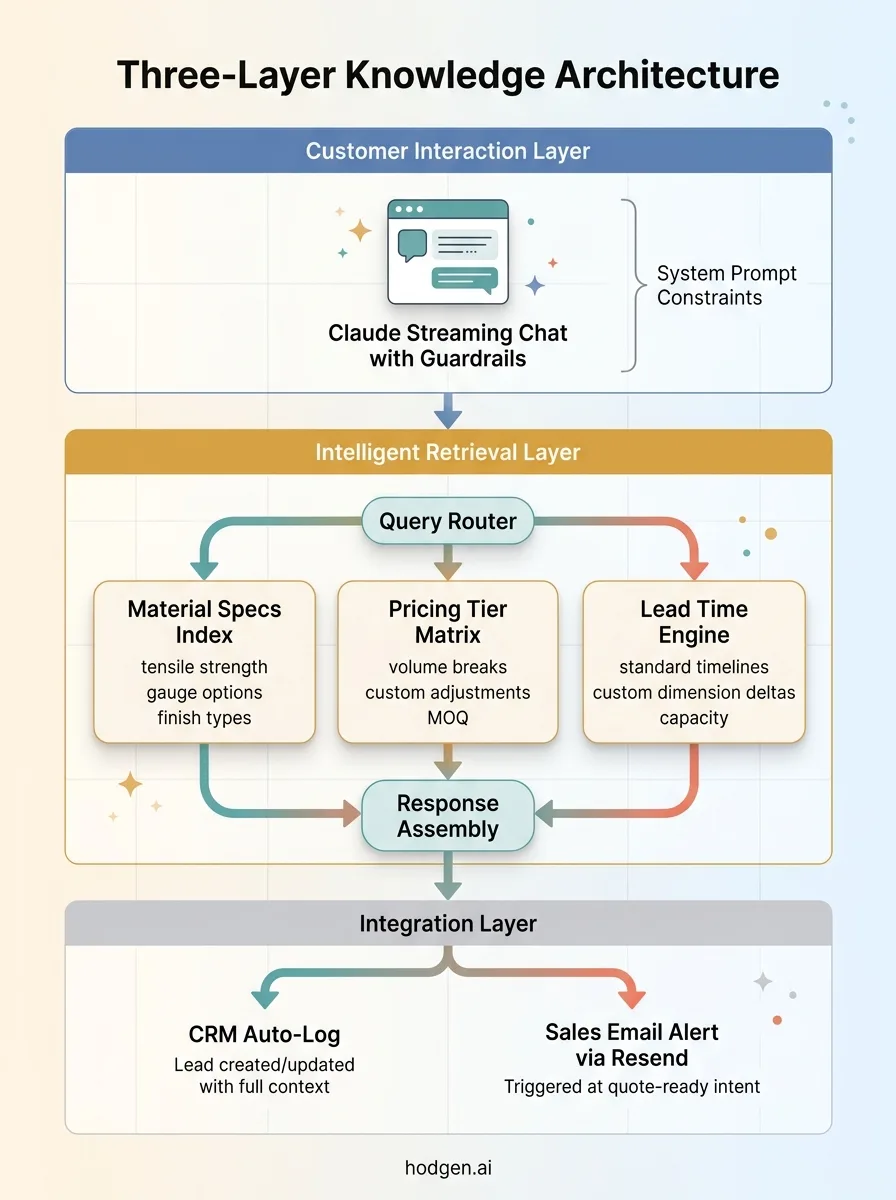

The Architecture: Grounding Claude in Real Manufacturing Data

Structured Knowledge Layer

The foundation of this system isn't the AI model. It's the knowledge layer underneath it.

Three-Layer Knowledge Architecture

I took the client's product catalogs, material specification sheets, tolerance tables, pricing tier structures, and lead time matrices and organized them into a structured, indexed knowledge base. I didn't just dump everything into a vector database and hope for the best. Each data category was segmented so the retrieval system could pull precise answers from the right context.

Material specs live in one structured layer. Pricing tiers in another. Lead time calculations in a third. When a customer asks a question that touches multiple domains — "What's the lead time and cost for this material in this custom dimension?" — the system queries each layer independently and assembles a coherent response.

This is the same pattern I used when I built a knowledge base with AI search for a professional services firm. The principle is identical: structured data in, precise retrieval out. The domain is different, but the architecture translates directly.
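To make the layered lookup concrete, here is a minimal sketch in Python. It is not the client's code: the `Fact` type, the `LAYERS` dictionary, the `retrieve` function, the file names, and the tier label are all assumptions made up for illustration. It shows one idea only: each layer is queried on its own, and any layer with no matching record is reported as missing rather than filled in.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fact:
    layer: str   # knowledge layer the fact came from
    text: str    # the fact as stored in the source data
    source: str  # document the answer can be traced back to


# Placeholder records. The material, gauge, and lead time delta echo the
# article's example; the file names and tier label are invented.
KEY = ("316 stainless", "14 gauge")
LAYERS: dict[str, dict[tuple[str, str], Fact]] = {
    "materials": {KEY: Fact("materials", "316 stainless is offered in 14 gauge.", "material-specs.pdf")},
    "pricing": {KEY: Fact("pricing", "Pricing follows tier B.", "pricing-tiers.xlsx")},
    "lead_time": {KEY: Fact("lead_time", "Custom dimensions add 7-10 business days.", "lead-times.xlsx")},
}


def retrieve(key: tuple[str, str], layers_needed: list[str]) -> tuple[list[Fact], list[str]]:
    """Query each requested layer independently.

    Returns the facts that were found and the names of layers with no record,
    so the caller can decide whether to answer or hand off to a human.
    """
    found: list[Fact] = []
    missing: list[str] = []
    for name in layers_needed:
        fact = LAYERS[name].get(key)
        if fact is None:
            missing.append(name)
        else:
            found.append(fact)
    return found, missing
```

Returning the missing layers explicitly is a deliberate choice in this sketch: a question that touches pricing and lead time should not get a confident answer when only one of the two layers has data.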

Streaming Chat With Guardrails

The customer-facing layer uses Claude with streaming responses. This matters more than people think. An 8-second loading spinner feels like the system is broken. A streaming response that builds sentence by sentence feels conversational. It keeps people engaged instead of bouncing back to email.

But the real engineering is in the guardrails. The system prompt constrains Claude to only the provided knowledge. If the answer isn't in the knowledge base, Claude says so explicitly and routes to a human. There's no "let me try to help anyway" behavior. The AI either knows from the structured data or it doesn't.
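A rough sketch of that guardrail, under stated assumptions: the rule text, `HANDOFF_MESSAGE`, `build_system_prompt`, and `answer` are hypothetical names and wording, not the production prompt. The model call is passed in as a plain function (`call_model`) so the sketch does not depend on any particular SDK. The point it illustrates is that the "not in the knowledge base" path never reaches the model at all.

```python
from typing import Callable, Optional

# Hypothetical wording, modeled on the handoff reply described in the article.
HANDOFF_MESSAGE = (
    "I don't have that in our documented specifications. "
    "I'd like to connect you with our team. Can I grab your email?"
)

# Hypothetical rules; the real system prompt is not shown in the article.
SYSTEM_RULES = (
    "Answer only from the FACTS section below.\n"
    "Name the source document for every fact you use.\n"
    "If the FACTS do not cover the question, say so and offer a human handoff.\n"
)


def build_system_prompt(facts: list[tuple[str, str]]) -> Optional[str]:
    """Build a constrained prompt from (text, source) pairs, or None if there are no facts."""
    if not facts:
        return None
    lines = [f"- {text} [source: {source}]" for text, source in facts]
    return SYSTEM_RULES + "\nFACTS:\n" + "\n".join(lines)


def answer(
    question: str,
    facts: list[tuple[str, str]],
    call_model: Callable[[str, str], str],
) -> str:
    """Return the handoff message without calling the model when nothing was retrieved."""
    prompt = build_system_prompt(facts)
    if prompt is None:
        return HANDOFF_MESSAGE
    return call_model(prompt, question)
```

In a real deployment `call_model` would wrap the streaming request to Claude; here it is left abstract on purpose.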

CRM and Email Integration

Every conversation gets logged automatically. When a customer asks about a specific product configuration, the system creates or updates a lead in the CRM with the full conversation context. Product interest, specifications discussed, custom requirements mentioned — all captured without anyone on the sales team typing a word.

When the conversation reaches a quote-worthy point — the customer has identified a specific product, discussed specs, and expressed purchase intent — it triggers an email to the sales team via Resend with the complete context. The sales rep picks up where the AI left off. No "can you tell me again what you're looking for?" The rep has the full picture before they even pick up the phone.
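The quote-worthy check can be pictured as a small predicate over conversation state. The sketch below is an assumption about how such a check might look, not the deployed logic: `ConversationState`, `is_quote_worthy`, and `build_sales_notification` are hypothetical names, and the actual send step (Resend, in the article) is left out so that no API details are invented here.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ConversationState:
    product: Optional[str] = None                               # specific product identified
    specs_discussed: list[str] = field(default_factory=list)    # e.g. gauge, finish, width
    purchase_intent: bool = False                               # customer signaled intent to buy
    email: Optional[str] = None
    transcript: list[str] = field(default_factory=list)


def is_quote_worthy(state: ConversationState) -> bool:
    """All three conditions from the article: product, specs, and purchase intent."""
    return (
        state.product is not None
        and len(state.specs_discussed) > 0
        and state.purchase_intent
    )


def build_sales_notification(state: ConversationState) -> dict[str, str]:
    """Assemble the context a sales rep needs; sending the email is out of scope here."""
    body = "\n".join(
        [
            f"Product: {state.product}",
            f"Specs discussed: {', '.join(state.specs_discussed)}",
            f"Customer email: {state.email or 'not provided'}",
            "",
            "Transcript:",
            *state.transcript,
        ]
    )
    return {"subject": f"Quote-ready lead: {state.product}", "body": body}
```

Keeping the trigger as an explicit predicate, rather than asking the model whether a lead "feels ready", makes the behavior easy to test and to explain to the sales team.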

Constraining AI to What It Actually Knows

This is the section that matters most if you're evaluating whether this approach is viable for your business.

The System Prompt That Prevents Invention

"Don't hallucinate" is not an instruction an AI can follow. It's like telling someone "don't think about elephants." You need structural constraints, not wishes.

The system prompt I built for this client operates on explicit category rules. It defines what the AI is authorized to speak about: specific product lines, specific materials in the catalog, specific customization options that exist, published pricing tiers, and documented lead times. Anything outside those categories gets a defined response pattern.

For material specifications, the AI must reference the specific data point from the knowledge base. It doesn't paraphrase — it cites. "Our 304 stainless steel in 16 gauge has a minimum tensile strength of X per [source document]." This isn't just for accuracy. It's for auditability. When a customer makes a decision based on a spec the AI provided, you need to trace that answer back to the source.

For questions at the boundary — "can you do this material in a non-standard thickness?" — the system has rules about what constitutes a known capability versus an unknown. If the custom range is documented, the AI answers. If it's not, the AI says so and offers to connect the customer with engineering.
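One way to express the known-versus-unknown rule in code, as a sketch only. `Capability`, `WidthLimit`, `CUSTOM_WIDTH_LIMITS`, and `check_custom_width` are hypothetical names, and the source file name is a placeholder; the 48-inch figure is the documented maximum from the interaction example later in this article. Note that a width outside the documented range is treated as unknown, not as impossible, so it routes to engineering instead of producing a "no".

```python
from dataclasses import dataclass
from enum import Enum


class Capability(Enum):
    DOCUMENTED = "documented"
    UNKNOWN = "unknown"


@dataclass(frozen=True)
class WidthLimit:
    max_width_in: float  # largest custom width documented for the material
    source: str          # where that limit is written down


# Placeholder data: one material, with an invented source file name.
CUSTOM_WIDTH_LIMITS = {
    "316 stainless": WidthLimit(max_width_in=48.0, source="custom-sizing.pdf"),
}


def check_custom_width(material: str, width_in: float) -> tuple[Capability, str]:
    """Answer only when the requested width falls inside a documented range."""
    limit = CUSTOM_WIDTH_LIMITS.get(material)
    if limit is None or not (0 < width_in <= limit.max_width_in):
        return (
            Capability.UNKNOWN,
            "I don't have documentation for that width. "
            "I can connect you with engineering to evaluate it.",
        )
    return (
        Capability.DOCUMENTED,
        f"A custom width of {width_in:g} inches is available for {material} "
        f"(documented up to {limit.max_width_in:g} inches per {limit.source}).",
    )
```

The reply for the documented case carries the source name, which is what makes the answer auditable later.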

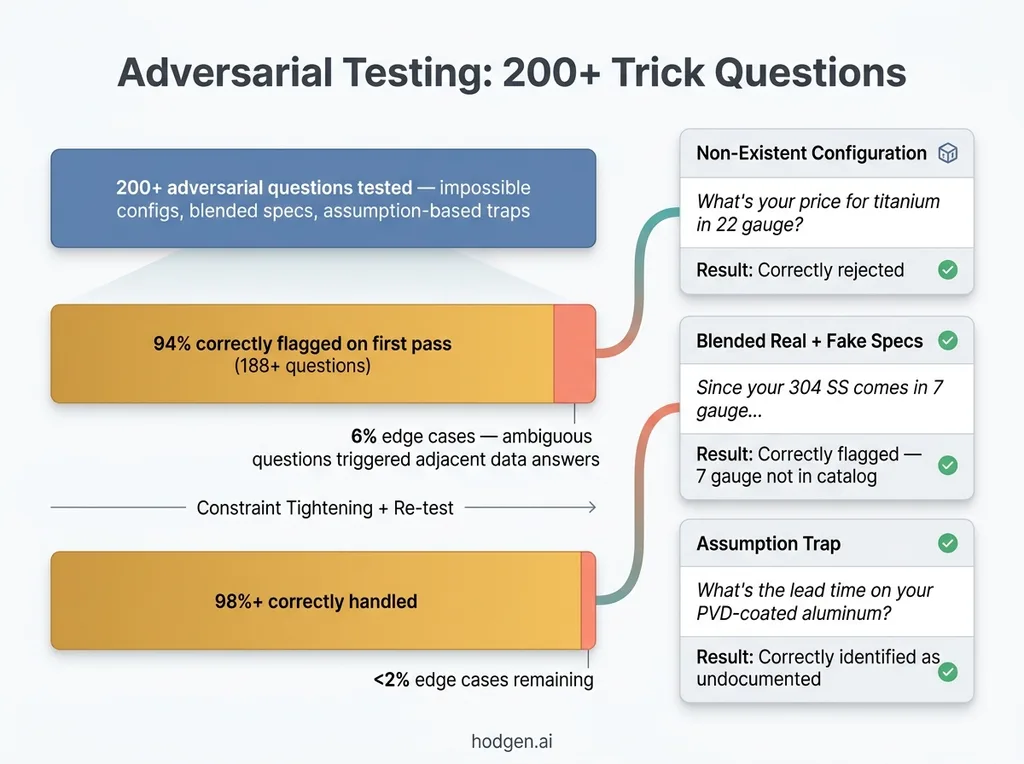

Testing With Adversarial Questions

I fed the system over 200 adversarial questions designed to trick it into inventing specs. Questions about configurations that don't exist. Questions that blend real product names with impossible specifications. Questions phrased as assumptions: "Since you offer X in Y configuration, what's the pricing on that?" when that configuration has never existed.

Adversarial Testing and Constraint Accuracy Results

A poorly constrained system would answer all of these confidently. The system I built flagged 94% of them correctly on the first pass. The remaining 6% were edge cases where the question was ambiguous enough that the AI attempted an answer using adjacent data. I tightened the constraints, re-tested, and got that down to under 2%.
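A test harness for this kind of check can be very small. The sketch below is illustrative, not the harness I ran: `looks_like_refusal`, `flag_rate`, and the marker phrases are assumptions, and matching on phrases is a crude stand-in for whatever scoring a real suite would use. `ask` is any function that sends a question to the chatbot and returns its reply.

```python
from typing import Callable

# Hypothetical phrases that indicate the bot declined and offered a handoff.
REFUSAL_MARKERS = ("i don't have", "connect you with")


def looks_like_refusal(reply: str) -> bool:
    """Crude check: did the reply decline rather than invent a spec?"""
    lowered = reply.lower()
    return any(marker in lowered for marker in REFUSAL_MARKERS)


def flag_rate(questions: list[str], ask: Callable[[str], str]) -> float:
    """Fraction of adversarial questions the system correctly declined."""
    if not questions:
        raise ValueError("need at least one question")
    flagged = sum(1 for q in questions if looks_like_refusal(ask(q)))
    return flagged / len(questions)
```

As a worked example of the arithmetic: on a hypothetical suite of exactly 200 questions, a 94% first-pass rate means 188 declined and 12 slipped through, and getting under 2% means no more than 3 slipping through.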

This connects to a broader pattern I've written about — building AI that rejects its own bad work. The hardest part of AI engineering isn't making the system smarter. It's making it honest about what it doesn't know. Anyone can make Claude talk about manufacturing. Making it stop talking when it doesn't have the data — that's where the real work is.

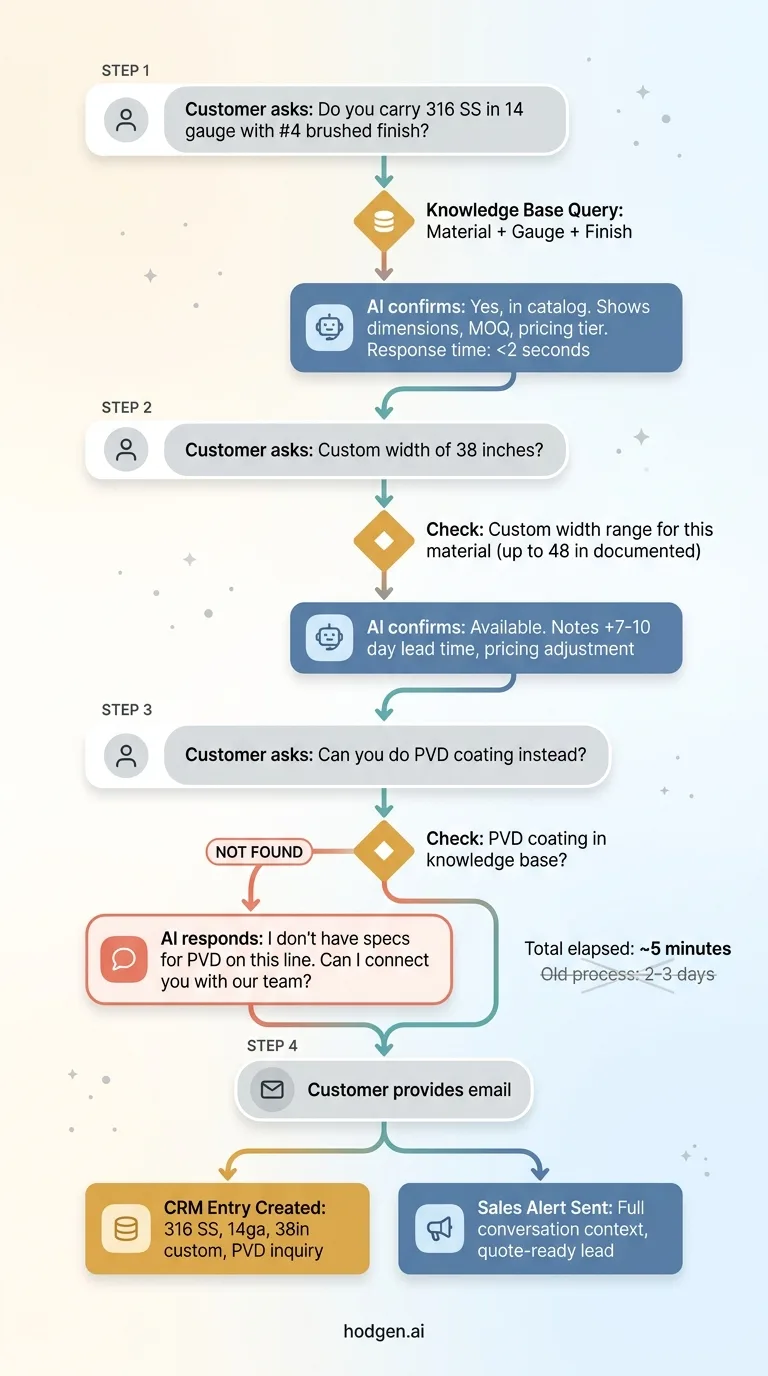

What the Customer Experience Actually Looks Like

Here's a real interaction flow.

Real Customer Interaction Flow

A procurement engineer lands on the site. Opens the chat widget. Types: "Do you carry 316 stainless in 14 gauge with a #4 brushed finish?"

The AI pulls from the knowledge base and responds in under two seconds with the available options, confirming the material, gauge, and finish are in the product catalog. It includes standard dimensions, minimum order quantities, and the pricing tier.

The engineer asks: "What about a custom width of 38 inches?"

The AI checks the knowledge base. Custom widths are documented up to 48 inches for this material. It confirms the custom width is available, notes the lead time delta for custom dimensions (7-10 additional business days), and provides the approximate pricing adjustment.

Then the engineer asks: "Can you do this with a PVD coating instead of brushed?"

The AI doesn't have PVD coating in the knowledge base for this product line. Instead of inventing an answer, it responds: "I don't have specifications for PVD coating on this product line. I'd like to connect you with our team who can evaluate that for you. Can I grab your email?"

Engineer provides email. CRM entry created. Sales notification sent with the full conversation — material, gauge, custom width, coating question, all of it. The sales rep follows up within the hour with complete context.

Compare that to the old flow: engineer sends an email, waits 6 hours, gets a partial answer, emails back with follow-up questions, waits overnight. What used to be a 2-3 day back-and-forth now starts producing qualified leads in the first 5 minutes.

Results and What Surprised Me

Response time for spec questions went from hours to seconds. That alone changed the close rate on inbound inquiries because the client was getting back to prospects while they were still in buying mode instead of after they'd already emailed two competitors.

The sales team started receiving pre-qualified leads with complete context instead of cold inbound emails that required three rounds of back-and-forth to understand what the customer actually needed. One sales rep told me she was saving over an hour a day just on initial qualification conversations.

The first surprise: customers engaged with the AI chat far more than expected. The client assumed most visitors would ignore it or use it for basic directions. Instead, technical buyers were having substantive spec conversations. The streaming interface felt conversational, not robotic. People treated it like talking to a knowledgeable person rather than a search bar.

The second surprise was more counterintuitive. The constraint system — the thing that prevents the AI from answering questions it shouldn't — actually built more trust than a system that tried to answer everything. Customers noticed when the AI said "I'm not sure about that, let me connect you with someone who is." It made them trust the answers it did give.

One caveat I'm upfront about: this isn't set-and-forget. When the manufacturer adds new products or changes specs, the knowledge base needs updating. I built the ingestion pipeline to make that straightforward (structured data goes in, updated AI behavior comes out), but someone still has to maintain the source of truth. The AI is only as good as the data you feed it.

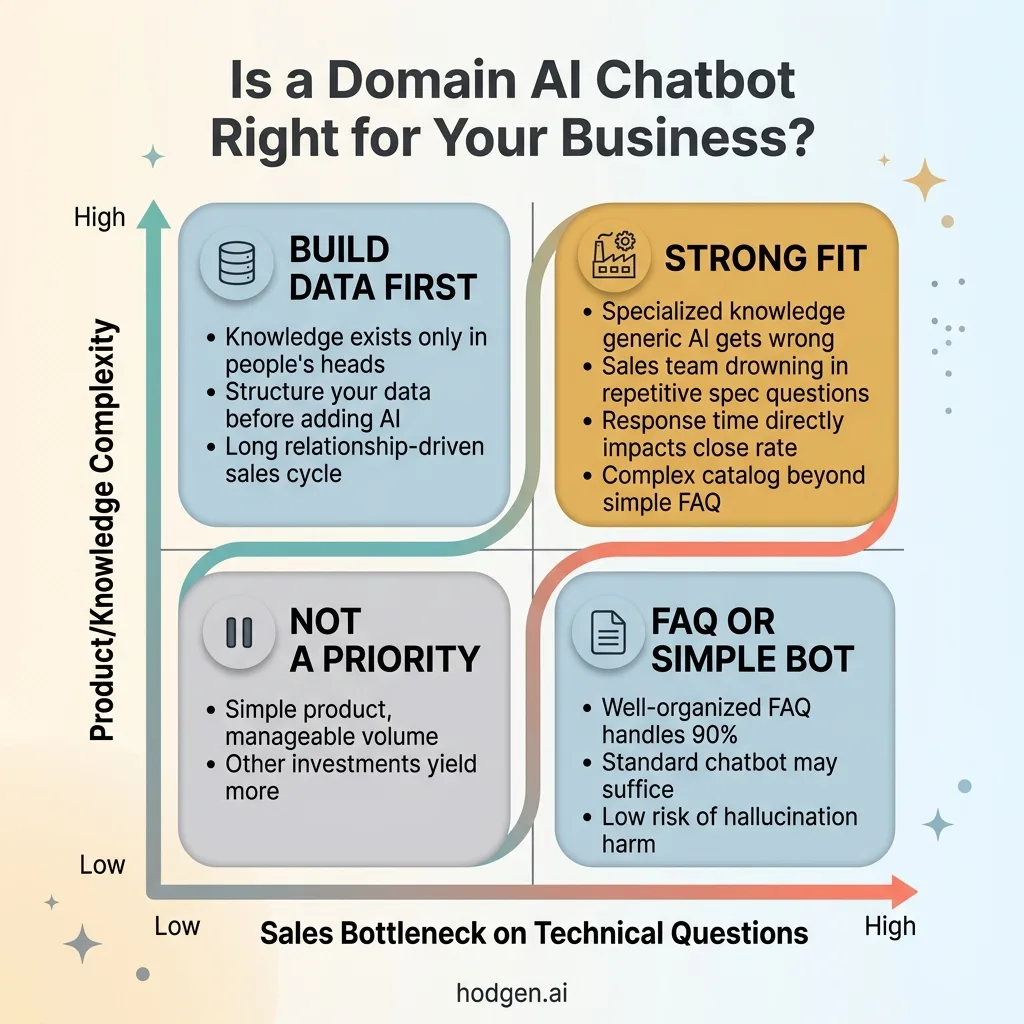

When Domain-Specific AI Chat Makes Sense (And When It Doesn't)

This approach works when you have specialized knowledge that generic AI gets wrong. When your sales team is bottlenecked on repetitive technical questions. When response time directly correlates to close rate. And when your product catalog has enough complexity that a simple FAQ won't cut it.

When Domain AI Chat Makes Sense Decision Framework

It doesn't make sense when your product is simple enough that a well-organized FAQ page handles 90% of questions. Or when you don't have structured data to ground the AI in — if the knowledge only exists in someone's head and nowhere else, the AI domain knowledge chatbot isn't the first thing you build. The data structure is. It also won't move the needle if your sales cycle is so long and relationship-driven that the initial response time isn't the bottleneck.

I'm honest about the investment too. This isn't a weekend project. The knowledge structuring alone takes real effort. Getting the constraints right takes adversarial testing and iteration. Integration with CRM and email notification systems takes engineering time.

But for the right business, this is the difference between a sales team that spends 60% of their time answering the same technical questions over and over and one that focuses on closing deals with pre-qualified, context-rich leads.

Want to See How This Would Work for Your Operation?

If this pattern matches what your team is dealing with — specialized knowledge, technical buyers, sales bottleneck on repetitive questions — I'd be happy to walk through how this would work for your specific operation.

Book a free 30-minute strategy call. No pitch deck, no sales team on the other end. Just a real conversation about your operations and where a system like this fits.
