Building an AI Knowledge Base for a Professional Services Firm

How I built an AI knowledge base for a wealth management firm — searchable docs, AI categorization, and why this is step one before scaling AI.

By Mike Hodgen

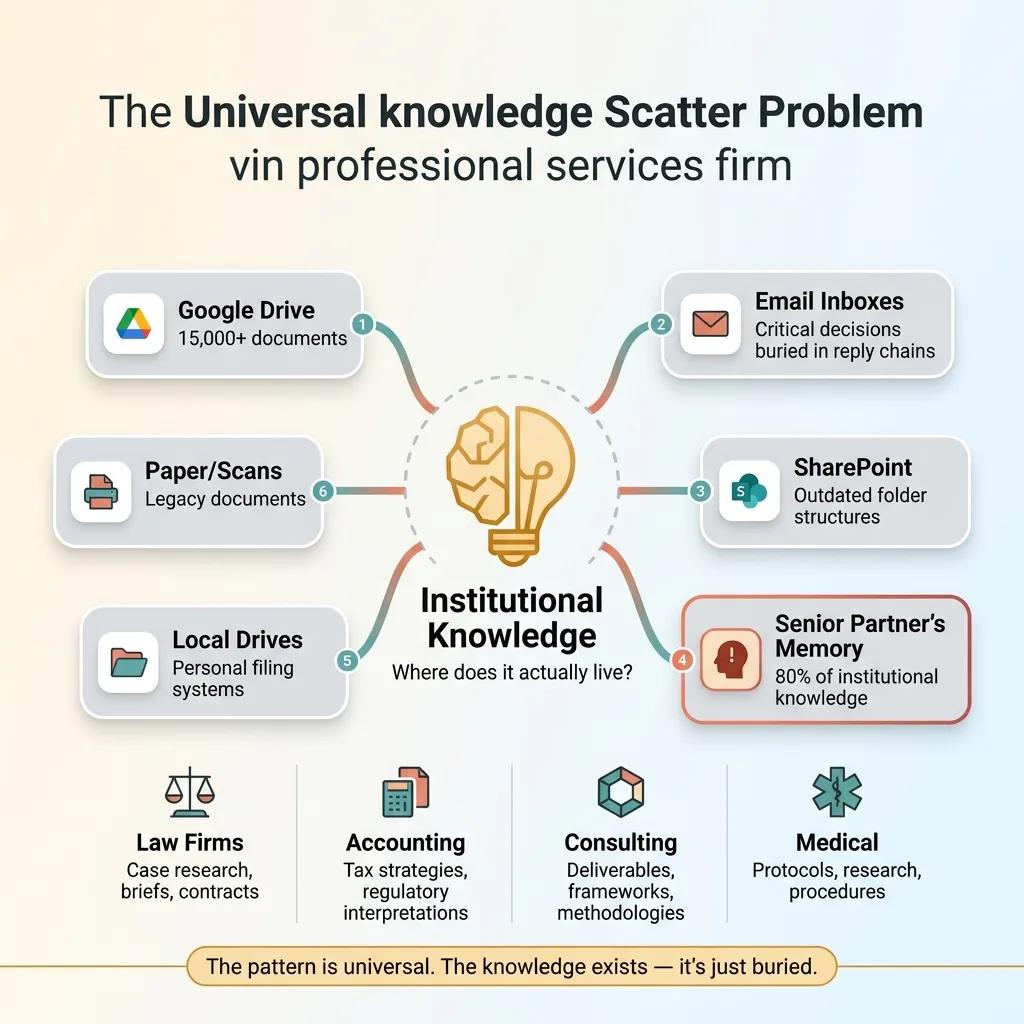

Every professional services firm reaches a point where its own expertise becomes a liability. Not because the knowledge isn't valuable — but because nobody can find it when they need it.

I saw this play out clearly when I started working with a financial advisory firm managing $500M+ in assets. They had eight years of compliance documents, investment memos, client research, internal procedures, and regulatory filings spread across Google Drive, Outlook inboxes, SharePoint folders, and — most dangerously — the founding partner's memory.

The symptoms were predictable. New advisors took three to four months to ramp up because tribal knowledge wasn't searchable. It lived in senior people's heads and in folder structures that only made sense to whoever created them. A compliance question that should take two minutes took 45, because nobody remembered which subfolder the relevant policy lived in — or whether it was the version from 2021 or 2023.

The founding partner carried roughly 80% of the firm's institutional knowledge in their head. That's not a compliment. That's a business continuity risk with a heartbeat.

Building an AI knowledge base for this business wasn't a nice-to-have. It was the single most impactful project we could start with. And here's the thing — this isn't unique to wealth management. Law firms, accounting practices, consulting firms, medical groups. Every professional services firm has this exact problem. The knowledge exists. It's just buried.

Why Knowledge Management Is Step One Before Any AI Strategy

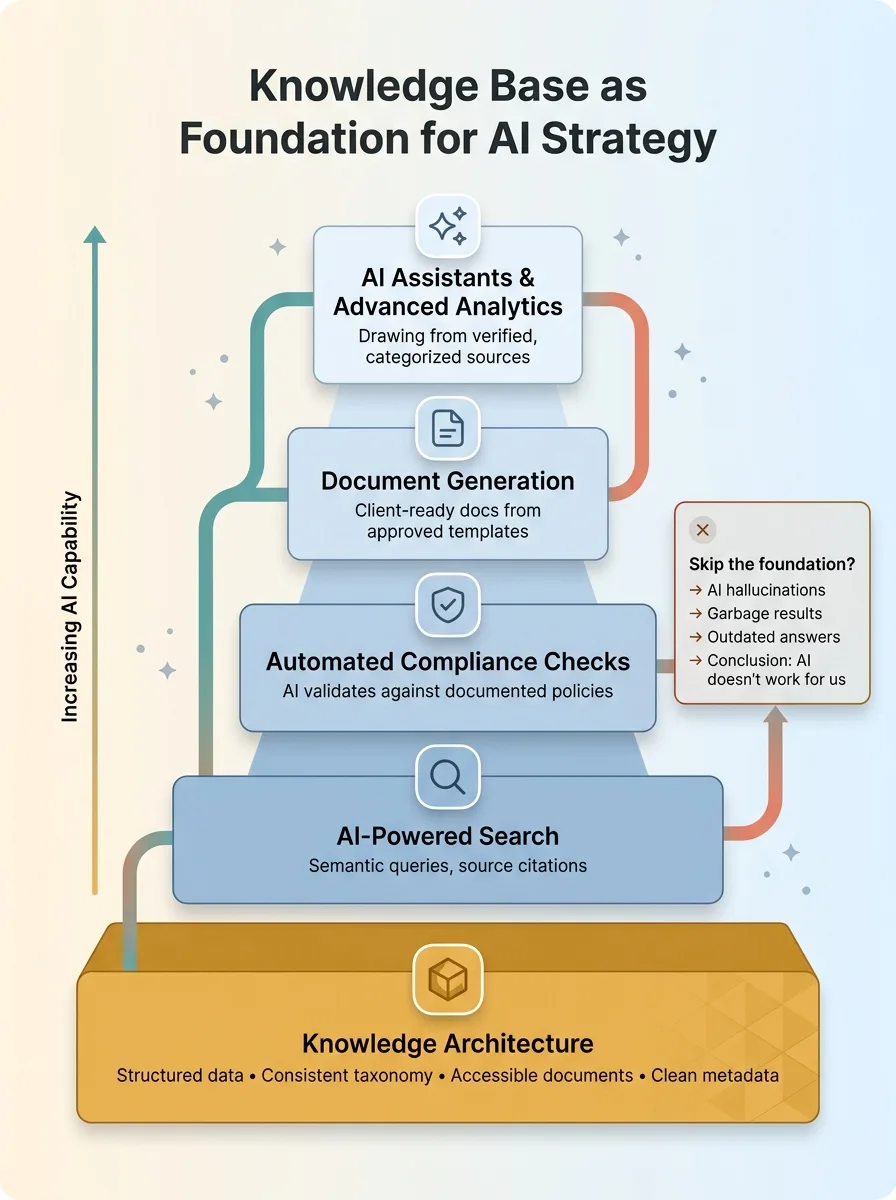

Knowledge Base as Foundation for AI Strategy

The Garbage In, Garbage Out Reality

Most firms I talk to want to start with the flashy stuff. An AI chatbot for clients. Automated report generation. Predictive analytics on their portfolio. Those are all real possibilities. But they all depend on one thing: structured, accessible data underneath.

AI is only as good as the information it can reach. If your documents are scattered across six platforms with no consistent naming convention, no tagging, no metadata, and no structure, any AI you layer on top will either hallucinate or return garbage. It's not a technology problem. It's an architecture problem.

This matches my own experience building AI systems for my DTC fashion brand. The product pipeline, the pricing engine, the SEO automation — all 29 automation modes I run in production — every single one depends on clean, structured, accessible data at the foundation. That's not glamorous work, but it's the work that makes everything else possible. I've written about the first AI systems every business should build, and knowledge architecture is always near the top of the list.

What Happens When You Skip This Step

I've seen the failure pattern multiple times. A firm buys an AI search tool, points it at a messy Google Drive with 15,000 documents, and gets useless results. The AI returns outdated policies. It misses relevant documents because they're titled "Final_v3_REAL_final_JT_edits.docx". It can't distinguish between a draft and an approved policy.

The firm concludes: "AI doesn't work for us."

The actual problem was never the AI. It was knowledge architecture. You wouldn't blame a search engine for returning bad results when your filing system is chaos. The same principle applies here. Fix the foundation first, then the AI becomes genuinely powerful.

What I Actually Built: Architecture of the Knowledge Base

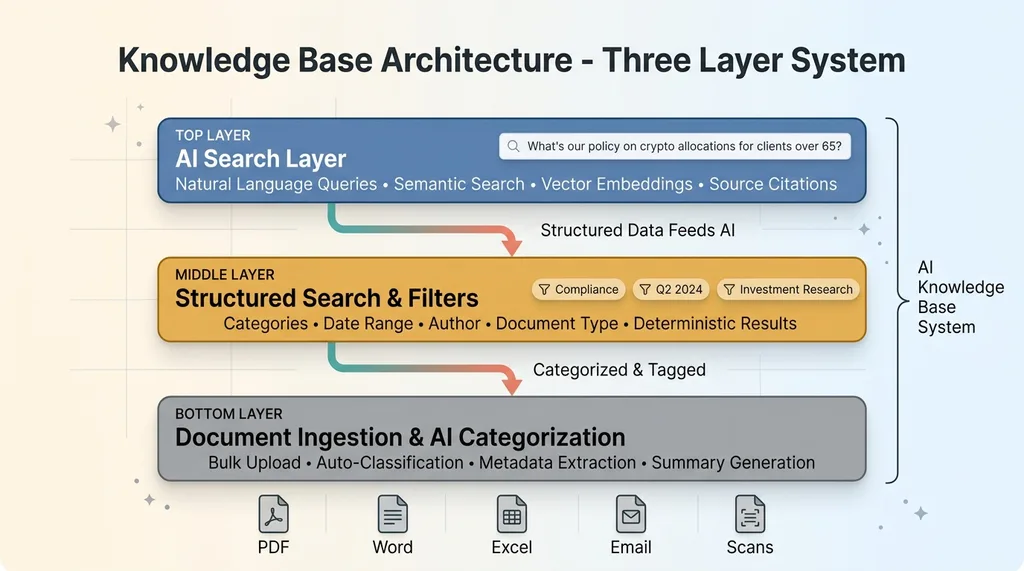

Knowledge Base Architecture - Three Layer System

Document Ingestion and AI Categorization

The first challenge was getting everything into one system. The firm had documents in PDFs, Word files, Excel spreadsheets, PowerPoint decks, scanned images, and email threads with critical decisions buried in reply chains.

We built an ingestion pipeline that could handle bulk uploads of existing documents across all these formats. But here's where it gets interesting — instead of dumping everything into a folder and calling it done, AI processes each document on intake. It reads the content, identifies the document type, generates a summary, proposes category tags, extracts key metadata like dates and referenced clients (anonymized for the knowledge base), and flags whether it appears to be a draft or final version.

This is work that would have taken a junior employee weeks of full-time effort. The AI processed the entire library in hours.
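
To make the intake step concrete, here is a minimal sketch of the kind of record it could produce. This is not the firm's pipeline: the field names, the keyword table, and the draft markers are all assumptions for illustration, and the keyword rules are a placeholder for the AI model that actually reads the content. Document-type detection and client anonymization are left out.

```python
import re
from dataclasses import dataclass, field

# Hypothetical keyword rules standing in for the AI categorization step.
CATEGORY_KEYWORDS = {
    "compliance": ("suitability", "regulatory", "policy"),
    "investment research": ("allocation", "rebalancing", "portfolio"),
    "internal procedures": ("procedure", "onboarding", "checklist"),
}
# Hypothetical filename hints that a file is a working copy, not a final version.
DRAFT_MARKERS = re.compile(r"draft|edits|wip|_v\d+", re.IGNORECASE)
ISO_DATE = re.compile(r"\b\d{4}-\d{2}-\d{2}\b")


@dataclass
class IntakeRecord:
    filename: str
    file_format: str
    summary: str
    proposed_tags: list[str] = field(default_factory=list)
    dates: list[str] = field(default_factory=list)
    looks_like_draft: bool = False


def process_on_intake(filename: str, text: str) -> IntakeRecord:
    """Build a metadata record for one document as it enters the system."""
    lowered = text.lower()
    tags = [
        category
        for category, words in CATEGORY_KEYWORDS.items()
        if any(word in lowered for word in words)
    ]
    file_format = filename.rsplit(".", 1)[-1].lower() if "." in filename else "unknown"
    # Placeholder summary: the first sentence, capped at 200 characters.
    summary = text.strip().split(".")[0][:200]
    return IntakeRecord(
        filename=filename,
        file_format=file_format,
        summary=summary,
        proposed_tags=tags,
        dates=ISO_DATE.findall(text),
        looks_like_draft=bool(DRAFT_MARKERS.search(filename)),
    )
```

The useful part is the shape of the output: every document leaves intake with tags, dates, and a draft flag attached, so the search layers have something structured to work with. The tags are proposals, not decisions.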

Search, Sort, and Filters

The structured search layer is the workhorse. Users can filter by document category (compliance, client-facing, internal procedures, investment research), by date range, by author, and by document type. Standard stuff, but essential.

This layer is fast, deterministic, and reliable. When an advisor knows they're looking for "the updated suitability policy from Q2 2024," structured search gets them there in seconds. No ambiguity, no AI interpretation needed.
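
A rough sketch of what "deterministic" means here: exact-match filters over metadata, with no model involved. The `DocMeta` fields and the `filter_documents` function are hypothetical names chosen for this example, not the system's real schema.

```python
from dataclasses import dataclass
from datetime import date
from typing import Iterable, Optional


@dataclass(frozen=True)
class DocMeta:
    title: str
    category: str
    author: str
    doc_type: str
    approved_on: date


def filter_documents(
    docs: Iterable[DocMeta],
    category: Optional[str] = None,
    author: Optional[str] = None,
    doc_type: Optional[str] = None,
    start: Optional[date] = None,
    end: Optional[date] = None,
) -> list[DocMeta]:
    """Keep documents that match every filter supplied; None means 'any'."""
    matches = []
    for doc in docs:
        if category is not None and doc.category != category:
            continue
        if author is not None and doc.author != author:
            continue
        if doc_type is not None and doc.doc_type != doc_type:
            continue
        if start is not None and doc.approved_on < start:
            continue
        if end is not None and doc.approved_on > end:
            continue
        matches.append(doc)
    # Newest first, so the most recent version of a policy surfaces at the top.
    return sorted(matches, key=lambda d: d.approved_on, reverse=True)
```

Asking for category "compliance" with a date range of April through June 2024 would return only documents approved in that window. The same inputs always give the same results, which is exactly what you want for known-item lookups.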

The AI Search Layer

The AI search layer sits on top and handles the queries that structured search can't. Natural language questions like: "What's our policy on crypto allocations for clients over 65?" or "What did we decide about the Henderson account rebalancing threshold?"

Under the hood, this uses vector embeddings for semantic search — the system understands meaning, not just keywords. Documents are chunked strategically so long compliance manuals don't get treated as a single blob. And every result includes source citations: the specific document, the specific section, and a link to the original.

That last part — source attribution — is non-negotiable. In a regulated industry, advisors can't just trust what an AI tells them. They need to verify. The system makes verification frictionless: read the AI's answer, click through to the source document, confirm it yourself. Two clicks, not 45 minutes of folder archaeology.
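
Here is a toy sketch of the chunk, rank, and cite flow. One loud caveat: the `embed` function below is a bag-of-words counter standing in for a real embedding model, so it only matches shared words and does not capture meaning the way the production system does. The chunk fields, the `kb.example` link, and the sample policy text are all invented for illustration.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    doc_title: str
    section: str
    link: str
    text: str


def chunk_by_section(doc_title, link, sections):
    """Split one document into one chunk per (section heading, body) pair."""
    return [Chunk(doc_title, heading, link, body) for heading, body in sections]


def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def search(query, chunks, top_k=3):
    """Rank chunks by similarity to the query; every hit carries its citation."""
    query_vec = embed(query)
    scored = [(cosine(query_vec, embed(c.text)), c) for c in chunks]
    scored = [(score, c) for score, c in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [
        {"score": round(score, 3), "document": c.doc_title,
         "section": c.section, "link": c.link}
        for score, c in scored[:top_k]
    ]


# Invented sample content, for illustration only.
manual = chunk_by_section(
    "Investment Policy Manual",
    "https://kb.example/docs/investment-policy-manual",
    [
        ("Crypto allocations", "Crypto allocations are capped for clients over 65."),
        ("IRA rollovers", "IRA rollovers require a signed transfer form."),
    ],
)
hits = search("What's our policy on crypto allocations for clients over 65?", manual)
```

Each hit names the document, the section, and a link back to the original, so the answer can be checked at the source. Swapping `embed` for a real embedding model changes the quality of the ranking, not the shape of the result.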

The dual approach is the key design decision. Structured filters for known queries. AI search for discovery and complex questions. Each one covers the other's blind spots.

The Categorization Problem Nobody Talks About

This was the hardest and most valuable part of the entire project, and it's the piece that most people underestimate.

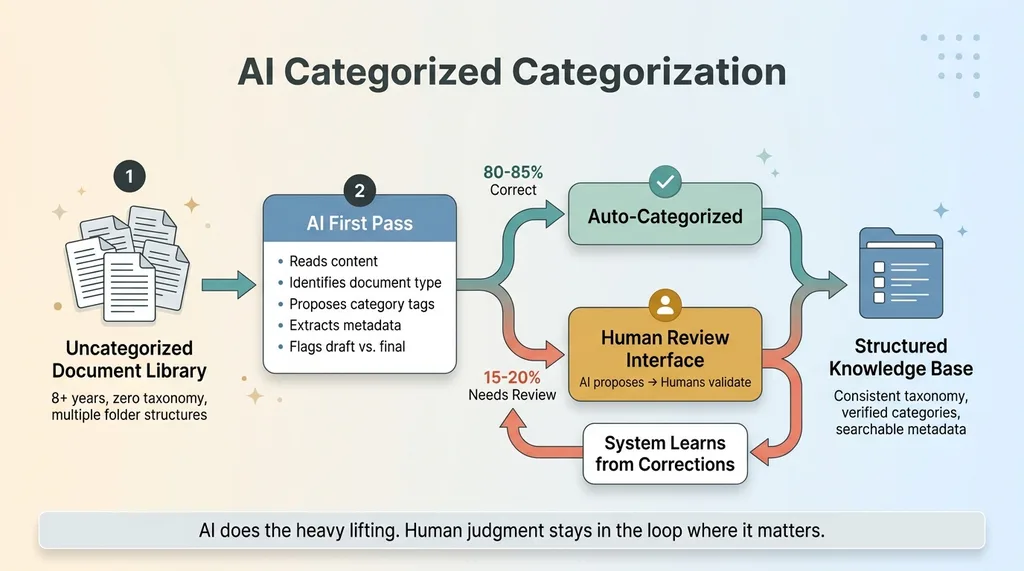

AI Categorization Process with Human-in-the-Loop

The firm had eight-plus years of documents with zero consistent taxonomy. Some folders were organized by client name. Some by year. Some by document type. Some by the person who created them. One senior advisor had a folder called "Important" with 2,300 files in it.

Manually categorizing thousands of documents would have consumed weeks of staff time that could otherwise have been billed to clients. Nobody wanted to do it. Nobody had done it for eight years. That's how they got here.

AI categorization processed the entire library and proposed a taxonomy based on what actually existed, not what someone assumed the categories should be. It identified document types the firm hadn't recognized as distinct categories. It turned out they had a whole class of "informal policy memos": emails where a partner made a decision that became de facto policy but was never formalized. The AI spotted the pattern across hundreds of documents. No human would have caught that without reading every single one.

But here's the honest part: the AI's first pass wasn't perfect. About 15-20% of categorizations needed human correction. Some documents straddled two categories. Some were miscategorized because the language was ambiguous. A client research memo that referenced compliance requirements got tagged as a compliance document when it was really investment research.

That's why you build a review interface, not a fire-and-forget system. AI proposes. Humans validate. The system learns from corrections. This is the same quality control pattern I use across every AI system I build — the AI does the heavy lifting, but human judgment stays in the loop where it matters.
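
The propose-and-validate loop can be sketched in a few lines. This is an illustration under assumptions, not the review interface itself: `Proposal`, `review`, and the other names are hypothetical, and the sketch only records corrections. How those corrections feed back into the categorizer is a separate design decision that this example does not cover.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Proposal:
    doc_id: str
    proposed: str                 # category suggested by the AI
    final: Optional[str] = None   # category confirmed by a human reviewer

    @property
    def corrected(self) -> bool:
        """True once a reviewer has chosen a different category than the AI."""
        return self.final is not None and self.final != self.proposed


def review(proposal: Proposal, reviewer_choice: str) -> Proposal:
    """Record the reviewer's decision; accepting means passing the same label."""
    proposal.final = reviewer_choice
    return proposal


def correction_rate(proposals: list[Proposal]) -> float:
    """Share of reviewed proposals where the human changed the AI's label."""
    reviewed = [p for p in proposals if p.final is not None]
    if not reviewed:
        return 0.0
    return sum(p.corrected for p in reviewed) / len(reviewed)


def correction_log(proposals: list[Proposal]) -> list[tuple[str, str, str]]:
    """(doc_id, AI label, human label) triples, kept so they can be reused later."""
    return [(p.doc_id, p.proposed, p.final) for p in proposals if p.corrected]
```

Tracking the correction rate per category is what tells you where the AI's proposals can be trusted and where a reviewer still needs to look at every document.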

What Changed After Deployment

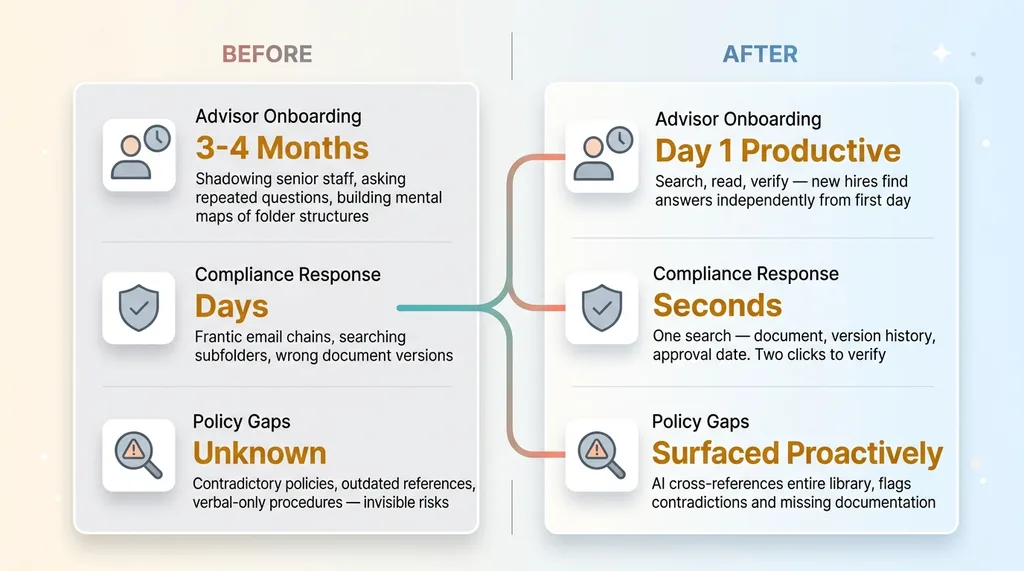

Before vs After Knowledge Base Deployment

Advisor Onboarding

Before the knowledge base, new advisors spent their first three to four months in a fog. They'd shadow senior advisors, ask the same questions repeatedly, and slowly build a mental map of where things lived and how the firm operated.

After deployment, new hires could search and find answers independently from day one. "How do we handle IRA rollovers for clients over 72?" Search, read, verify. The ramp time for a new advisor to become independently productive dropped significantly. They still shadowed senior people for relationship and judgment skills — AI can't teach that — but the mechanical knowledge transfer happened through the system instead of through interruptions.

Compliance Response Time

This was the metric the founding partner cared about most. When a regulator asks "show me your policy on X," the answer needs to come fast and it needs to be accurate. Before, that request triggered a frantic email chain: "Does anyone know where the updated suitability standards doc is?" Sometimes it took days to locate the right version.

After deployment, one search. Seconds. The document, the version history, the approval date. When your compliance response time drops from days to seconds, that's not just efficiency — that's the difference between a routine audit and a regulatory finding.

The Unexpected Win

Here's what nobody planned for: the knowledge base revealed what was missing.

When AI categorized and cross-referenced the entire document library, it surfaced inconsistencies the firm didn't know they had. Two policies that directly contradicted each other — one from 2019, one from 2022, both technically active. Procedures that referenced a software platform the firm stopped using two years ago. Entire topic areas where no written policy existed at all, meaning the firm was operating on verbal tradition.

The knowledge base didn't just organize what they had. It showed them the gaps. For a regulated firm, those gaps are liability. Finding them proactively — before an auditor does — is worth the entire investment by itself.
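
Once documents carry topic, date, and status metadata, the three kinds of gaps described above reduce to simple checks. The sketch below assumes a hypothetical `Policy` record and hypothetical function names. It flags candidates for human review: two active policies on the same topic may or may not contradict each other, and only a person reading both can tell.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    title: str
    topic: str
    year: int
    active: bool
    text: str


def overlapping_active(policies):
    """Topics with more than one active policy: candidates for contradiction."""
    by_topic = defaultdict(list)
    for policy in policies:
        if policy.active:
            by_topic[policy.topic].append(policy)
    return {
        topic: sorted(group, key=lambda p: p.year)
        for topic, group in by_topic.items()
        if len(group) > 1
    }


def stale_references(policies, retired_tools):
    """(policy title, tool) pairs where a policy still mentions a retired tool."""
    hits = []
    for policy in policies:
        lowered = policy.text.lower()
        for tool in retired_tools:
            if tool.lower() in lowered:
                hits.append((policy.title, tool))
    return hits


def uncovered_topics(policies, expected_topics):
    """Expected topics that have no active written policy at all."""
    covered = {p.topic for p in policies if p.active}
    return sorted(set(expected_topics) - covered)
```

The list of expected topics has to come from the firm itself, for example from a compliance checklist. The code can only report that nothing is filed under a topic, not that the topic matters.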

Every Professional Services Firm Has This Problem

The specifics vary, but the pattern is universal.

The Knowledge Scatter Problem Across Professional Services

Law firms have decades of case research, briefs, legal memos, and contract templates scattered across partner drives. Accounting firms have tax strategies, client correspondence, and regulatory interpretations buried in email threads. Consulting firms have deliverables, frameworks, and institutional methodologies that only exist in senior consultants' heads. Medical practices have clinical protocols, research summaries, and administrative procedures spread across half a dozen systems.

The cost isn't just inefficiency. It's risk. When institutional knowledge lives in one person's head and that person retires, takes another job, or gets hit by a bus, the firm loses years of accumulated intelligence overnight. This is a business continuity issue, not a productivity issue.

And here's the strategic point: a structured, searchable knowledge base is the foundation for everything else you want to build with AI. Once your knowledge is organized, you can build AI assistants that actually work because they're drawing from verified, categorized sources. You can automate compliance checks against your own documented policies. You can generate client-ready documents from approved templates. You can build what I'd call a multi-skill AI platform — where the knowledge base is one component of a larger system that keeps compounding in value.

Without that foundation, every AI project starts with a data cleanup that should have been done first.

Where to Start If Your Firm's Knowledge Is Scattered Everywhere

Don't start by buying software. Start by auditing what you have.

Where do documents live? How many sources? What formats? What does the current search experience actually look like for your team? Ask a junior employee to find a specific policy document and time how long it takes. That number is your baseline.
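
For the "what formats, how many" part of the audit, a short script over an exported or synced copy of each source is usually enough to get a first picture. This is a minimal sketch; `inventory_by_format` is a name made up for the example, and it assumes the documents are reachable as files on a local or mounted path.

```python
from collections import Counter
from pathlib import Path


def inventory_by_format(root):
    """Count files under `root` (recursively) by lowercase file extension."""
    counts = Counter()
    for path in Path(root).rglob("*"):
        if path.is_file():
            # Files with no extension are grouped under "(none)".
            counts[path.suffix.lower() or "(none)"] += 1
    return counts


def print_inventory(root):
    """Print formats from most to least common, with a total at the end."""
    counts = inventory_by_format(root)
    for extension, count in counts.most_common():
        print(f"{extension:10} {count}")
    print(f"{'total':10} {sum(counts.values())}")
```

Run it once per source and compare the totals. It won't tell you what is in the documents, but it shows how big the cleanup is and which formats the ingestion step has to handle.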

Then decide on taxonomy — AI can help here, but someone needs to validate it against how your team actually thinks about their work. Categories that make sense to the AI but don't match your team's mental model will get ignored.

Build the structured layer first: categories, tags, metadata, consistent naming. This works even if you never add AI search. Then add the AI search layer on top. The structured layer is reliable. The AI layer makes it powerful.
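
Consistent naming is easiest to enforce when the name is generated from metadata instead of typed by hand. The convention below (category, title, effective date, status) is one hypothetical example, not the scheme used in this project; the point is that the filename becomes a function of fields you already track.

```python
import re
from datetime import date

ALLOWED_STATUSES = {"draft", "final"}


def slug(text: str) -> str:
    """Lowercase the text and collapse anything non-alphanumeric into hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def canonical_name(category: str, title: str, effective: date,
                   status: str, extension: str) -> str:
    """Build a filename of the form category_title_YYYY-MM-DD_status.ext."""
    if status not in ALLOWED_STATUSES:
        raise ValueError(f"status must be one of {sorted(ALLOWED_STATUSES)}")
    ext = extension.lower().lstrip(".")
    return f"{slug(category)}_{slug(title)}_{effective.isoformat()}_{status}.{ext}"
```

With a rule like this, a document can only be named "final" if its status field says so, which removes the ambiguity that ad hoc names create.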

This is exactly the kind of project I take on as a Chief AI Officer — it's not just building the system, it's understanding a firm's workflows deeply enough to make the knowledge base match how people actually work. I've written about what a Chief AI Officer actually does if you want to understand the role.

If your firm has years of expertise trapped in scattered folders and senior people's heads, that's a solvable problem. And it's the right place to start before any other AI investment.

Want to Explore What an AI Knowledge Base Could Do for Your Firm?

I do a free 30-minute strategy call. No pitch deck, no sales team — just a real conversation about where your knowledge lives, what's broken, and whether building a system like this makes sense for your situation.

Book a Discovery Call or walk through what this would look like for your firm.
