I Built an AI Real Estate Analysis Tool for Syndications

How I built an AI real estate analysis tool that turns 40-page offering memos into 2-page decision documents with live comp data and structured financials.

By Mike Hodgen

A real estate syndication client came to me with a problem I hear constantly, just dressed in different clothes depending on the industry: too much information, not enough time, and decisions getting bottlenecked by the sheer volume of paper.

This firm evaluates adaptive reuse deals — converting warehouses, old office buildings, and industrial properties into residential or mixed-use developments. Interesting niche. Also a nightmare for analysis, which I'll get to. They were seeing 15 to 20 deals per month, each one arriving as a 40 to 60 page offering memorandum. Dense PDFs packed with pro formas, rent rolls, market analysis, sponsor track records, waterfall structures, and legal entity diagrams.

The partners were spending 3 to 4 hours per deal just to decide whether it deserved a closer look. Not to do due diligence, just to figure out whether due diligence was warranted. At 15 to 20 deals a month, that's roughly 45 to 80 hours burned before any real analysis begins.

The ask was clear: build an AI real estate analysis tool that turns those 40-page memos into 2-page executive decision documents. Structured financials, market comps pulled from live data, and a preliminary risk score. Something that makes every deal comparable at a glance.

Here's the wrinkle that made this project genuinely interesting. Adaptive reuse means the property's current state is irrelevant to its investment thesis. A deal memo describes a 1960s industrial warehouse, but the investment is really about the 120-unit apartment complex it will become after $14M in renovations and a zoning change. Standard commercial real estate analysis tools evaluate what a property is. This system needed to evaluate what a property will be — which means parsing renovation budgets, construction timelines, zoning risk, and projected rents for a building that doesn't exist yet.

No tool on the market does this well. So I built one.

What the AI Real Estate Analysis Tool Actually Does

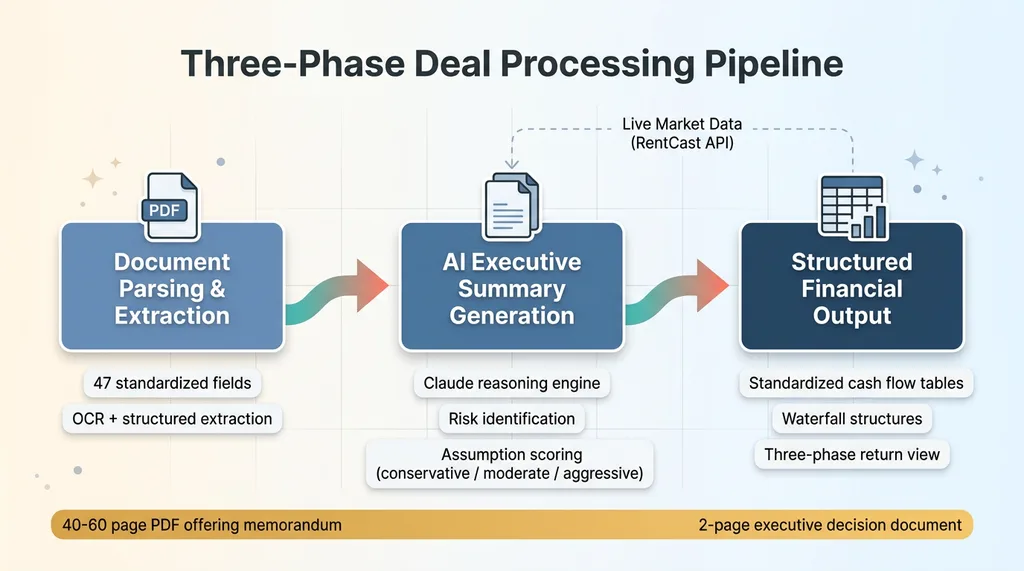

The system has three core functions that run in sequence every time a new deal memo enters the pipeline.

Three-Phase Deal Processing Pipeline

Document Parsing and Extraction

When a PDF lands in the system, it goes through OCR where needed and then into a structured extraction pipeline. The AI pulls specific financial metrics into a standardized schema: projected IRR, equity multiple, hold period, entry and exit cap rates, debt structure (LTV, interest rate, term), sponsor co-investment amount, and fee breakdown (acquisition fee, asset management fee, disposition fee).

This isn't summarization. It's extraction into a rigid data structure so that Deal #7 and Deal #34 are formatted identically and can be compared side by side. The schema I designed has 47 fields. Every deal gets mapped to the same 47 fields, or the system flags what's missing.

For adaptive reuse specifically, the parser also extracts renovation budget (total and per-unit), construction timeline, zoning status, and entitlement risk factors. These fields don't exist in standard CRE analysis templates, which is exactly why off-the-shelf tools fall short.
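To make the "same fields or flag what's missing" idea concrete, here is a minimal Python sketch. It is not the production schema: `DealRecord` is a hypothetical subset of the 47 fields, and every field name is an assumption chosen for illustration. The $14M budget and 120 units come from the example deal described earlier.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class DealRecord:
    """Hypothetical subset of a standardized deal schema (names are illustrative)."""
    projected_irr: Optional[float] = None        # 0.142 means 14.2%
    equity_multiple: Optional[float] = None
    hold_period_years: Optional[float] = None
    entry_cap_rate: Optional[float] = None
    exit_cap_rate: Optional[float] = None
    ltv: Optional[float] = None
    acquisition_fee_pct: Optional[float] = None
    # Adaptive reuse fields that standard CRE templates lack
    renovation_budget_total: Optional[float] = None
    unit_count: Optional[int] = None
    construction_months: Optional[int] = None
    zoning_status: Optional[str] = None


def missing_fields(deal: DealRecord) -> list[str]:
    """Names of schema fields the extractor could not fill."""
    return [f.name for f in fields(deal) if getattr(deal, f.name) is None]


def renovation_cost_per_unit(deal: DealRecord) -> Optional[float]:
    """Per-unit renovation cost, or None when either input is missing."""
    if deal.renovation_budget_total is None or not deal.unit_count:
        return None
    return deal.renovation_budget_total / deal.unit_count


deal = DealRecord(projected_irr=0.142,
                  renovation_budget_total=14_000_000,
                  unit_count=120)
print(missing_fields(deal))            # fields to flag for follow-up
print(renovation_cost_per_unit(deal))  # derived per-unit figure
```

Defaulting every field to `None` is the design choice that matters here: a missing value stays visibly missing instead of being silently filled with a zero.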

AI Executive Summary Generation

Once extraction is complete, Claude generates a 2-page decision document. This is where using multiple AI models in production matters — Claude handles the structured reasoning and document generation because it's the strongest model I've tested for maintaining factual consistency across long financial documents.

The summary includes: deal structure in plain English, key financial metrics in a standardized table, risk factors the AI identifies (sponsor concentration, market exposure, construction timeline risk, entitlement dependency), and a plain-language assessment of whether the deal's assumptions are conservative, moderate, or aggressive relative to market data.

The risk identification is where real value shows up. The AI flags things like a sponsor who's running seven simultaneous projects with a combined $200M in equity — that's concentration risk that's easy to miss when you're reading one deal memo in isolation.

Structured Financial Output

The system produces standardized tables for cash flow projections, waterfall structures, and fee analysis. Every deal gets the same format. Partners stopped arguing about which page of a memo to reference because the output is always structured identically.

For adaptive reuse deals, the financial output separates the construction phase (cash outflow, no distributions) from the stabilization phase (lease-up, early distributions) from the hold phase (steady-state cash flow). This three-phase view is critical because lumping them together — which most sponsor memos do — obscures the real return profile.
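A rough sketch of that three-phase split, assuming monthly cash flows and known phase lengths. The function names and the numbers are hypothetical and only illustrate the bucketing logic.

```python
from collections import defaultdict


def phase_for_month(month: int, construction_months: int, lease_up_months: int) -> str:
    """Map a 1-based month index to its phase."""
    if month <= construction_months:
        return "construction"
    if month <= construction_months + lease_up_months:
        return "stabilization"
    return "hold"


def summarize_by_phase(cash_flows, construction_months, lease_up_months):
    """Total the monthly cash flows within each phase."""
    totals = defaultdict(float)
    for month, amount in enumerate(cash_flows, start=1):
        phase = phase_for_month(month, construction_months, lease_up_months)
        totals[phase] += amount
    return dict(totals)


# Illustrative numbers only: 3 months of outflow, 2 of lease-up, 4 of steady state.
flows = [-100.0] * 3 + [10.0] * 2 + [25.0] * 4
summary = summarize_by_phase(flows, construction_months=3, lease_up_months=2)
print(summary)
```

Reporting the three totals separately keeps the construction-period outflow from being averaged away by later distributions.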

Live Market Comps via RentCast API

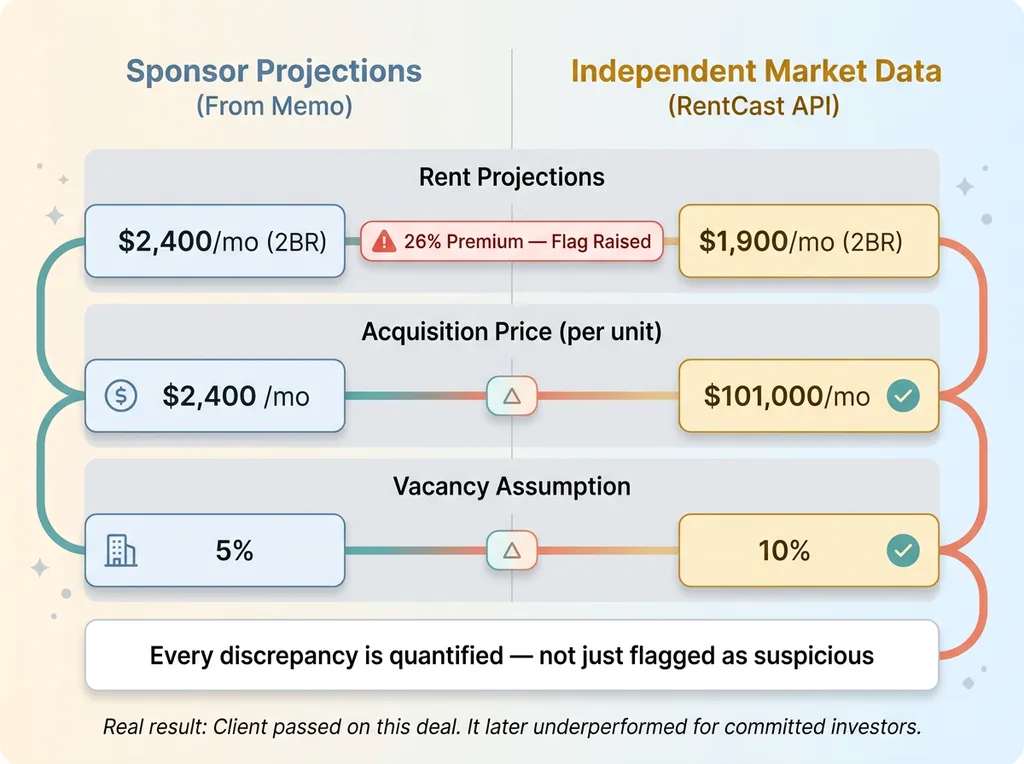

The AI deal analysis gets genuinely useful when it's grounded in real market data rather than relying solely on what the sponsor tells you.

Adaptive Reuse Analysis Gap — Current vs. Future State

I integrated the RentCast API so that when a deal enters the system, it automatically pulls comparable rental data for the target submarket. Current rents per square foot, rent growth trends over the trailing 12 months, vacancy rates, and comparable property values. This runs automatically — no one has to go look anything up.

Why this matters: it stress-tests the sponsor's assumptions against reality. If a sponsor projects $2.50/sqft rents but RentCast shows the submarket is averaging $1.85, that gap gets flagged immediately in the executive summary. The AI doesn't just note the discrepancy — it recalculates the projected returns using market-rate assumptions so the partners can see both scenarios side by side.
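The rent check reduces to simple arithmetic. The sketch below uses the $2.50 versus $1.85 figures from the example; the 10% "aggressive" cutoff and the function names are assumptions for illustration, not the thresholds the production system uses.

```python
def rent_gap(sponsor_rent_psf: float, market_rent_psf: float) -> float:
    """Fraction by which the sponsor's projected rent exceeds the market rent."""
    return (sponsor_rent_psf - market_rent_psf) / market_rent_psf


def classify_assumption(gap: float, aggressive_above: float = 0.10) -> str:
    """Label a rent assumption; the cutoff is a hypothetical default."""
    if gap > aggressive_above:
        return "aggressive"
    if gap > 0:
        return "moderate"
    return "conservative"


def restate_at_market(sponsor_revenue: float,
                      sponsor_rent_psf: float,
                      market_rent_psf: float) -> float:
    """Scale projected rent revenue down (or up) to the market rent level."""
    return sponsor_revenue * (market_rent_psf / sponsor_rent_psf)


gap = rent_gap(2.50, 1.85)
print(f"{gap:.1%}", classify_assumption(gap))
print(restate_at_market(1_000_000, 2.50, 1.85))
```

In this example the sponsor's rent sits about 35% above the submarket average, so every $1,000,000 of projected rent revenue restates to roughly $740,000 at market rents.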

For adaptive reuse, this is where the system earns its keep. It pulls comps for the intended use (residential apartments), not the current use (warehouse or office). A warehouse comp tells you nothing about what 120 apartments will rent for. The system knows to query based on the target property type, not the source property type. That distinction sounds obvious, but every off-the-shelf AI deal analysis tool I evaluated before building this one got it wrong.
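In code, the distinction is a one-line decision about which field drives the comp query. This sketch is deliberately generic: `Property`, `comp_query`, and the parameter names are hypothetical placeholders, not RentCast's actual request format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Property:
    current_use: str  # what the building is today, e.g. "warehouse"
    target_use: str   # what the deal will turn it into, e.g. "multifamily"
    zip_code: str


def comp_query(prop: Property) -> dict:
    """Build comp search parameters keyed on the intended use, not the current one.

    The keys are placeholders; map them to whatever the data provider expects.
    """
    return {"zip_code": prop.zip_code, "property_type": prop.target_use}


warehouse_conversion = Property(current_use="warehouse",
                                target_use="multifamily",
                                zip_code="00000")  # placeholder ZIP
print(comp_query(warehouse_conversion))
```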

A concrete example from early deployment: one deal memo projected 94% occupancy for a converted office building in a secondary market. RentCast data showed that submarket was running 87% average occupancy for comparable multifamily. The AI flagged this as an aggressive assumption, held everything else constant, and recalculated. The sponsor's 14.2% projected IRR dropped to 10.8% under market-reality assumptions.
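The mechanics of "hold everything else constant and recalculate" can be sketched with a toy unlevered model. Everything here is an assumption for illustration: the cash flow model is far simpler than a real pro forma, the dollar inputs are invented, and the output will not reproduce the 14.2% and 10.8% figures from the actual deal. Only the 94% and 87% occupancy values come from the example.

```python
def npv(rate: float, cash_flows: list[float]) -> float:
    """Net present value of annual cash flows, with index 0 at time zero."""
    return sum(cf / (1 + rate) ** t for t, cf in enumerate(cash_flows))


def irr(cash_flows: list[float], lo: float = -0.99, hi: float = 1.0,
        tol: float = 1e-7) -> float:
    """IRR by bisection; assumes one outflow up front followed by inflows."""
    for _ in range(200):
        mid = (lo + hi) / 2
        if npv(mid, cash_flows) > 0:
            lo = mid  # rate too low, NPV still positive
        else:
            hi = mid
        if hi - lo < tol:
            break
    return (lo + hi) / 2


def deal_cash_flows(total_cost, gross_potential_rent, occupancy,
                    operating_expenses, exit_cap_rate, hold_years):
    """Toy unlevered model: flat NOI each year, sale at NOI / exit cap in the last year."""
    noi = gross_potential_rent * occupancy - operating_expenses
    flows = [-total_cost] + [noi] * hold_years
    flows[-1] += noi / exit_cap_rate
    return flows


# Hypothetical inputs; only the occupancy assumption changes between cases.
inputs = dict(total_cost=20_000_000, gross_potential_rent=2_400_000,
              operating_expenses=900_000, exit_cap_rate=0.06, hold_years=5)
sponsor_case = deal_cash_flows(occupancy=0.94, **inputs)
market_case = deal_cash_flows(occupancy=0.87, **inputs)
print(f"sponsor IRR: {irr(sponsor_case):.2%}")
print(f"market IRR:  {irr(market_case):.2%}")
```

Because occupancy feeds both annual NOI and the exit value, a seven-point occupancy change moves the return more than intuition suggests, which is why the side-by-side view is useful.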

That's the difference between a "yes" and a "pass" for this firm. And it took the system about 90 seconds to surface it — versus a partner spending an hour pulling comps manually and doing the math in a spreadsheet.

Investor Management and Deal Tracking

An AI property analysis system that only processes documents is a parlor trick. To be a real business tool, it needs to connect analysis to action.

Investor CRUD System

The platform includes full investor management. Each investor profile tracks contact information, accreditation status, investment preferences (minimum and maximum deal size, preferred asset classes, target geographies, acceptable hold periods), historical investments, and current capital commitments.

Not glamorous. Absolutely essential. When a new deal gets analyzed and scored, the system matches it against investor preferences and flags which investors in the database are likely fits. A $5M adaptive reuse deal in Phoenix with a 5-year hold automatically surfaces the eight investors who've indicated interest in that profile — instead of someone manually scanning a spreadsheet.
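The matching step can be sketched as a plain filter over investor preferences. The class and field names below are hypothetical, and the two investors are invented; only the $5M Phoenix adaptive reuse deal with a 5-year hold comes from the example above.

```python
from dataclasses import dataclass


@dataclass
class InvestorPrefs:
    name: str
    min_deal_size: float
    max_deal_size: float
    asset_classes: set[str]
    geographies: set[str]
    max_hold_years: float


@dataclass
class Deal:
    size: float
    asset_class: str
    geography: str
    hold_years: float


def matches(investor: InvestorPrefs, deal: Deal) -> bool:
    """True when the deal satisfies every stated preference."""
    return (investor.min_deal_size <= deal.size <= investor.max_deal_size
            and deal.asset_class in investor.asset_classes
            and deal.geography in investor.geographies
            and deal.hold_years <= investor.max_hold_years)


def matched_investors(investors: list[InvestorPrefs], deal: Deal) -> list[str]:
    """Names of investors whose preferences fit the deal."""
    return [inv.name for inv in investors if matches(inv, deal)]


investors = [
    InvestorPrefs("Investor A", 1_000_000, 10_000_000,
                  {"adaptive_reuse", "multifamily"}, {"Phoenix", "Tucson"}, 7),
    InvestorPrefs("Investor B", 1_000_000, 10_000_000,
                  {"adaptive_reuse"}, {"Denver"}, 7),
]
deal = Deal(size=5_000_000, asset_class="adaptive_reuse",
            geography="Phoenix", hold_years=5)
print(matched_investors(investors, deal))
```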

The whole system runs on a Supabase backend with row-level security, so investor data is properly segmented and protected. In a regulated industry dealing with accredited investors, this isn't optional.

Deal Pipeline Dashboard

Every deal that enters the system gets tracked through stages: received, AI analysis complete, human review, due diligence, and final outcome (passed or invested). Over time, this pipeline data becomes its own asset.
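One way to model those stages is a small state machine. The stage names follow the list above; the set of allowed transitions is an assumption, since the article does not spell out which moves are legal.

```python
from enum import Enum


class Stage(Enum):
    RECEIVED = "received"
    AI_ANALYSIS_COMPLETE = "ai_analysis_complete"
    HUMAN_REVIEW = "human_review"
    DUE_DILIGENCE = "due_diligence"
    PASSED = "passed"
    INVESTED = "invested"


# Assumed transitions: a deal can be passed on at review or during due diligence.
ALLOWED = {
    Stage.RECEIVED: {Stage.AI_ANALYSIS_COMPLETE},
    Stage.AI_ANALYSIS_COMPLETE: {Stage.HUMAN_REVIEW},
    Stage.HUMAN_REVIEW: {Stage.DUE_DILIGENCE, Stage.PASSED},
    Stage.DUE_DILIGENCE: {Stage.INVESTED, Stage.PASSED},
    Stage.PASSED: set(),
    Stage.INVESTED: set(),
}


def advance(current: Stage, nxt: Stage) -> Stage:
    """Return the next stage, or raise if the move skips a step."""
    if nxt not in ALLOWED[current]:
        raise ValueError(f"cannot move from {current.value} to {nxt.value}")
    return nxt


stage = Stage.RECEIVED
stage = advance(stage, Stage.AI_ANALYSIS_COMPLETE)
stage = advance(stage, Stage.HUMAN_REVIEW)
print(stage.value)
```

Rejecting skipped steps is what keeps the pipeline history clean enough to mine for patterns later.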

After 50+ deals analyzed, the firm started seeing patterns. They noticed they were consistently passing on deals with sponsor acquisition fees above 2.5%, and that their best-performing investments correlated with sponsor co-invest above 10% of total equity. Those patterns were always there in the data — they just never had the data structured consistently enough to see them.

The Validation Layer: When AI Gets Numbers Wrong

Here's where I have to be honest, because the stakes demand it.

Financial data extraction from PDFs is not a solved problem. Tables render inconsistently. OCR misreads characters. A cap rate of 5.2% becomes 52% if a decimal gets dropped. A renovation budget of $12M becomes $1.2M when a table header gets misaligned.

That second example actually happened during testing. The system extracted a renovation budget of $1.2M from a deal where the actual figure was $12M — a table formatting issue in the source PDF. If that number had made it into the executive summary unchecked, it would have made the deal look absurdly attractive. Per-unit renovation costs would have shown as roughly $10,000 for a full gut renovation of an industrial building. Obvious nonsense to a human, but the AI presented it with full confidence.

This is why I built a validation layer that I consider non-negotiable for any AI system touching financial data. It's the same principle I wrote about in "AI that rejects its own bad work": the system has to know when it doesn't know.

The validation layer runs three types of checks. First, range validation on every extracted metric: cap rates must fall between 2% and 15%, IRR projections between 5% and 40%, equity multiples between 1.0x and 5.0x, per-unit renovation costs between $15,000 and $150,000 depending on property type. Second, cross-validation between related numbers: does the stated NOI divided by the purchase price actually equal the stated cap rate? Does the equity multiple match the cash flow projections over the stated hold period? Third, confidence scoring on each extraction — how certain is the model that it read this number correctly?
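The first two checks are straightforward to sketch. The ranges below are the ones listed above; the metric names, the 25-basis-point tolerance, and the 120-unit count used to turn the misread $1.2M budget into a per-unit figure are assumptions for illustration.

```python
# Ranges from the text, expressed as fractions and dollars.
RANGES = {
    "cap_rate": (0.02, 0.15),
    "projected_irr": (0.05, 0.40),
    "equity_multiple": (1.0, 5.0),
    "renovation_cost_per_unit": (15_000, 150_000),
}


def range_failures(metrics: dict) -> list[str]:
    """Names of extracted metrics that fall outside their plausible range."""
    failures = []
    for name, (low, high) in RANGES.items():
        value = metrics.get(name)
        if value is not None and not (low <= value <= high):
            failures.append(name)
    return failures


def cap_rate_consistent(noi: float, purchase_price: float,
                        stated_cap_rate: float, tolerance: float = 0.0025) -> bool:
    """Cross-check: NOI / price should land close to the stated cap rate."""
    return abs(noi / purchase_price - stated_cap_rate) <= tolerance


# The two extraction errors described above: a dropped decimal and a misread budget.
metrics = {
    "cap_rate": 0.52,                              # 5.2% read as 52%
    "projected_irr": 0.142,
    "equity_multiple": 1.9,
    "renovation_cost_per_unit": 1_200_000 / 120,   # $1.2M misread, assumed 120 units
}
print(range_failures(metrics))
print(cap_rate_consistent(noi=1_040_000, purchase_price=20_000_000,
                          stated_cap_rate=0.052))
```

Both extraction errors trip the range check, which is the point: neither requires the model to doubt itself, only a rule that knows what plausible looks like.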

When confidence drops below a set threshold or a validation check fails, those fields get highlighted in yellow for human review. The system does not present uncertain numbers as facts. It says "I think this is right but you should verify." In financial analysis, a hallucinated number isn't just embarrassing; it could drive a seven-figure investment decision in the wrong direction.
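A minimal sketch of that flagging rule, assuming each extracted field carries a confidence score and a pass/fail result from the checks. The 0.85 threshold and all names are hypothetical.

```python
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.85  # hypothetical cutoff


@dataclass
class ExtractedField:
    name: str
    value: float
    confidence: float          # model's own certainty, 0.0 to 1.0
    passed_checks: bool = True  # result of range and cross-validation


def needs_review(item: ExtractedField,
                 threshold: float = CONFIDENCE_THRESHOLD) -> bool:
    """Flag a field when confidence is low OR any validation check failed."""
    return item.confidence < threshold or not item.passed_checks


def review_queue(items: list[ExtractedField]) -> list[str]:
    """Names of fields to highlight for a human."""
    return [item.name for item in items if needs_review(item)]


extracted = [
    ExtractedField("projected_irr", 0.142, confidence=0.96),
    # High confidence, but the per-unit cost failed the range check.
    ExtractedField("renovation_budget_total", 1_200_000, confidence=0.97,
                   passed_checks=False),
    ExtractedField("exit_cap_rate", 0.055, confidence=0.61),
]
print(review_queue(extracted))
```

The `or` matters: the misread renovation budget came back with high confidence, so a confidence score alone would have let it through.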

The system is designed to be wrong safely rather than confidently wrong. That's a design choice I make in every AI system I build, but it's especially critical in real estate syndication analysis where capital is at stake.

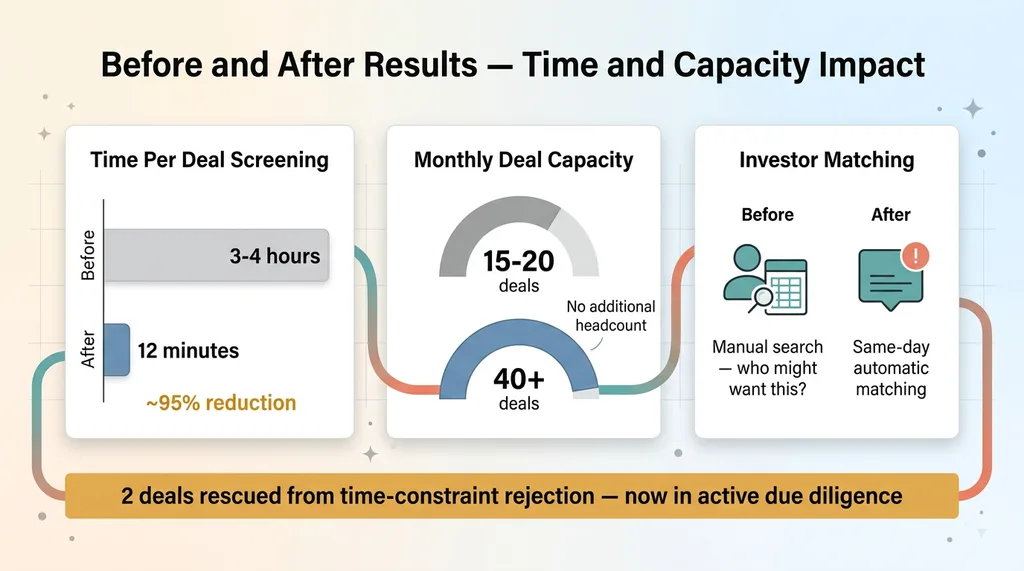

Results: 3-4 Hours Down to 12 Minutes

The numbers tell the story.

Before and After Results — Time and Capacity Impact

Time per initial deal screening dropped from 3-4 hours to roughly 12 minutes. Most of that is AI processing time plus a quick human review of any yellow-flagged items. Monthly capacity went from 15-20 deals evaluated to 40+ — without adding headcount. Two deals that would have been passed over due to time constraints made it through the expanded pipeline and are now in active due diligence.

The 2-page decision documents became the standard format for partner meetings. Everyone reads the same structured output instead of each partner skimming different sections of a 40-page memo and arriving with different takeaways.

Investor matching turned notification from a manual "who might want this" exercise into an automatic step. When a deal clears initial screening, matched investors get notified the same day.

One distinction worth making explicit: the AI doesn't make investment decisions. It compresses information and grounds it in market data so humans can make better decisions faster. The partners still apply judgment, relationships, and gut instinct built over decades. They just spend that judgment on 40 deals a month instead of 15, and they start from a position of structured knowledge rather than raw information overload.

Building Domain-Specific AI Systems That Actually Ship

Real estate syndication is a specific domain, but the pattern is universal. Find a process where smart people spend hours reading documents to make decisions. Compress the information layer with AI. Ground it in real-time data via API integrations. Wrap validation guardrails around everything so the system fails safely.

I've built this same pattern across my DTC fashion brand (product pipeline that goes from concept to live in 20 minutes, 564 products dynamically priced by AI), content operations (313 blog articles managed with AI-assisted SEO), and now financial analysis. The domain changes. The architecture doesn't.

The key insight: off-the-shelf AI tools don't work for specialized domains. They don't understand your data structures, your decision criteria, or your risk tolerances. You need purpose-built systems from someone who builds production software — not someone who demos ChatGPT in a boardroom and calls it strategy. That's the core of why I don't just advise on AI — I build it.

If this pattern resonates — if you have a domain where people spend hours reading documents to extract decisions — that's exactly where a purpose-built AI real estate analysis tool (or its equivalent in your industry) delivers real, measurable ROI.

Want to Explore What AI Could Do for Your Business?

I do a free 30-minute strategy call. No pitch deck, no sales team sitting in. Just a real conversation about your operations, where the bottlenecks are, and whether a purpose-built AI system makes sense for your situation.

Or if you want to apply to work together, start there. Either way, I'll tell you honestly whether I can help.
