AI Consultant vs AI Agency: Why I Build What I Advise

AI consultant vs AI agency — and why the best option is someone who does both. Real examples from 29 production AI systems.

By Mike Hodgen

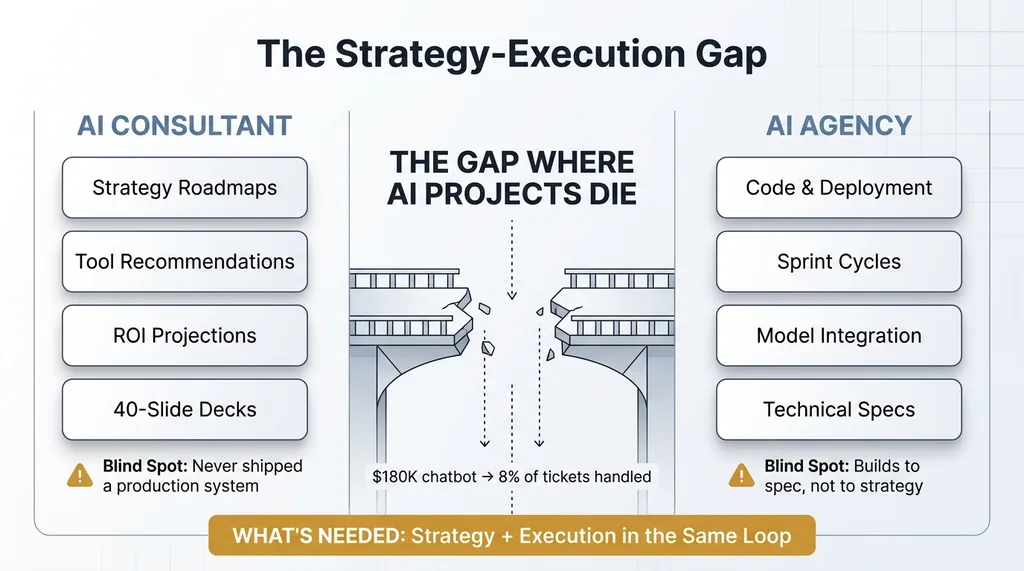

The AI consultant vs AI agency debate frames a choice that shouldn't exist. Most companies shopping for AI help are choosing between someone who can think about AI and someone who can build AI. That's a false binary, and it's costing businesses real money and months of wasted effort.

Here's what I mean.

The AI Market Has Split Into Two Camps (Both Are Missing Something)

On one side, you've got the consultants and advisors. They deliver roadmaps, framework diagrams, and tool recommendations. They speak fluent ROI. They'll give you a 40-slide deck showing where AI fits in your business. Good ones are genuinely smart people who understand business strategy.

The Strategy-Execution Gap

On the other side, you've got agencies and dev shops. They write code, deploy models, and ship integrations. They have engineering teams, sprint cycles, and project managers. They can build things.

The problem is that neither camp has the feedback loop between strategy and execution.

I watched this play out last year. A CEO I know hired a well-known AI consulting firm to assess their customer service operations. The consultants recommended an AI chatbot — solid recommendation on paper. They spec'd out the vendor, projected a 35% reduction in ticket volume, and delivered a polished strategy document.

Then reality hit. The company's customer data lived across three systems that didn't talk to each other. The chatbot needed clean product data that didn't exist. The "simple" integration required rebuilding their entire data pipeline first. The consultants didn't know this because they'd never actually built a chatbot on messy real-world data. They'd evaluated vendor demos on clean sample datasets.

Six months and $180K later, the chatbot handled about 8% of tickets.

That gap — between what looks right in a strategy document and what actually works in production — is where most AI projects go to die.

What AI Consultants Get Right (And Where They Fall Short)

The Value of Strategic Context

I want to be fair here. Good AI consultants bring real value. They understand business problems. They can look at a company's operations and identify which processes are candidates for AI, which aren't, and what order makes sense. They speak the language of margins, headcount, and competitive positioning. They help CEOs think clearly about where AI fits.

That strategic framing matters. Without it, companies build shiny AI tools that don't connect to any business outcome. I've seen companies spend $100K on an internal AI tool that saves one employee 3 hours a week. The math never worked, but nobody with business context was in the room when the build started.
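To make that math explicit, here is a rough payback sketch. The $100K cost and the 3 hours a week come from the example above. The $75 fully loaded hourly rate is an assumption chosen purely for illustration, and `payback_years` is a hypothetical helper.

```python
def payback_years(build_cost: float, hours_saved_per_week: float,
                  hourly_cost: float, weeks_per_year: int = 52) -> float:
    """Years needed for labor savings alone to cover the build cost."""
    annual_savings = hours_saved_per_week * weeks_per_year * hourly_cost
    if annual_savings <= 0:
        raise ValueError("annual savings must be positive")
    return build_cost / annual_savings


# $100K tool, 3 hours saved per week (figures from the example above).
# The $75/hour loaded labor cost is an assumed figure, not from the article.
years = payback_years(build_cost=100_000, hours_saved_per_week=3, hourly_cost=75)
print(f"Payback period: {years:.1f} years")
```

Under that assumed rate the tool takes more than eight years to pay for itself, which is the kind of check someone with business context would run before the build starts.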

The Advisor Blind Spot

Here's where it breaks down. Most AI consultants have never shipped a production AI system. They recommend tools based on vendor demos, analyst reports, and conference talks — not deployment experience.

They don't know that Claude handles structured content generation better than GPT for certain tasks, or that Gemini approaches image generation with a completely different logic. They haven't discovered that an AI shopping assistant needs 14 different failure modes handled before it's reliable enough to put in front of customers. They've never learned the hard way that a multi-model architecture isn't a luxury; it's a cost and quality necessity once you're running AI at any real scale.

There's a massive difference between recommending "you should use AI for dynamic pricing" and knowing that 564 products need different pricing logic based on material cost, competition data, and margin targets — organized into a 4-tier ABC classification system that took weeks of iterative building to get right.
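A minimal sketch of what tier-dependent pricing logic can look like. The tier labels, margin targets, `Product` fields, and the capping rule are all hypothetical. The only details taken from the text are that pricing depends on material cost, competition data, and margin targets across four tiers. This is an illustration, not the production system.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical margin targets per tier; real values would come from margin data.
TARGET_MARGIN = {"A": 0.60, "B": 0.50, "C": 0.40, "D": 0.30}


@dataclass
class Product:
    sku: str
    material_cost: float
    competitor_price: Optional[float]  # None when no comparable listing exists
    tier: str  # one of "A", "B", "C", "D" (assumed labels)


def suggest_price(p: Product) -> float:
    """Price for the tier's margin target, capped by competition, floored at cost."""
    margin = TARGET_MARGIN[p.tier]
    # Price at which (price - cost) / price equals the target margin.
    target = p.material_cost / (1 - margin)
    if p.competitor_price is None:
        return round(target, 2)
    # Do not exceed the competitor's price, but never price below material cost.
    return round(max(p.material_cost, min(target, p.competitor_price)), 2)


print(suggest_price(Product("SKU-1", 20.0, 45.0, "A")))
print(suggest_price(Product("SKU-2", 20.0, None, "C")))
```

Even this toy version has to decide what happens when competitor data is missing or undercuts cost, which is the kind of question that only comes up during a build.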

The consultant gives you the first sentence. The second sentence only comes from building.

And that gap between advice and implementation? That's where most AI projects die. Not because the strategy was wrong, but because nobody on the strategy side understood what the build would actually require.

What AI Agencies Get Right (And Where They Fall Short)

The Value of Technical Execution

Agencies can build. That's their job, and the good ones do it well. They ship code. They deploy models. They create integrations. They have engineering teams who know how to handle infrastructure, testing, and deployment pipelines.

If you know exactly what you need built and can write a tight spec, a good agency will execute it. That's genuinely valuable.

The Agency Blind Spot

Agencies build to spec, not to strategy. They optimize for the deliverable because the deliverable is the contract. They don't push back on the brief because pushing back means scope creep, timeline risk, and uncomfortable conversations with clients who are paying by the milestone.

Here's a concrete example. An agency would happily build you a blog automation system that publishes 50 articles a month. They'd nail the technical execution — content generation, formatting, scheduling, publishing. Clean code, on time, on budget.

But without strategic context, those articles target the wrong keywords, cannibalize each other's search rankings, and don't connect to your sales funnel. You end up with volume that produces zero revenue.

I manage 313 blog articles with AI-assisted SEO across my own DTC fashion brand. That system works — not because the code is clever, but because the strategy and the build happened in the same brain. Every article connects to a keyword strategy. Every keyword connects to a product category. Every product category connects to revenue. That connection doesn't show up in a technical spec.
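One small piece of that connection can be checked mechanically. The sketch below flags keywords targeted by more than one article, a common sign of cannibalization. The article titles, the `primary_keyword` field, and the function name are made up for illustration; this is not the system described above.

```python
from collections import defaultdict


def find_cannibalized_keywords(articles):
    """Return {keyword: [titles]} for keywords targeted by two or more articles."""
    by_keyword = defaultdict(list)
    for article in articles:
        # Normalize so "Linen Shirts" and "linen shirts " count as the same target.
        key = article["primary_keyword"].strip().lower()
        by_keyword[key].append(article["title"])
    return {kw: titles for kw, titles in by_keyword.items() if len(titles) > 1}


# Hypothetical sample data.
articles = [
    {"title": "How to Style Linen Shirts", "primary_keyword": "linen shirts"},
    {"title": "Best Linen Shirts for Summer", "primary_keyword": "Linen Shirts "},
    {"title": "Caring for Silk Scarves", "primary_keyword": "silk scarf care"},
]

conflicts = find_cannibalized_keywords(articles)
for keyword, titles in conflicts.items():
    print(f"{keyword}: {len(titles)} articles compete for this keyword")
```

A check like this catches the symptom. Deciding which article should own the keyword, and which product category it feeds, is still a strategy call.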

Agencies also create dependency. They build it, hand it over, and you need them for every change. My AI toolkit has 22,000+ lines of custom Python. That's not something you can hand off to a rotating agency team with developer turnover every six months and expect coherence. The codebase has opinions — architectural choices that reflect business strategy. A new team wouldn't know why things were built the way they were, and they'd start making "improvements" that break the strategic logic.

When Building Informs Strategy (And Vice Versa)

This is the core of why the AI consultant vs AI agency framing misses the point. The real advantage isn't consulting or building. It's the feedback loop between them.

The Strategy-Build Feedback Loop

The Product Pipeline Discovery

When I started building the product creation system for my DTC brand, the goal was simple: speed up listing creation. Write product descriptions faster, format images quicker, get products live sooner.

That was the strategy going in.

But during the build, I discovered something the strategy hadn't accounted for. The bottleneck wasn't listing speed. It was concept validation. My team was spending hours creating listings for product concepts that didn't perform. The real waste wasn't slow listings — it was listings for the wrong products.

That insight — which only surfaced because I was hands-on in the build — completely restructured the project. Instead of a faster listing tool, I built an end-to-end pipeline that goes from concept to live product in 20 minutes. Concept generation, validation, description writing, image handling, pricing, and publishing — all in one flow.

An advisor would have recommended a copywriting tool. An agency would have built faster listing templates. Neither would have restructured the entire pipeline, because neither would have been deep enough in the process to see what was actually broken.

Strategy Changes Mid-Build

Second example. I started with a single LLM for everything. That was the strategy: pick the best model, build everything on it, keep it simple.

Building revealed that was wrong. Claude excels at structured content — product descriptions, blog drafts, data analysis. But Gemini handles certain visual and generative tasks with a fundamentally different approach that produces better results for specific use cases. Meanwhile, cost optimization at scale demands routing different tasks to different models based on complexity.

That technical discovery became a strategic principle: match models to tasks. It saves real money when you're running thousands of AI operations per month.
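A minimal sketch of that principle as a routing table. The task names, the model labels, and the `cheap-small-model` fallback are placeholders. They are not real API model identifiers and not the actual configuration.

```python
from enum import Enum


class Task(Enum):
    PRODUCT_DESCRIPTION = "product_description"
    BLOG_DRAFT = "blog_draft"
    IMAGE_GENERATION = "image_generation"
    TAGGING = "tagging"  # assumed example of a low-complexity task


# Placeholder labels only, not actual API model names.
ROUTES = {
    Task.PRODUCT_DESCRIPTION: "claude",
    Task.BLOG_DRAFT: "claude",
    Task.IMAGE_GENERATION: "gemini",
}
DEFAULT_MODEL = "cheap-small-model"  # hypothetical low-cost fallback


def pick_model(task: Task) -> str:
    """Route a task to its assigned model, falling back to the cheap default."""
    return ROUTES.get(task, DEFAULT_MODEL)


for task in Task:
    print(f"{task.value} -> {pick_model(task)}")
```

Keeping the mapping in one table means a routing change is a one-line edit rather than a hunt through every call site.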

Here's the feedback loop in plain terms. Strategy said "we need to reduce manual operations time." Building revealed that a 42% reduction required rethinking the workflow entirely — not just automating existing steps. You can't automate a bad process and call it progress. Building showed me which processes needed to be redesigned before they could be automated. That changed the strategy. The new strategy changed what I built next.

Strategy and building have to happen together. Separate them into different companies — or even different people — and you lose the loop that makes AI projects actually work.

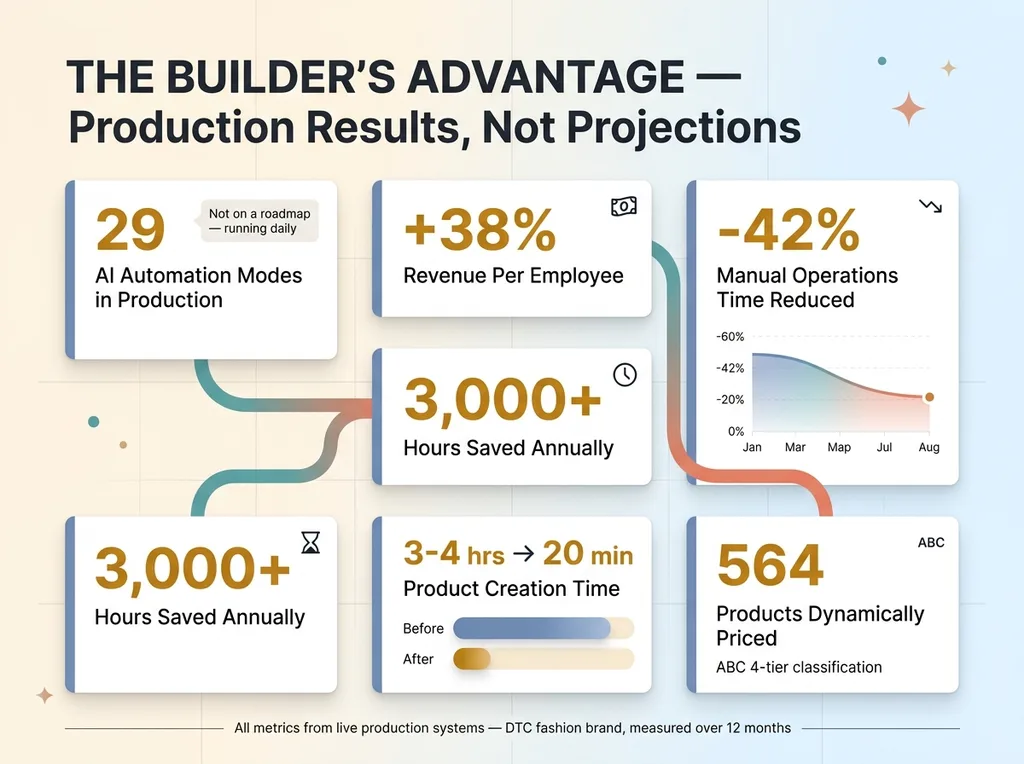

The Numbers Behind the Builder's Advantage

Let me make this concrete with hard data.

Hard Numbers: Builder's Advantage Results

29 AI-powered automation modes in production. Not proposed. Not on a roadmap. In production, running daily, handling real business operations. That distinction matters because proposals don't generate revenue.

+38% revenue per employee. That metric only exists because the same person tracking business KPIs was building the systems. I knew which metrics mattered, so I built systems that moved those specific metrics.

-42% manual operations time. Knowing which 42% to cut required understanding both the business priorities and the technical possibilities. Some tasks that seemed like obvious automation candidates turned out to be poor fits. Other tasks I never would have thought to automate turned out to be the biggest wins.

3,000+ hours saved annually. But here's the thing — the hours that matter most aren't the obvious ones. Building reveals where the real time sinks are, often in places you'd never identify from a strategy document.

Product creation: 3-4 hours down to 20 minutes. That roughly 10x improvement (9x to 12x, depending on the starting point) happened iteratively during the build. The first version saved maybe 30%. Then I saw where the remaining time went, rebuilt those steps, and kept compressing. That iteration only happens when the builder understands the business context of every minute saved.

564 products dynamically priced. The pricing logic evolved through building and testing — adjusting rules, watching results, refining the ABC classification tiers based on actual margin data. No strategy document would have gotten the pricing logic right on the first pass. Or the fifth.

These numbers are the result of strategy and building happening in the same loop. Not in separate departments. Not in separate companies. In the same brain, every day.

How to Evaluate Who Should Lead Your AI Efforts

Three Questions to Ask Any AI Partner

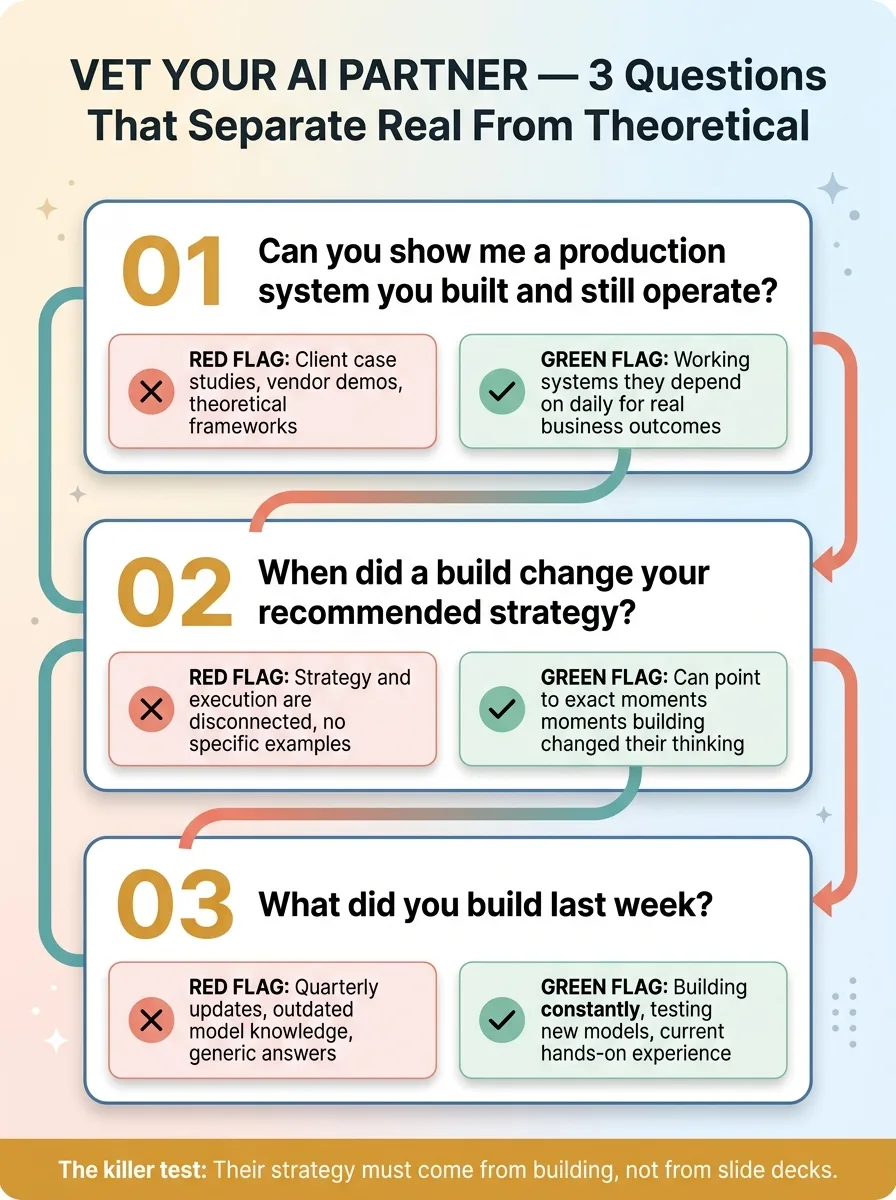

If you're comparing options — consultant, agency, or someone who does both — here are three questions that will separate the real from the theoretical.

Three Evaluation Questions Decision Framework

1. Can you show me a production system you built and still operate?

Not a client case study. Not a demo. A system you built for yourself, that you run daily, that you depend on for real business outcomes. If they can't show you one, they're advising from theory.

2. When did a build change your recommended strategy?

This is the killer question. If they can't point to a specific moment where hands-on building changed their strategic thinking, their strategy and execution are disconnected. They're operating in two separate worlds.

3. What did you build last week?

AI moves too fast for quarterly updates. The person leading your AI efforts needs to be building constantly — testing new models, trying new approaches, discovering what works now versus what worked three months ago.

Red Flags That Signal the Wrong Fit

Watch for these:

- They recommend tools they don't personally use

- Their case studies are all "we helped [client] achieve [vague metric]"

- They can't explain tradeoffs between different AI models from firsthand experience

- They propose a 6-month roadmap before understanding your data

- They want a $30K "discovery phase" before anything gets built

- They talk about AI in generalities — "machine learning," "natural language processing" — without specifics about which models, which approaches, which tradeoffs

Green flags: they've shipped their own AI systems. They can show you working code. They talk openly about failures and limitations. They can start building in weeks, not months.

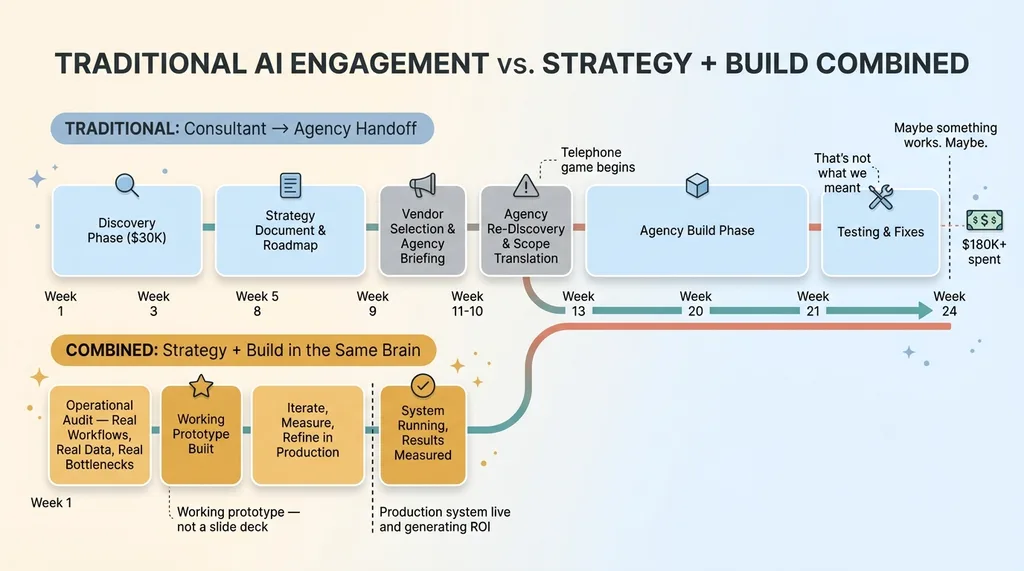

What It Looks Like When Strategy and Building Are the Same Job

When I engage with a company, week one isn't a PowerPoint. It's an operational audit — looking at real workflows, real data, real bottlenecks. By the end of week one, we've identified the highest-ROI system to build. By week two, there's a working prototype.

Week-by-Week Engagement Timeline vs Traditional Approach

Decisions happen faster because the person recommending the approach is the same person who will build it and live with the consequences. There's no telephone game between the strategy team and the engineering team. No scope document that gets reinterpreted three times. No "that's not what we meant" moment four weeks into development.

This is what a Chief AI Officer actually does — not advise from the outside, but operate from the inside. Sit in the business, understand the priorities, build the systems, measure the results, and adjust. Continuously.

If you're earlier in your thinking, working out which AI systems to prioritize is a good place to start. Not every company needs 29 automation modes. Some need three. The right three.

This approach isn't for everyone. It's for companies that are done with slide decks and ready to see real systems running inside their business. If that's where you are, start a conversation about what that looks like.

Ready to Bring AI Leadership Into Your Company?

I work with a small number of companies at a time. If you're serious about building real AI systems — not just talking about them — apply to work together and I'll review your application personally.
