I Built an AI Video Review System Using Gemini 2.5 Pro

How I built a business tool for AI video analysis that reviews advisor recordings and delivers coaching feedback using Gemini 2.5 Pro's native video understanding.

By Mike Hodgen

A financial advisory firm managing $500M+ came to me with a problem that was eating their senior partner alive. They wanted to improve how their advisors showed up on camera — client-facing recordings, educational videos, quarterly market updates. The kind of content that builds trust or quietly erodes it.

Their process was fully manual. The managing partner would sit down, watch each advisor's recordings, take notes, then deliver feedback in a one-on-one. It took hours every week. The feedback was inconsistent — sometimes detailed, sometimes a quick "looks good" because he was behind on everything else. And it created a bottleneck that meant most videos went unreviewed entirely.

Here's why they cared enough to call me: in wealth management, a single client relationship can represent $50K to $500K+ in annual fees. When an advisor looks nervous, rushes through a market downturn explanation, or reads from notes during a "personal" update, clients notice. Maybe not consciously. But trust erodes, and eventually they take a call from a competitor who seems more polished.

The firm knew the stakes. They just didn't have the bandwidth to address them at scale.

So they asked me a question I hadn't been asked before: can AI actually watch a video and give meaningful, nuanced coaching feedback?

Most people still think of AI as a text tool. Chatbots. Email drafts. Content generation. This project pushed into genuinely new territory — AI video analysis for business purposes, applied to a problem where the nuance actually matters and the dollars are significant.

I said yes, but with a caveat: let me build it and prove it before you commit. That honesty is what got the project off the ground.

Why Video Is the Next Frontier for AI Analysis in Business

Text and images are solved problems

At this point, AI handling text is table stakes. Summarizing documents, writing drafts, analyzing customer feedback — these are mature applications. Even image analysis has become routine. I use AI to process product photography for my DTC fashion brand without thinking twice about it.

But video? Almost nobody is using AI to analyze video in a business context. And video is everywhere. Sales demo recordings. Training sessions. Compliance review footage. Onboarding walkthroughs. Marketing content. The average mid-size company produces dozens of hours of video per month that either gets watched by expensive humans or — more commonly — never gets reviewed at all.

AI video analysis for business is where text analysis was two years ago: early, underexplored, and carrying massive potential for the companies that move first.

What Gemini 2.5 Pro actually understands about video

What makes Gemini 2.5 Pro different from duct-taping together a transcription service and an image analyzer is that it processes video natively. It's not looking at a series of screenshots. It understands temporal relationships — what happened before and after a given moment. It tracks pacing, transitions, and audio-visual alignment in a way that feels closer to how a human watches video.

It picks up on body language cues, gaze direction, speech rhythm, and how content is structured over time.

I'll be honest about the limitations. It's not perfect at reading subtle facial expressions. It can miss cultural nuances in communication style. It sometimes misinterprets deliberate pauses as hesitation. But for structured coaching feedback against defined criteria — specific rubrics with clear behavioral anchors — it's remarkably capable.

This is exactly why I use different AI models for different jobs. Claude excels at text reasoning and nuanced written analysis. Gemini excels at multimodal understanding. I'm not loyal to any single model. I'm loyal to results.

The System Architecture: Multi-Take Recording to AI Review

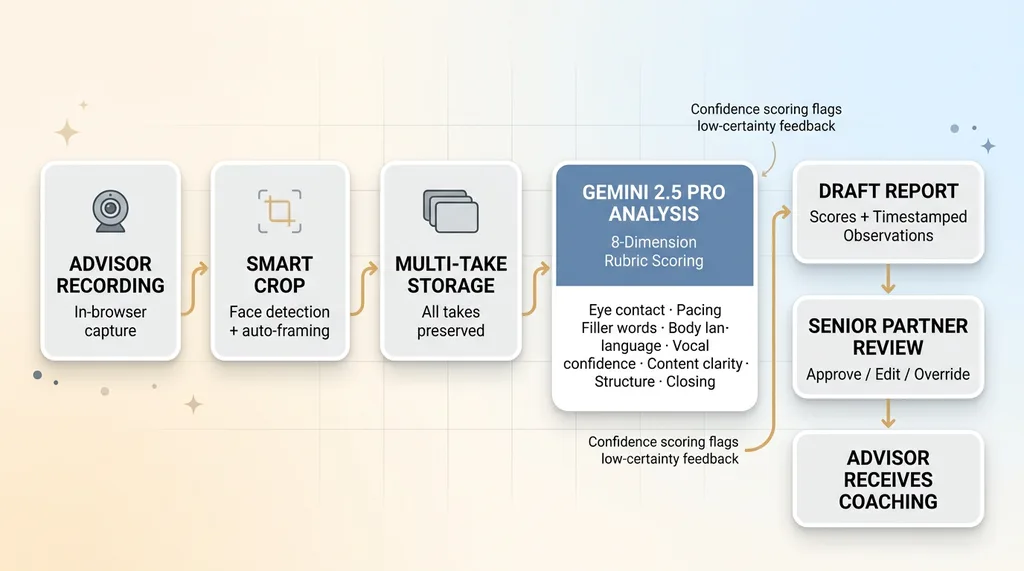

System Architecture: Recording to AI Review Pipeline

Smart crop and recording pipeline

The first problem wasn't the AI. It was getting usable video from advisors who aren't content creators.

I built a system where advisors record directly in-browser. No apps to install, no equipment to buy. They open a link, hit record, and talk. The key feature: smart crop. Using face detection, the system automatically frames the speaker, centering them in a consistent composition regardless of their webcam setup, office lighting, or how far they sit from the screen.

This matters more than it sounds. Without it, every video looks different — one advisor is a tiny figure in a massive room, another is so close you can see their pores. The smart crop normalizes everything so the AI is evaluating performance, not production quality.
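To make the smart crop idea concrete, here is a minimal sketch of the framing math. It is an illustration, not the system's actual code. It assumes a face detector (any will do) has already returned a bounding box in pixels; the `Box` type, the `smart_crop` name, and the `face_fraction` default are all hypothetical choices made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    x: int  # left edge, pixels
    y: int  # top edge, pixels
    w: int  # width, pixels
    h: int  # height, pixels


def smart_crop(face: Box, frame_w: int, frame_h: int,
               face_fraction: float = 0.35, aspect: float = 16 / 9) -> Box:
    """Return a crop centered on the face and clamped to the frame.

    face_fraction is the share of the crop height the face should fill
    (an assumed default, not a value from the article).
    """
    # Size the crop so the face fills the requested share of its height,
    # never exceeding the frame itself.
    crop_h = min(frame_h, round(face.h / face_fraction))
    crop_w = min(frame_w, round(crop_h * aspect))
    # If the width was clamped, shrink the height to keep the aspect ratio.
    crop_h = min(crop_h, round(crop_w / aspect))

    # Center the crop on the middle of the face.
    center_x = face.x + face.w / 2
    center_y = face.y + face.h / 2
    x = round(center_x - crop_w / 2)
    y = round(center_y - crop_h / 2)

    # Slide the crop back inside the frame if it hangs over an edge.
    x = max(0, min(x, frame_w - crop_w))
    y = max(0, min(y, frame_h - crop_h))
    return Box(x, y, crop_w, crop_h)
```

Applying the same `face_fraction` to every recording is what makes the framing consistent: an advisor sitting far from the webcam gets a tighter crop, and one sitting close gets a wider one.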

The multi-take workflow

Advisors hated the pressure of one-shot recording. They'd get three sentences in, stumble, and want to start over. Then they'd overthink the second attempt.

So I built a multi-take workflow. Advisors can record as many takes as they want. The system stores each one. The AI reviews all of them, recommends which take is strongest, and explains why. This alone changed adoption. Advisors went from dreading recording sessions to treating them like practice with a built-in coach.
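In the system described here, the model itself recommends the strongest take. As a hedged illustration of how that recommendation could be sanity-checked against the per-dimension scores, the sketch below picks the take with the highest mean score and lists the dimensions where it leads. The function name, the take labels, and the example scores are hypothetical.

```python
from statistics import mean


def strongest_take(takes: dict[str, dict[str, int]]) -> tuple[str, list[str]]:
    """Return the take with the highest mean score and the dimensions it leads.

    `takes` maps a take label to {dimension: score}. Every take is assumed
    to be scored on the same set of dimensions.
    """
    best = max(takes, key=lambda name: mean(takes[name].values()))
    # A dimension "leads" when no other take scored higher on it.
    leads = [
        dim for dim, score in takes[best].items()
        if all(score >= other[dim] for other in takes.values())
    ]
    return best, leads


# Hypothetical scores for two takes of the same video.
example = {
    "take_1": {"eye contact": 6, "pacing": 7, "filler words": 4},
    "take_2": {"eye contact": 8, "pacing": 6, "filler words": 7},
}
best, leads = strongest_take(example)
```

The `leads` list gives a simple starting point for the "explains why" part: the recommended take can be justified by the dimensions where it outperformed the others.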

Feeding video to Gemini 2.5 Pro

The video uploads to Gemini via its API. Straightforward on the surface. The hard part — by a wide margin — was the prompt engineering.
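For readers who want to see what that upload step can look like, here is a minimal sketch using Google's `google-genai` Python SDK. It is an assumption that this matches the author's setup; the model ID string, the polling interval, and the timeout are illustrative, and the SDK calls should be checked against the current documentation before use.

```python
import time

MODEL_NAME = "gemini-2.5-pro"  # assumed model ID; confirm against current docs


def review_video(path: str, prompt: str, api_key: str,
                 poll_seconds: float = 5.0, timeout_seconds: float = 600.0) -> str:
    """Upload a video via the Files API and ask the model to review it."""
    # Imported lazily so this module can be loaded without the SDK installed.
    from google import genai

    client = genai.Client(api_key=api_key)
    video = client.files.upload(file=path)

    # Uploaded video is processed asynchronously; poll until it is usable.
    deadline = time.monotonic() + timeout_seconds
    while video.state is None or video.state.name != "ACTIVE":
        if video.state is not None and video.state.name == "FAILED":
            raise RuntimeError("Video processing failed")
        if time.monotonic() > deadline:
            raise TimeoutError("Video was not ready before the timeout")
        time.sleep(poll_seconds)
        video = client.files.get(name=video.name)

    response = client.models.generate_content(
        model=MODEL_NAME,
        contents=[video, prompt],
    )
    return response.text or ""
```

Nearly all of the work lives in the `prompt` argument, which is where the rest of this section goes.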

My first attempt produced feedback like "Good energy! Consider working on your pacing." Useless. The kind of generic encouragement you'd get from a motivational poster.

I had to build detailed rubrics covering 8 specific dimensions: eye contact consistency, speech pacing, filler word frequency, body language openness, vocal confidence, content clarity, structural organization, and closing effectiveness. Each dimension is scored 1-10 with specific behavioral anchors. A 7 on eye contact means something different from a 7 on pacing, and the system knows why.

Getting those rubrics right took 3 full iterations. The first version was too vague. The second was too rigid: it penalized stylistic choices that were actually effective. The third version balanced structure with enough flexibility to recognize that a slower pace isn't always a problem and that norms around direct eye contact vary.
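One way to represent a rubric like this is as data that gets rendered into the prompt. The sketch below is an assumption about structure, not the actual rubric: the eight dimension names come from the article, but the `Dimension` type, the prompt wording, and the example anchors for eye contact are hypothetical.

```python
from dataclasses import dataclass

DIMENSION_NAMES = [
    "eye contact consistency", "speech pacing", "filler word frequency",
    "body language openness", "vocal confidence", "content clarity",
    "structural organization", "closing effectiveness",
]


@dataclass(frozen=True)
class Dimension:
    name: str
    anchors: dict[int, str]  # score -> observable behavior at that score


def build_rubric_prompt(dimensions: list[Dimension]) -> str:
    """Render the rubric as plain-text instructions for the model."""
    lines = [
        "Score the speaker from 1 to 10 on each dimension below.",
        "Calibrate against the behavioral anchors and cite timestamps (m:ss).",
        "",
    ]
    for dim in dimensions:
        lines.append(f"{dim.name}:")
        for score in sorted(dim.anchors):
            lines.append(f"  {score}: {dim.anchors[score]}")
    return "\n".join(lines)


# Hypothetical anchors for a single dimension, for illustration only.
eye_contact = Dimension(
    name=DIMENSION_NAMES[0],
    anchors={
        3: "Reads from notes for most of the recording",
        7: "Looks at the camera most of the time, with brief glances away",
        10: "Holds the camera throughout, looking away only at natural pauses",
    },
)
prompt = build_rubric_prompt([eye_contact])
```

Keeping the anchors in data rather than in a hand-edited prompt string makes each rubric iteration a small, reviewable change.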

The prompt also instructs Gemini to provide timestamped observations. Not "your pacing needs work" but "between 1:42 and 2:15, your speech rate dropped to approximately 90 words per minute while explaining the fee structure — this may signal uncertainty to clients who are already sensitive about costs."

That level of specificity is what separates a useful system from a toy.
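Timestamped output is only useful if the timestamps are real, so it is worth checking them before anyone reads the report. The sketch below is an assumed safeguard, not something the article describes: it parses `m:ss` stamps and rejects observations whose range is reversed or runs past the end of the video. The `Observation` fields are hypothetical.

```python
from dataclasses import dataclass


def parse_timestamp(stamp: str) -> int:
    """Convert 'm:ss' or 'h:mm:ss' into seconds."""
    seconds = 0
    for part in stamp.split(":"):
        seconds = seconds * 60 + int(part)
    return seconds


@dataclass(frozen=True)
class Observation:
    dimension: str
    start: str  # e.g. "1:42"
    end: str    # e.g. "2:15"
    note: str


def is_plausible(obs: Observation, video_seconds: int) -> bool:
    """True when the time range parses, is ordered, and fits inside the video."""
    try:
        start = parse_timestamp(obs.start)
        end = parse_timestamp(obs.end)
    except ValueError:
        return False
    return 0 <= start <= end <= video_seconds
```

An observation that fails this check is a good candidate for automatic removal or for a flag in the reviewer's draft.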

What the AI Actually Catches (And What It Misses)

Surprisingly good at pacing and filler words

Filler word detection is near-perfect. The system catches nearly every "uh," "um," "like," "so," and "you know," each with a timestamp. One advisor was identified as saying "um" 47 times in a 6-minute video. He had no idea. He'd been recording for months and nobody had mentioned it because the human reviewer was focused on content accuracy and missed the verbal tics entirely. That single insight changed his next recording dramatically: he dropped to 11 filler words by his third attempt.
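The counts in this system come from the model watching the video. As an independent cross-check, a plain transcript can be counted the old-fashioned way. The sketch below assumes a transcript is available from some other source, and the function name is hypothetical.

```python
import re
from collections import Counter

# The filler list from the article; multi-word entries work the same way.
FILLERS = ("you know", "uh", "um", "like", "so")


def count_fillers(transcript: str) -> Counter:
    """Count whole-word occurrences of each filler, case-insensitively."""
    text = transcript.lower()
    counts: Counter = Counter()
    for filler in FILLERS:
        pattern = r"\b" + re.escape(filler) + r"\b"
        counts[filler] = len(re.findall(pattern, text))
    return counts


sample = "Um, so the market, uh, you know, it dipped. Um."
sample_counts = count_fillers(sample)
```

A word count like this cannot tell a filler "like" or "so" from a legitimate one, so it will overcount those two. That gap is one reason a model that hears the delivery in context is the better primary signal.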

Pacing analysis is also strong. The AI identifies when advisors rush through complex financial concepts (like portfolio rebalancing rationale) or linger too long on basics their audience already understands. It detects pacing shifts that correlate with discomfort — when someone speeds up, it often means they're not confident in what they're saying.
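Pace shifts of this kind can be expressed as a simple calculation. The sketch below is an illustration under stated assumptions, not the model's internal method: it takes segments as `(start_seconds, end_seconds, word_count)` tuples, computes words per minute for each, and flags segments that deviate from the median by more than a tolerance. The 25% tolerance and the example segments are made up.

```python
from statistics import median


def words_per_minute(word_count: int, start_s: float, end_s: float) -> float:
    """Speech rate over one segment."""
    if end_s <= start_s:
        raise ValueError("segment must have positive duration")
    return word_count * 60 / (end_s - start_s)


def flag_pace_shifts(segments: list[tuple[float, float, int]],
                     tolerance: float = 0.25) -> list[tuple[float, float, int]]:
    """Return (start, end, rounded_wpm) for segments far from the median rate."""
    rates = [words_per_minute(words, start, end) for start, end, words in segments]
    baseline = median(rates)
    return [
        (start, end, round(rate))
        for (start, end, _), rate in zip(segments, rates)
        if abs(rate - baseline) > tolerance * baseline
    ]


# Hypothetical one-minute segments of a four-minute recording.
flagged = flag_pace_shifts([(0, 60, 150), (60, 120, 160), (120, 180, 90), (180, 240, 155)])
```

A flag only says the pace changed. Whether the change was a problem or a deliberate choice still needs the content context, which is the limitation discussed in the next section.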

Eye contact tracking works well for the binary question: is the advisor looking at the camera or reading from notes? It caught several advisors who had scripts taped next to their webcam, and their gaze kept drifting left. On video, that reads as evasiveness.

Content structure analysis — whether the video has a clear opening, body, and close — is solid. It flags videos that just... start talking without framing the topic, or that trail off without a defined ending.

The body language question

This is where things get more nuanced. Gemini can identify obvious body language — crossed arms, leaning away from the camera, hand gestures. It's decent at spotting when someone's posture shifts significantly during a recording.

But it struggles with subtle confidence cues. Nervous energy that manifests as slight fidgeting, micro-expressions, the difference between authoritative stillness and frozen discomfort. These are things an experienced human coach reads intuitively. The AI isn't there yet.

It also sometimes over-indexes on a single dimension. I saw it ding an advisor for slow pacing during a section where the deliberate, measured delivery was actually the right call — she was walking through a complex tax-loss harvesting scenario and her audience needed the slower speed.

Where human review still wins

Cultural communication styles, humor that lands versus falls flat, the emotional resonance of a personal story — these remain human territory. The AI can tell you that someone told an anecdote at the 3:20 mark. It can't reliably tell you whether it landed.

This is why the system was never designed to run autonomously. Which brings me to the most important architectural decision in the whole build.

The Draft Workflow: Why AI Feedback Needs a Human Layer

AI generates, humans approve

Every AI coaching report starts as a draft. The AI generates raw feedback — scores across all 8 dimensions, timestamped observations, a recommended best take with rationale, and specific improvement suggestions.

This draft goes to the senior partner. Not to the advisor.

The partner reviews the AI's analysis instead of watching the full video. They can approve, edit, or override any feedback point. They can add context the AI can't know ("this advisor just lost a major client last week, go easy on the confidence score"). They can flag when the AI got something wrong.

This is the same pattern I use across my systems — AI that rejects its own bad work before it reaches the end user. In this case, the AI also scores its own confidence on each dimension. Eye contact: high confidence. Humor effectiveness: low confidence. Low-confidence scores get flagged automatically so the reviewer knows where to focus their attention.
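The draft-then-approve flow and the confidence flags can be sketched as a small data model. This is an assumed shape, not the system's actual schema: the class names, the numeric 0.0 to 1.0 confidence scale, and the 0.6 threshold are hypothetical (the article only describes confidence as high or low).

```python
from dataclasses import dataclass


@dataclass
class DimensionScore:
    dimension: str
    score: int          # 1-10, from the model
    confidence: float   # assumed scale: 0.0 (unsure) to 1.0 (sure)
    reviewer_note: str = ""


@dataclass
class DraftReport:
    advisor: str
    scores: list[DimensionScore]
    status: str = "draft"  # nothing reaches the advisor until "approved"

    def needs_attention(self, threshold: float = 0.6) -> list[str]:
        """Dimensions the reviewer should look at first."""
        return [s.dimension for s in self.scores if s.confidence < threshold]

    def override(self, dimension: str, score: int, note: str) -> None:
        """Let the reviewer replace a score and record why."""
        for entry in self.scores:
            if entry.dimension == dimension:
                entry.score = score
                entry.reviewer_note = note
                return
        raise KeyError(dimension)

    def approve(self) -> None:
        self.status = "approved"
```

Recording the reviewer's note next to each override also builds a log of where the model tends to be wrong, which is useful input for the next rubric iteration.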

Turning scores into coaching

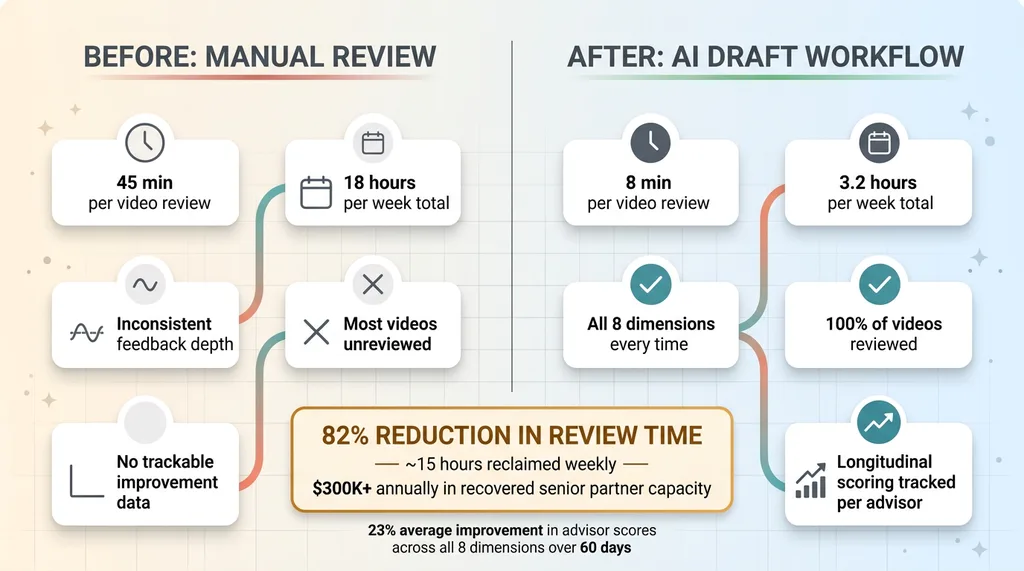

The math on time savings is striking. Twelve advisors, two recordings per week each. That's 24 videos. At 45 minutes of manual review per video, the senior partner was spending 18 hours per week just watching recordings. With the AI draft workflow, he reviews the AI's output in about 8 minutes per video. That's 3.2 hours per week.

Before vs After: Manual Review vs AI Draft Workflow

Nearly 15 hours reclaimed every week for a senior partner whose time is worth $400+ per hour. That's roughly $6,000 per week in recovered capacity, or over $300,000 annually.
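The arithmetic behind those figures is easy to reproduce. The inputs below come straight from the numbers above; the 52-week year and the flat $400 rate (the low end of "$400+") are assumptions.

```python
def review_savings(advisors: int = 12, recordings_per_week: int = 2,
                   manual_minutes: float = 45, draft_minutes: float = 8,
                   hourly_rate: float = 400, weeks_per_year: int = 52) -> dict[str, float]:
    """Weekly and annual time savings from reviewing drafts instead of videos."""
    videos = advisors * recordings_per_week
    manual_hours = videos * manual_minutes / 60
    draft_hours = videos * draft_minutes / 60
    saved_hours = manual_hours - draft_hours
    return {
        "videos_per_week": videos,
        "manual_hours": manual_hours,
        "draft_hours": draft_hours,
        "saved_hours": saved_hours,
        "reduction_pct": saved_hours / manual_hours * 100,
        "weekly_value": saved_hours * hourly_rate,
        "annual_value": saved_hours * hourly_rate * weeks_per_year,
    }


savings = review_savings()
# 24 videos: 18.0 manual hours vs 3.2 draft hours, about 14.8 hours saved,
# which is about $5,920 per week and about $307,840 per year at $400/hour.
```

Changing the inputs to match another team's headcount and review time gives a quick first estimate of whether a similar build would pay for itself.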

But the quality improvement matters just as much. The AI evaluates all 8 dimensions every single time. Humans tend to focus on whatever stands out and miss patterns. The feedback is now consistent across all 12 advisors — no more favorites getting extra attention. And every score gets logged, creating a trackable record of improvement over time.

Advisors get feedback with specific timestamps: "At 2:14, you broke eye contact for 8 seconds while discussing the fee structure. This is a common trust erosion point — clients are most attentive to nonverbal cues during discussions about money."

That's coaching. Not just scoring.

Results After 60 Days

After two months in production, the numbers are clear enough to share.

Review time for the senior partner dropped 82%. Average advisor scores across the 8 dimensions improved 23% from first recording to most recent. Feedback consistency — previously all over the map — is now standardized. Every advisor gets the same depth of analysis every time.
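Because every score is logged, an improvement figure like this can be computed directly from the record. The sketch below shows one plausible definition, the percent change in mean score from the first recording to the most recent one. It is an assumption that the 23% was calculated this way, and the example scores are invented.

```python
from statistics import mean


def percent_improvement(first: dict[str, int], latest: dict[str, int]) -> float:
    """Percent change in mean score across the dimensions present in `first`."""
    before = mean(first.values())
    after = mean(latest[dim] for dim in first)
    return (after - before) / before * 100


# Hypothetical scores for one advisor on two dimensions.
change = percent_improvement(
    {"eye contact consistency": 5, "speech pacing": 5},
    {"eye contact consistency": 6, "speech pacing": 7},
)
```

Averaging this value across advisors gives a team-level number. Tracking it per dimension shows where the coaching is actually working.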

Adoption surprised me. After initial skepticism (several advisors called it "creepy" during the first week), 10 of 12 advisors now voluntarily record practice takes before client-facing content. They're using the AI as a personal coach on their own time, without being asked.

One specific outcome stands out. An advisor who was being considered for a performance improvement plan showed enough improvement through the AI coaching cycle that the PIP was cancelled. The firm estimates this retained a $2M book of business. That single save more than covered the entire cost of building the system.

I want to be measured here. This is one deployment, early results, still being tuned. But the signal is clear: AI video analysis isn't theoretical. It's producing measurable business outcomes today.

The Businesses That Figure This Out First Will Own Their Markets

Beyond coaching: compliance, sales, training

The coaching application is just the first use case I built. The same pattern — structured AI analysis of video against defined rubrics — applies everywhere video exists in business.

Compliance teams could review recorded client meetings for regulatory adherence. Did the advisor disclose the required risk factors? Did they make any prohibited promises about returns? Today, compliance officers spot-check maybe 5% of recordings. AI could review 100%.

Sales teams could analyze demo recordings to identify what actually works. Which reps close more deals, and what do they do differently in the first 90 seconds? Training departments could evaluate whether instructors are effective, not just whether they showed up.

The pattern is identical: take video that currently gets watched by expensive humans — or more likely, never gets reviewed at all — and run it through AI that provides structured, consistent analysis.

The businesses that move first win

Most businesses I talk to haven't even considered AI video processing as an option. They're still focused on chatbots and content generation. Meanwhile, they're sitting on hundreds of hours of video that's never been analyzed because human review doesn't scale.

Finding these non-obvious opportunities is exactly what a Chief AI Officer actually does. It's not about chasing the flashiest technology. It's about finding the $400/hour bottleneck that nobody else has noticed and building a system that eliminates it.

If your business creates or reviews video in any capacity — sales, training, compliance, marketing, coaching — this technology is ready now. Not next year. Not when it's "more mature." The model capabilities exist today, and the systems to make them practical in a business context are buildable today.

The question is whether you'll find these opportunities before your competitors do.

Thinking About AI for Your Business?

If anything in this piece made you think about video (or any other process) sitting unreviewed in your company, let's talk. I do free 30-minute discovery calls where we look at your actual operations and figure out where AI could make a real difference — not in theory, but in the next 90 days.
