IDOR Protection: The Vulnerability AI Code Creates by Default

IDOR protection for web apps is critical, especially when AI writes your code. Real examples from auditing 12 projects and the patterns that fix them.

By Mike Hodgen

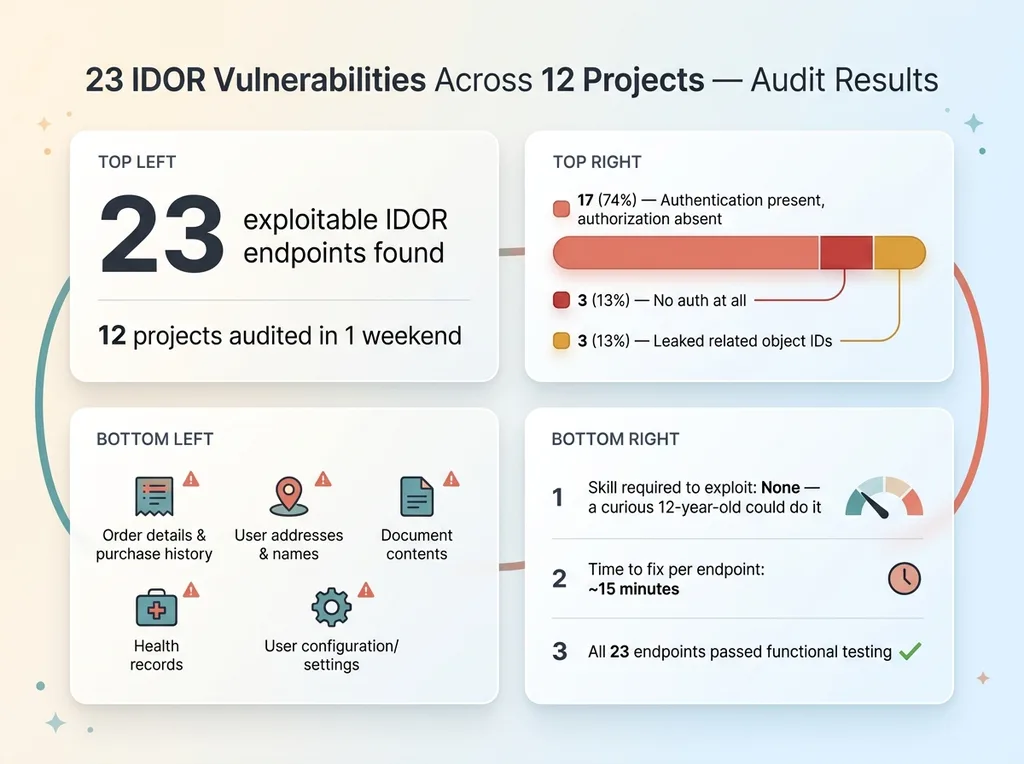

I found 23 IDOR vulnerabilities across 12 projects in one weekend. Not theoretical risks on a slide deck. Not hypothetical attack vectors from a security whitepaper. Real, exploitable endpoints in production and near-production code where any authenticated user could access another user's data by changing a number in the URL.

I Found 23 IDOR Vulnerabilities Across 12 Projects in One Weekend

A few months ago I decided to run a security audit across everything I'd built or helped build — my DTC fashion brand's backend, client projects, internal tools. Twelve codebases total, most with significant AI-assisted code. I was specifically looking for IDOR protection failures in web apps, and what I found kept me up that night.

IDOR Audit Results Data Visualization

The pattern was the same almost every time. An endpoint like /api/orders/[id] that would happily return any user's order if you supplied a valid ID. A /api/documents/[id] route serving documents belonging to other users. Settings endpoints exposing another user's configuration. In one case, a health-related endpoint where incrementing the ID in the URL returned another person's records.

Twenty-three endpoints across twelve projects. Every single one followed the same pattern: the code correctly fetched a resource by its ID, and at no point verified whether the person asking for it had any right to see it.

Let me make this concrete. This isn't like a cross-site scripting vulnerability where an attacker needs to craft a malicious payload and trick someone into clicking it. This is: I log into your app, I see my order is /api/orders/847, I change it to /api/orders/846, and now I'm looking at someone else's order. Their name, their address, their purchase history. No tools required. No technical sophistication. A curious twelve-year-old could do it.

The scariest part: every one of these endpoints passed functional testing. They all worked exactly as coded. The code did what it was supposed to do — it just didn't do what it was supposed to prevent.

And the majority of this code was AI-generated.

What an Insecure Direct Object Reference Actually Is

The Anatomy of an IDOR

An insecure direct object reference — IDOR — is one of those security terms that sounds complicated but describes something painfully simple. Your app exposes an internal identifier (a database ID, a filename, a record number) in a URL or API request, and it doesn't check whether the person making the request actually owns that resource.

Vulnerable vs Secure Code Comparison

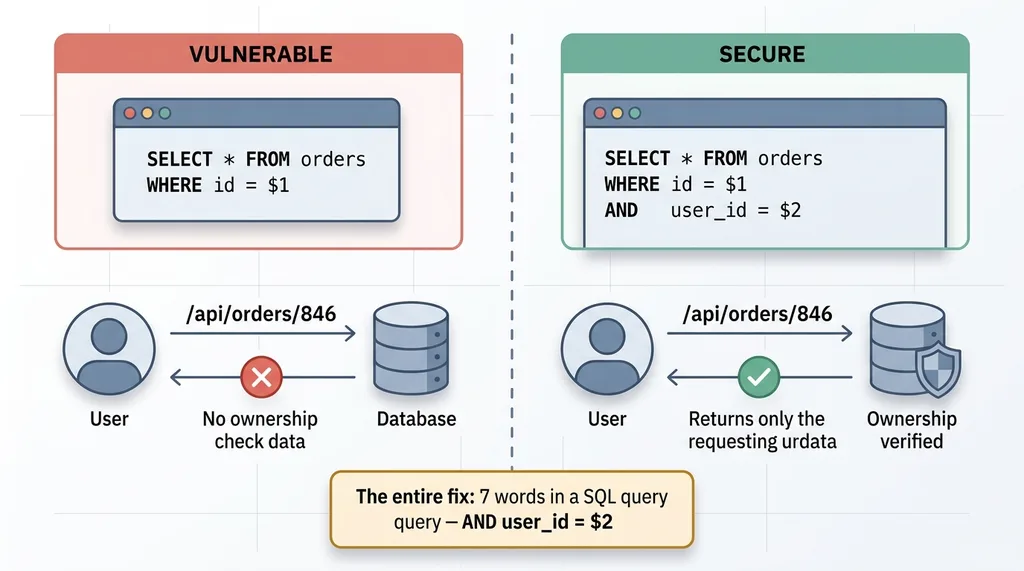

Here's the vulnerable version:

    // VULNERABLE: fetches any order by ID
    app.get('/api/orders/:id', async (req, res) => {
      // Assumes a node-postgres-style client, where query() resolves to { rows }
      const { rows } = await db.query('SELECT * FROM orders WHERE id = $1', [req.params.id]);
      return res.json(rows[0]);
    });

And here's the secure version:

    // SECURE: fetches the order only if the authenticated user owns it
    app.get('/api/orders/:id', async (req, res) => {
      // Assumes a node-postgres-style client, where query() resolves to { rows }
      const { rows } = await db.query(
        'SELECT * FROM orders WHERE id = $1 AND user_id = $2',
        [req.params.id, req.user.id]
      );
      // A result object is always truthy, so check for an actual row
      if (rows.length === 0) return res.status(404).json({ error: 'Not found' });
      return res.json(rows[0]);
    });

The difference is a single extra condition in the SQL query: AND user_id = $2. That's it. The absence of that one condition is the entire vulnerability.

Why IDORs Are Different From Other Vulnerabilities

SQL injection is about malformed input — someone puts '; DROP TABLE users;-- in a form field. XSS is about script injection — someone gets malicious JavaScript to run in another user's browser. These are bugs in what the code does.

IDOR is a bug in what the code doesn't do. The code functions correctly. It fetches the record. It returns valid JSON. It handles errors gracefully. It just never asks the question: "Should this user be seeing this?"

That's what makes IDORs invisible to most testing. Your unit tests pass. Your integration tests pass. Everything works. Because you're always testing with data you own.
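The test that catches this is one where a second user asks for the first user's record. Here is a minimal sketch of that idea, using an in-memory SQLite database in place of a real backend. The table layout, the sample rows, and the get_order helper are all illustrative assumptions, not code from the audited projects.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Create an in-memory database holding orders for two users (sample data)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE orders (id INTEGER PRIMARY KEY, user_id INTEGER, item TEXT)"
    )
    conn.executemany(
        "INSERT INTO orders (id, user_id, item) VALUES (?, ?, ?)",
        [(846, 1, "jacket"), (847, 2, "scarf")],
    )
    return conn


def get_order(conn: sqlite3.Connection, order_id: int, user_id: int):
    """Return the order row only if it belongs to user_id, otherwise None."""
    return conn.execute(
        "SELECT id, user_id, item FROM orders WHERE id = ? AND user_id = ?",
        (order_id, user_id),
    ).fetchone()


def test_owner_can_read_own_order():
    # The happy path that most suites already cover.
    conn = make_db()
    assert get_order(conn, 847, user_id=2) == (847, 2, "scarf")


def test_other_user_cannot_read_order():
    # The test most suites are missing: user 2 asks for user 1's order.
    conn = make_db()
    assert get_order(conn, 846, user_id=2) is None
```

If the ownership condition is removed from the query, the first test still passes and only the second one fails, which is exactly why suites that test with a single account never notice.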

OWASP ranks broken access control as the number one risk in its Top 10 list (2021), up from fifth place in the 2017 edition. IDORs are one of its most common forms. It's not a niche concern. It's the most prevalent category of security flaw in modern web applications.

Why AI Assistants Produce IDOR-Vulnerable Code by Default

The Happy Path Problem

When you prompt Claude, GPT, Copilot, or any AI assistant with "build me an API endpoint that returns an order by ID," it does exactly that. Faithfully. Correctly. And vulnerably.

The AI writes code that works. It handles the happy path — an authenticated user requests their own data, the database returns it, the response is formatted properly. In development and testing, this code is flawless because you're always testing with your own user account and your own data.

I've written about this broader pattern in the security debt that accumulates with AI-assisted development. IDOR is the single most dangerous manifestation of it, because the code looks and feels complete.

AI Optimizes for Function, Not Authorization

AI assistants are trained on millions of code examples — tutorials, Stack Overflow answers, documentation, open-source repos. The vast majority of this training data demonstrates functionality, not security. A tutorial teaching you how to build a REST API shows how to fetch a record by ID. It doesn't include authorization logic because that's not the point of the tutorial.

Authentication vs Authorization Gap

The AI reproduces this pattern with perfect fidelity.

Here's what an AI generated for a document retrieval endpoint on one of my projects:

    # What the AI wrote
    @app.get("/api/documents/{doc_id}")
    async def get_document(doc_id: int, current_user: User = Depends(get_current_user)):
        document = await db.fetch_one("SELECT * FROM documents WHERE id = :id", {"id": doc_id})
        if not document:
            raise HTTPException(status_code=404)
        return document

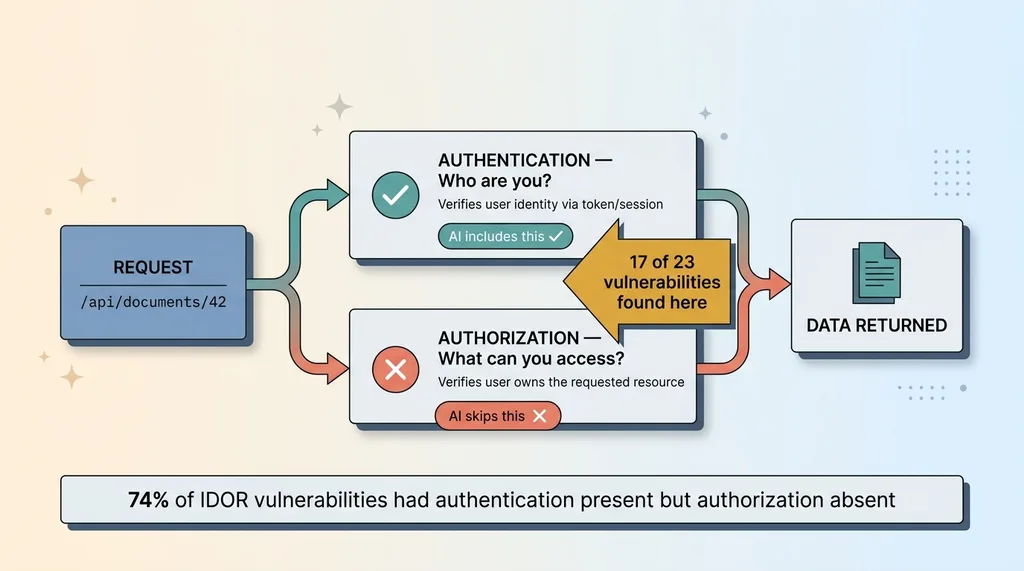

Notice something? It does authenticate the user — current_user is there. It just never uses it. The AI included authentication (verifying who you are) but skipped authorization (verifying what you can access). This is the most insidious pattern because it looks secure at a glance.

Here's what it should have been:

    # What it should have been
    @app.get("/api/documents/{doc_id}")
    async def get_document(doc_id: int, current_user: User = Depends(get_current_user)):
        document = await db.fetch_one(
            "SELECT * FROM documents WHERE id = :id AND user_id = :uid",
            {"id": doc_id, "uid": current_user.id}
        )
        if not document:
            raise HTTPException(status_code=404)
        return document

I saw this exact pattern — authentication present, authorization absent — in 17 of the 23 IDOR vulnerabilities I found. The AI was smart enough to include auth middleware. It just wasn't trained to think about what comes after authentication.

A configuration endpoint on another project had the same issue. The AI generated a perfectly functional settings retrieval route that accepted a config_id parameter. Any logged-in user could read any other user's configuration. The AI even added helpful error handling and input validation. It validated the format of the ID but not the ownership.

This isn't the AI being dumb. It's being literal. You asked for function. It delivered function. Security is a separate concern, and one that the training data almost never demonstrates in context.

The Ownership Verification Pattern That Fixes Everything

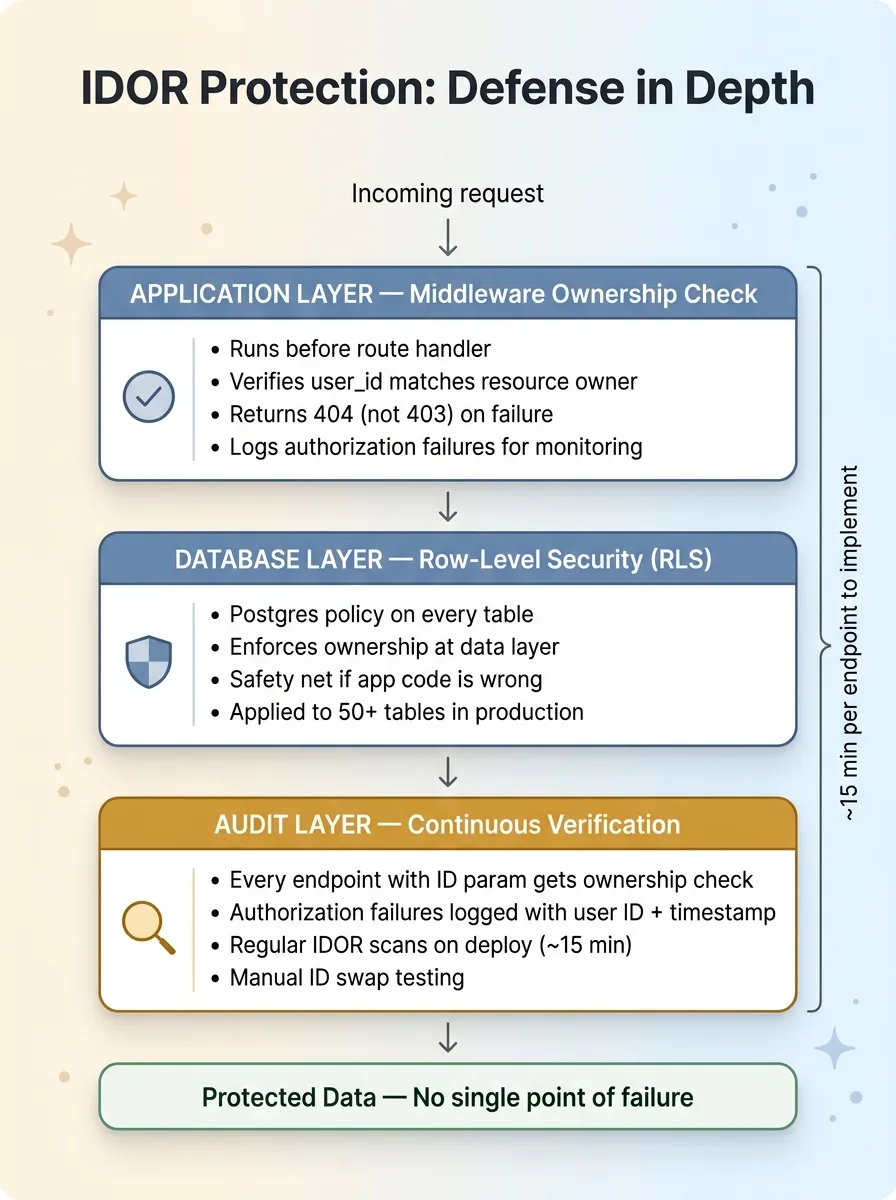

The fix is a single, unbreakable rule: never return data without verifying the requesting user owns it. Implementation happens at two layers, the application and the database, and the strongest setup uses both.

Middleware-Level Checks

Before any route handler runs, verify the authenticated user has a relationship to the requested resource:

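    from fastapi import HTTPException

    # Table names can't be bound as parameters, so allowlist them (names are illustrative)
    OWNED_TABLES = {"orders", "documents", "settings"}

    async def verify_resource_ownership(resource_type: str, resource_id: int, user_id: int):
        if resource_type not in OWNED_TABLES:
            raise HTTPException(status_code=404)
        query = f"SELECT id FROM {resource_type} WHERE id = :id AND user_id = :uid"
        result = await db.fetch_one(query, {"id": resource_id, "uid": user_id})
        if not result:
            raise HTTPException(status_code=404)  # 404, not 403
        return result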

This runs before your business logic. If the user doesn't own the resource, they get a 404 — not a 403. I'll explain why that matters in a moment.

Database-Level Checks With RLS

Row-Level Security in Postgres (and Supabase, which I use heavily) acts as a second line of defense at the data layer itself:

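    -- Policies have no effect until RLS is enabled on the table
    ALTER TABLE orders ENABLE ROW LEVEL SECURITY;

    -- auth.uid() is the Supabase helper that returns the current user's ID
    CREATE POLICY "Users can only see their own orders"
      ON orders FOR SELECT
      USING (user_id = auth.uid());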

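With RLS enabled, even if your application code is wrong (say someone deploys a new endpoint without an ownership check), the database itself will not return unauthorized rows. The data layer enforces what the application layer should. One caveat: policies only apply to roles that are subject to RLS. Superusers, roles with BYPASSRLS (Supabase's service role is one), and by default the table owner skip them, so the application has to query as the end user's role for the safety net to hold.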

I've implemented this across all 50+ tables in my production systems. The full implementation guide is in my RLS playbook covering that entire architecture.

The Belt-and-Suspenders Approach

Use both layers. Application-level checks give you clear error messages, logging, and the ability to track authorization failures (which often indicate probing attacks). Database-level RLS is the safety net that catches what the application misses.

Belt-and-Suspenders Defense Layers

Here's the checklist I use for every project:

- Every endpoint that accepts an ID parameter gets an ownership check. No exceptions.

- Every database table with user data gets RLS policies. No exceptions.

- Authorization failures are logged with the requesting user's ID, the resource they tried to access, and a timestamp.

- Return 404 for unauthorized resources, not 403. A 403 tells an attacker "this resource exists, you just can't have it." A 404 reveals nothing.
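The last two checklist items can share one helper. This is a rough sketch, assuming a plain (status, body) return shape rather than any particular framework's response object. The function name, logger name, and log format are hypothetical.

```python
import logging
from datetime import datetime, timezone

logger = logging.getLogger("authz")


def deny_as_not_found(user_id: int, resource_type: str, resource_id: int) -> tuple[int, dict]:
    """Log a failed ownership check and return a 404-style response.

    The body matches the one returned for a resource that truly does not
    exist, so the caller learns nothing about other users' data.
    """
    logger.warning(
        "authorization_failure user_id=%s resource=%s/%s at=%s",
        user_id,
        resource_type,
        resource_id,
        datetime.now(timezone.utc).isoformat(),
    )
    return 404, {"error": "Not found"}
```

The client sees an ordinary 404, while the log keeps the detail needed to spot one account walking through IDs it doesn't own.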

This takes about 15 minutes per endpoint to implement properly. For a project with 30 endpoints, that's under 8 hours. Compare that to the cost of a data breach.

How to Audit Your Own Codebase in an Afternoon

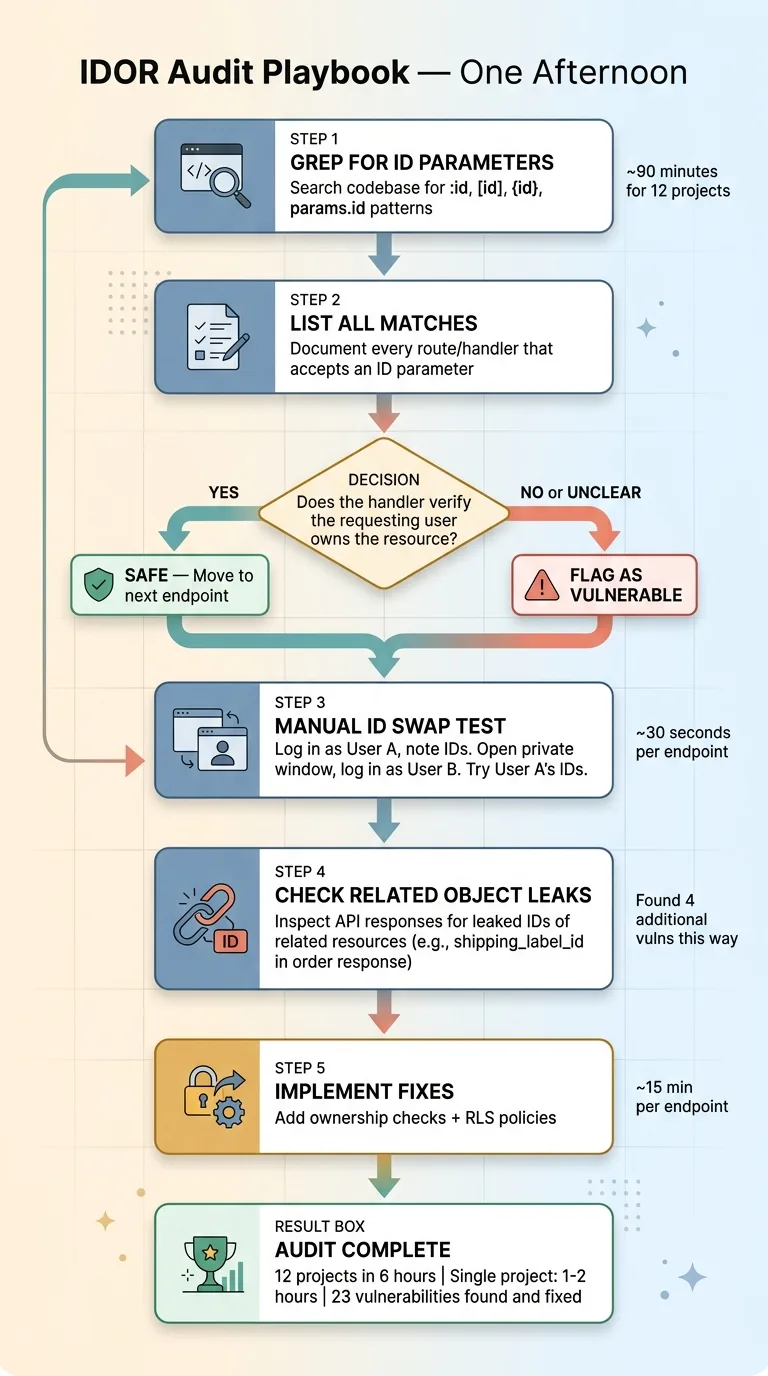

IDOR Audit Process Flowchart

The grep Approach

Start with a search across your codebase for every route or API handler that takes an ID parameter. In most frameworks, this means searching for patterns like :id, [id], {id}, or params.id.

List every match. For each one, answer one question: does this handler verify the requesting user owns the resource before returning it? If the answer is no, or if you have to read the code three times to figure out the answer, flag it.
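For anyone who would rather script the search than run grep by hand, here is one possible sketch. The regular expression covers the four patterns mentioned above and requires a leading slash on path parameters so that SQL placeholders like :id are not flagged. It is a heuristic, and find_id_routes is a hypothetical helper name.

```python
import re

# Path parameters such as /:id, /[id], /{doc_id}, plus params.id style lookups.
ID_PARAM = re.compile(
    r"/:\w*id\b|/\[\w*id\]|/\{\w*id\}|params\.\w*id\b",
    re.IGNORECASE,
)


def find_id_routes(source: str) -> list[tuple[int, str]]:
    """Return (line_number, line) for every line that mentions an ID parameter."""
    hits = []
    for number, line in enumerate(source.splitlines(), start=1):
        if ID_PARAM.search(line):
            hits.append((number, line.strip()))
    return hits
```

Running it over each source file (for example with pathlib's rglob and read_text) produces the list to review by hand. The script only finds candidates. Deciding whether each handler checks ownership is still a human job.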

In my audit, this step took about 90 minutes across 12 projects and surfaced 20 of the 23 vulnerabilities.

Manual Testing With ID Swapping

This is the most effective and lowest-tech approach. Log in as User A. Navigate through the app and note the IDs that appear in URLs and API responses. Open a private browser window. Log in as User B. Try every one of User A's IDs.

If User B can see User A's data, you have an IDOR vulnerability.
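The same swap can be scripted once you have a list of User A's IDs. The sketch below is one way to do it, using only the standard library. It assumes the API authenticates with a bearer token, which may not match your setup, and both function names are hypothetical.

```python
import urllib.error
import urllib.request
from typing import Callable, Iterable


def make_fetcher(url_template: str, bearer_token: str) -> Callable[[int], int]:
    """Build a function that requests url_template.format(id=...) as one user
    and returns the HTTP status code."""

    def fetch(resource_id: int) -> int:
        request = urllib.request.Request(
            url_template.format(id=resource_id),
            headers={"Authorization": f"Bearer {bearer_token}"},
        )
        try:
            with urllib.request.urlopen(request, timeout=10) as response:
                return response.status
        except urllib.error.HTTPError as err:
            return err.code  # urllib raises on 4xx and 5xx responses

    return fetch


def find_exposed_ids(
    ids_owned_by_a: Iterable[int],
    fetch_as_b: Callable[[int], int],
) -> list[int]:
    """Return the IDs belonging to user A that user B can fetch successfully."""
    return [rid for rid in ids_owned_by_a if 200 <= fetch_as_b(rid) < 300]
```

A call would look something like find_exposed_ids([846, 847], make_fetcher("https://staging.example.com/api/orders/{id}", token_b)), where the URL and token are placeholders. Any ID in the returned list is an endpoint to fix. Run it against a staging environment you own, not someone else's production.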

Of the 23 IDORs I found, 19 were exploitable with simple ID swapping that took under 30 seconds each. The other 4 required looking at API responses that leaked IDs of related objects — for example, an order response that included a shipping_label_id that could be used to access someone else's shipping label through a different endpoint.
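Leaked related IDs are easy to miss by eye in a large response. A small helper can list every ID-like field in a JSON payload so each one can be tried against its own endpoint. This is a sketch that assumes ID fields are named id or end in _id. That is a naming convention, not a guarantee.

```python
import json


def collect_id_fields(payload, prefix=""):
    """Recursively collect (path, value) pairs for keys named 'id' or ending in '_id'."""
    found = []
    if isinstance(payload, dict):
        for key, value in payload.items():
            path = f"{prefix}.{key}" if prefix else key
            if key == "id" or key.endswith("_id"):
                found.append((path, value))
            # Keep walking in case the value is a nested object or list.
            found.extend(collect_id_fields(value, path))
    elif isinstance(payload, list):
        for index, item in enumerate(payload):
            found.extend(collect_id_fields(item, f"{prefix}[{index}]"))
    return found


# Illustrative response body, not taken from a real project.
order_response = json.loads(
    '{"id": 847, "shipping_label_id": 5521, "items": [{"product_id": 12, "qty": 1}]}'
)
id_fields = collect_id_fields(order_response)
```

Each path in the result points at an identifier that another endpoint might accept, which is where the second-order IDORs tend to hide.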

Automated Scanning

For more sophisticated testing, tools like Burp Suite can automate ID parameter fuzzing across your entire API surface. But honestly, for most codebases under 50 endpoints, the manual approach is faster and more thorough.

My 12-project audit took about 6 hours total. A single project with 20-30 endpoints takes 1-2 hours. You can do this on a Saturday morning. Given what's at stake, you should.

Beyond IDOR: The Full API Security Stack

IDOR protection is the most critical access control fix most AI-generated codebases are missing, but it's one layer in a stack that needs several.

Input validation with Zod or Pydantic prevents malformed data from reaching your logic. Rate limiting slows enumeration attacks, where someone scripts through sequential IDs to harvest data. Content Security Policy headers restrict which scripts a page can run and where it can send data, which limits what an injected script can do. And proper error handling ensures your API doesn't leak information about resource existence or structure.
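To make the rate-limiting point concrete, here is a minimal in-memory sliding-window limiter keyed by user. It is a sketch for illustration only: the class name is hypothetical, the state lives in one process, and a production setup would more likely use a shared store or a gateway feature.

```python
import time
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most max_requests per window_seconds for each key."""

    def __init__(self, max_requests: int, window_seconds: float, clock=time.monotonic):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so tests can control time
        self._hits = defaultdict(deque)

    def allow(self, key: str) -> bool:
        now = self.clock()
        hits = self._hits[key]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True
```

A limit like this doesn't fix an IDOR, since one unauthorized read is still a breach. It only caps how fast a script can walk through sequential IDs.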

I cover CSP hardening, Zod validation, and rate limiting as the complementary security layers that sit alongside IDOR protection. Together, they form a defense-in-depth approach where no single failure is catastrophic.

Security is layers. IDOR protection is the foundation most codebases are missing. But it's not the ceiling.

Every AI-Generated Endpoint Needs a Security Review

AI writes code faster than any human. That's the point. I've written 22,000+ lines of AI-assisted Python, and it's the backbone of a business that's seen a 38% increase in revenue per employee and a 42% reduction in manual operations time. I'm not arguing against AI-generated code. I'm arguing for reviewing it.

The fix isn't to slow down. It's to build security review into the development workflow, not after it. Every endpoint gets an ownership check before it merges. Every table gets RLS before it goes to production. Every deploy gets a quick IDOR scan — 15 minutes with curl and two browser sessions.

This is the kind of operational discipline that separates systems that scale from systems that end up on a breach notification. The code AI writes is good. The code AI writes without human security review is a liability.

I've solved these problems across 12+ production systems. The patterns are repeatable. The audit process is documented. The architecture works at scale.

Thinking About AI for Your Business?

If this resonated — whether you're building with AI-generated code and not sure what's lurking in your endpoints, or you're scaling fast and want systems that don't become liabilities — I'd like to talk. I do free 30-minute discovery calls where we look at your operations and figure out where AI could actually move the needle, and where it might be creating risk you haven't seen yet.
