AI Education App Development: Building a Morse Code Trainer

How I built an AI education app that grades Morse code with voice input, adaptive learning, and 142 FCC exam questions. Real architecture decisions inside.

By Mike Hodgen

A friend of mine, a ham radio mentor (what the amateur radio world calls an "Elmer"), came to me with a straightforward problem. He wanted a better way to prepare for the FCC Technician license exam and couldn't find a study app that wasn't terrible. The exam pulls 35 random questions from a public pool of 142 across 10 subelements. Every existing app treats this like a flashcard problem: memorize, repeat, hope for the best. No adaptation, no audio training, and critically, no Morse code practice that actually listens to your attempts and tells you what you got wrong.

That last part is where AI education app development gets interesting.

Morse code proficiency — real proficiency, not just recognition — requires the Koch method. You learn characters at full speed (20 words per minute), starting with just two. When you hit 90% accuracy, you add a third. It's a proven pedagogical approach, but it was designed for human teachers who could listen to a student's keying, hear the mistakes, and adjust the next exercise. No app was doing that. They'd play a character, let you tap an answer, and tell you right or wrong. That's a quiz, not a tutor.

I saw a gap where AI could do something a static curriculum physically cannot: listen to a student's actual Morse code attempt, grade the timing and accuracy, identify specific confusion patterns, and adjust the difficulty in real time. Not AI bolted onto content. Content built around what AI makes possible.

So I built it.

Why Most AI Education Apps Are Just Flashcards With a Chat Wrapper

The Static Curriculum Problem

I looked at about a dozen ham radio study apps before building mine. Every single one followed the same pattern: present content, quiz the user, show a score. A few had added a chatbot where you could "ask questions about the material." That's not adaptive learning. That's a search bar with personality.

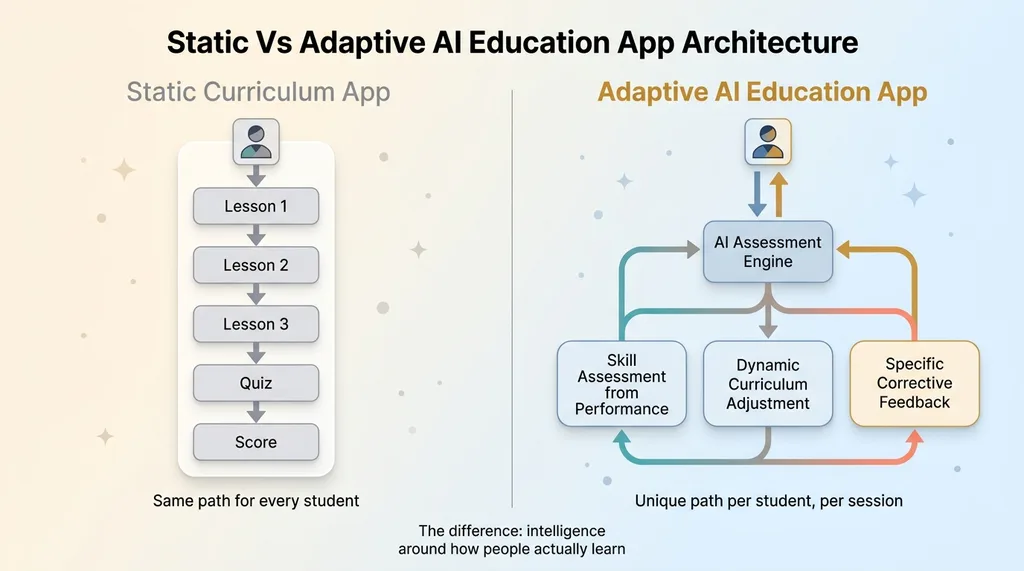

The fundamental problem is that static curricula teach to the average student. They present material in a fixed sequence, at a fixed pace, with fixed feedback. If you're faster than average on electrical fundamentals but slower on radio wave propagation, too bad. The app doesn't know and doesn't care.

This is true beyond ham radio. It's true for language learning apps, compliance training platforms, music education tools — basically any skill-based learning product that ships content instead of building intelligence around how people actually learn.

What Adaptive Actually Means

Real adaptive learning requires three capabilities that most "AI-powered" education apps don't have:

Static vs Adaptive AI Education App Architecture

- Skill assessment from actual performance. Not self-reporting ("How well do you know this topic?"), not quiz scores on multiple choice. The system needs to observe what the student does and infer what they know.

- Dynamic curriculum adjustment. The next exercise changes based on the last one. Not at the end of a module. Every single time.

- Specific, corrective feedback. Not "incorrect, try again." Feedback that identifies what went wrong and why.

Here's a concrete example from Morse code. The character D is dah-dit-dit. The character N is dah-dit. Students confuse them constantly because D is just N with an extra dit. A static app sees two wrong answers and replays the same lesson. An adaptive AI system identifies the D/N confusion pattern, generates targeted discrimination exercises that alternate D and N at increasing speeds, and doesn't move on until the student can reliably distinguish them.
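The D/N example can be made concrete with a small sketch. It assumes each attempt is logged as a target character plus the character the student produced. The function names, the two-error minimum, and the 2 WPM speed step are hypothetical choices, not the app's actual code.

```typescript
// Hypothetical sketch: find the pair of characters a student mixes up most,
// then build a drill that alternates that pair at rising speeds.
// D is "-.." and N is "-.", so D reads as N with an extra dit.

type Attempt = { target: string; answer: string };
type Pair = [string, string];
type DrillItem = { char: string; wpm: number };

// Count wrong answers per unordered pair, so D->N and N->D add up together.
function worstConfusionPair(attempts: Attempt[], minErrors = 2): Pair | null {
  const counts: Record<string, { pair: Pair; count: number }> = {};
  for (const { target, answer } of attempts) {
    if (target === answer) continue; // correct answers are not confusions
    const pair = [target, answer].sort() as Pair;
    const key = pair.join("/");
    const entry = counts[key] ?? { pair, count: 0 };
    entry.count += 1;
    counts[key] = entry;
  }
  let best: { pair: Pair; count: number } | null = null;
  for (const entry of Object.values(counts)) {
    if (entry.count >= minErrors && (best === null || entry.count > best.count)) {
      best = entry;
    }
  }
  return best === null ? null : best.pair;
}

// Alternate the two characters, raising speed by stepWpm each round.
function discriminationDrill(pair: Pair, startWpm: number, rounds: number, stepWpm = 2): DrillItem[] {
  const drill: DrillItem[] = [];
  for (let round = 0; round < rounds; round++) {
    const wpm = startWpm + round * stepWpm;
    drill.push({ char: pair[0], wpm }, { char: pair[1], wpm });
  }
  return drill;
}
```

Counting the pair regardless of direction matters here: a student who hears D and answers N, then hears N and answers D, has one confusion problem, not two unrelated mistakes.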

The Koch method already embodies progressive difficulty — it was brilliantly designed. But it was designed for a human tutor sitting next to you. AI can implement it at a per-student, per-character granularity that no human tutor could match across hundreds of users simultaneously.

That's the thesis for AI education app development done right. Not AI as a gimmick. AI as the implementation layer for proven teaching methods, running at scale.

The Architecture: Voice Input, AI Grading, and the Koch Method

Capturing Audio and Converting Morse Attempts

The user interface is deceptively simple. You hear a Morse code character. You tap a key or speak "dit" and "dah" to reproduce it. The app captures your attempt — timing, sequence, spacing.

This was harder than it sounds. Voice input latency varies wildly by device. An iPhone 14 processes audio differently than a four-year-old Android phone. A dit is supposed to be one time unit, a dah is three. But "supposed to" goes out the window when you're dealing with 80-200ms of device-specific audio processing lag.

I had to build a tolerance window for timing that adapts to the user's device and speed. The first few attempts calibrate the baseline. After that, the system knows that your dits average 120ms and your dahs average 340ms, and it grades relative to your established pattern rather than some theoretical ideal. This alone made a massive difference in grading accuracy.
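A minimal sketch of per-user calibration follows. It assumes key-down durations in milliseconds are already captured, and it uses a simple midpoint split between the user's average dit and dah as the adaptive tolerance window; that midpoint rule and all function names are illustrative assumptions. The 1200 / WPM unit length is the standard PARIS timing convention.

```typescript
// Minimal sketch, assuming key-down durations (ms) are already measured on the
// device. Device latency is deliberately left in: the baseline absorbs it.
// Function names here are hypothetical, not from the app.

type Kind = "dit" | "dah";
type Baseline = { ditMs: number; dahMs: number };

// Standard PARIS timing: one unit (one dit) lasts 1200 / WPM milliseconds.
function standardDitMs(wpm: number): number {
  return 1200 / wpm;
}

function mean(xs: number[]): number {
  return xs.reduce((sum, x) => sum + x, 0) / xs.length;
}

// Calibration attempts key a known target, so each element's kind is known.
function calibrate(samples: { kind: Kind; ms: number }[]): Baseline {
  const dits = samples.filter((s) => s.kind === "dit").map((s) => s.ms);
  const dahs = samples.filter((s) => s.kind === "dah").map((s) => s.ms);
  if (dits.length === 0 || dahs.length === 0) {
    throw new Error("calibration needs at least one dit and one dah");
  }
  return { ditMs: mean(dits), dahMs: mean(dahs) };
}

// Split at the midpoint of the user's own averages rather than at a fixed ideal.
function classifyElement(ms: number, b: Baseline): Kind {
  return ms < (b.ditMs + b.dahMs) / 2 ? "dit" : "dah";
}

// Turn a list of key-down durations into a dot/dash pattern like "-..".
function toPattern(durations: number[], b: Baseline): string {
  return durations.map((ms) => (classifyElement(ms, b) === "dit" ? "." : "-")).join("");
}
```

With dits averaging 120ms and dahs averaging 340ms, the split lands at 230ms, so a sluggish 200ms dit still reads as a dit. Against the theoretical 60ms dit at 20 WPM, that same element would look badly wrong.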

Building the AI Grading Pipeline

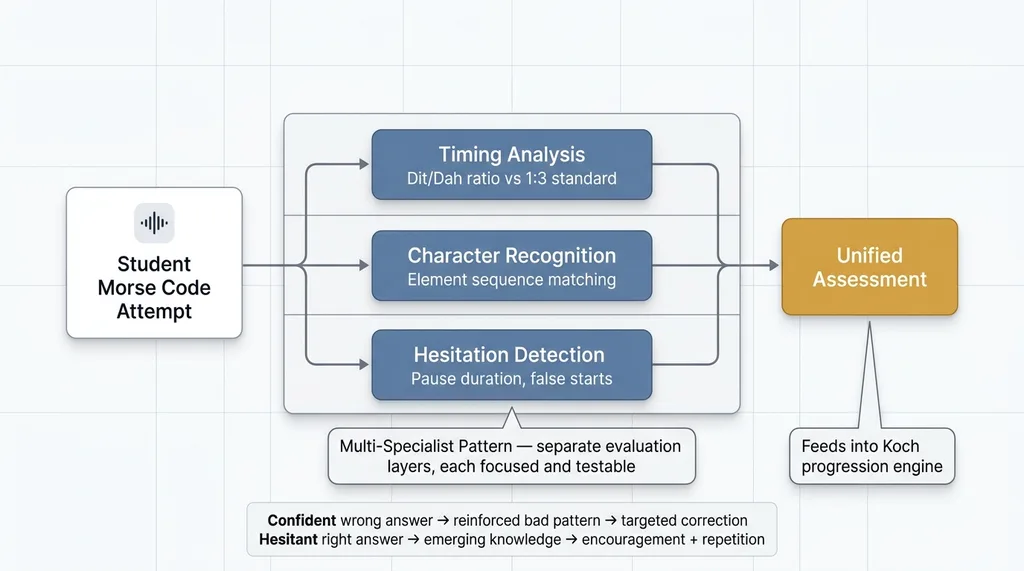

The grading isn't just right or wrong. Every attempt gets scored across three dimensions:

AI Grading Pipeline Architecture

- Timing accuracy: How close were your dit/dah ratios to the standard 1:3?

- Character recognition: Did the sequence of elements match the target character?

- Hesitation patterns: How long did you pause before starting? Did you false-start?

This matters because a confident wrong answer and a hesitant right answer indicate very different things about what the student knows. The confident mistake means a reinforced bad pattern that needs targeted correction. The hesitant correct answer means emerging knowledge that needs encouragement and repetition.

The architecture follows a multi-specialist pattern rather than sending everything through a single monolithic prompt. The timing analysis, character matching, and hesitation detection are separate evaluation layers that feed into a unified assessment. This keeps each component focused and testable.
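A sketch of that layout with three separate evaluators and one combining step. It assumes elements have already been classified as dits or dahs. The linear timing score, the 800ms slow-start cutoff, and the four category labels are illustrative assumptions, not the app's real scoring.

```typescript
// Hypothetical sketch of three separate evaluators feeding one assessment.
// Assumes each element was already classified as a dit or dah; the 800 ms
// slow-start cutoff is an illustrative value, not a measured one.

type Kind = "dit" | "dah";
type Attempt = {
  targetPattern: string; // e.g. "-.." for D
  elements: { kind: Kind; ms: number }[];
  reactionMs: number; // pause before the first element
  falseStarts: number;
};
type Category = "confident-correct" | "hesitant-correct" | "confident-wrong" | "hesitant-wrong";

const avg = (xs: number[]): number => xs.reduce((s, x) => s + x, 0) / xs.length;

// Evaluator 1: timing. 1.0 when dahs are exactly 3x dits, falling linearly to 0.
function timingAccuracy(a: Attempt): number {
  const dits = a.elements.filter((e) => e.kind === "dit").map((e) => e.ms);
  const dahs = a.elements.filter((e) => e.kind === "dah").map((e) => e.ms);
  if (dits.length === 0 || dahs.length === 0) return 1; // no ratio to judge
  const ratio = avg(dahs) / avg(dits);
  return Math.max(0, 1 - Math.abs(ratio - 3) / 3);
}

// Evaluator 2: character recognition. Does the element sequence match?
function characterMatch(a: Attempt): boolean {
  const pattern = a.elements.map((e) => (e.kind === "dit" ? "." : "-")).join("");
  return pattern === a.targetPattern;
}

// Evaluator 3: hesitation. A slow start or any false start counts.
function hesitated(a: Attempt, slowStartMs = 800): boolean {
  return a.reactionMs > slowStartMs || a.falseStarts > 0;
}

// Unified assessment: a confident miss and a hesitant hit mean different things.
function assess(a: Attempt): { timing: number; correct: boolean; category: Category } {
  const correct = characterMatch(a);
  const hesitant = hesitated(a);
  const category: Category = correct
    ? hesitant ? "hesitant-correct" : "confident-correct"
    : hesitant ? "hesitant-wrong" : "confident-wrong";
  return { timing: timingAccuracy(a), correct, category };
}
```

Because each evaluator is a pure function over the same attempt, each one can be unit-tested in isolation, which is the testability benefit the multi-specialist split is after.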

Implementing Koch Method Progression

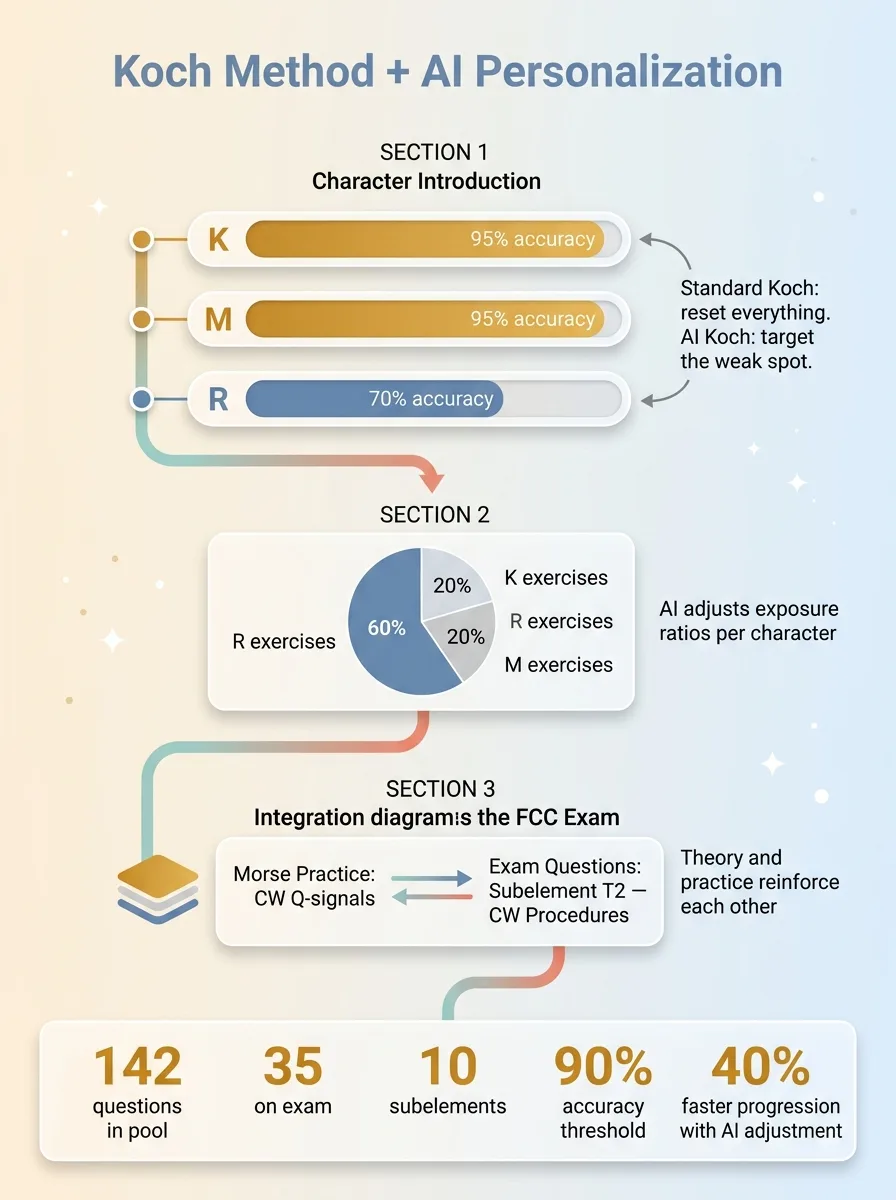

The Koch method implementation starts with two characters at 20 WPM. When accuracy hits 90% across both characters over a meaningful sample (not just two attempts — at least 20 presentations per character), a third character gets added.
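The advancement gate can be sketched in a few lines. Two assumptions are flagged in the comments: "90% across both characters" is read as every active character individually at or above 90%, and the character order is one widely used Koch sequence (the LCWO ordering), truncated for brevity.

```typescript
// Minimal sketch of the advancement gate. Assumptions: "90% across both
// characters" means every active character is at or above 90%, and
// KOCH_ORDER is one widely used Koch sequence (LCWO ordering), truncated.

type CharStats = { presented: number; correct: number };

const KOCH_ORDER = ["K", "M", "R", "S", "U", "A", "P", "T", "L", "O", "W", "I"];

function readyToAdvance(
  active: string[],
  stats: Record<string, CharStats>,
  minPresentations = 20,
  threshold = 0.9,
): boolean {
  return active.every((ch) => {
    const s = stats[ch];
    // No data, or too few presentations, means not ready yet.
    return s !== undefined && s.presented >= minPresentations && s.correct / s.presented >= threshold;
  });
}

// Add the next character in Koch order, assuming `active` is a prefix of KOCH_ORDER.
function nextCharacterSet(active: string[], stats: Record<string, CharStats>): string[] {
  if (!readyToAdvance(active, stats)) return active;
  const next = KOCH_ORDER[active.length];
  return next === undefined ? active : [...active, next];
}
```

The minimum-presentations check is what keeps two lucky answers from counting as 100% accuracy.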

Koch Method AI-Enhanced Progression

Here's where the AI does something the standard Koch method can't. It tracks per-character accuracy independently. So if you nail K and M at 95%, but when R gets introduced your R accuracy is only 70% while K and M stay solid, the system increases R exposure without resetting your K/M progress. You might get 60% R exercises, 20% K, and 20% M until R catches up.
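One way to get that kind of skewed mix is to weight each character by how far it sits below the 90% target, then sample from the resulting shares. This is a sketch under assumptions: the deficit-based weighting and the boost factor of 10 are illustrative choices, picked so that the example above (K and M at 95%, R at 70%) comes out at roughly 20% / 20% / 60%.

```typescript
// Minimal sketch, assuming exposure is driven by how far each character sits
// below the 90% target. boost = 10 is a tuning assumption picked so that the
// K/M at 95%, R at 70% example lands on roughly 20% / 20% / 60%.

function exposureShares(accuracy: Record<string, number>, target = 0.9, boost = 10): Record<string, number> {
  const weights: Record<string, number> = {};
  let total = 0;
  for (const ch of Object.keys(accuracy)) {
    // Every character keeps a floor weight of 1, so solid ones still get review.
    const w = 1 + boost * Math.max(0, target - accuracy[ch]);
    weights[ch] = w;
    total += w;
  }
  const shares: Record<string, number> = {};
  for (const ch of Object.keys(weights)) shares[ch] = weights[ch] / total;
  return shares;
}

// Weighted random pick; rng is injectable so the choice is testable.
function pickNextCharacter(shares: Record<string, number>, rng: () => number = Math.random): string {
  const chars = Object.keys(shares);
  let r = rng();
  for (const ch of chars) {
    r -= shares[ch];
    if (r < 0) return ch;
  }
  return chars[chars.length - 1]; // guards against floating-point rounding near 1
}
```

The weights only change what gets presented next. The per-character accuracy records stay untouched, which is why K and M progress isn't reset while R catches up.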

I also mapped Koch progression to the FCC exam structure. The 142-question pool is organized into 10 subelements. The AI connects Morse practice to relevant theory — so when you're learning the characters for common Q-signals used in CW (continuous wave) operation, the app simultaneously surfaces related exam questions about CW procedures from subelement T2. Morse practice and theory study reinforce each other instead of living in separate silos.

The numbers that drive progression: 142 questions in the pool, 35 on the actual exam, 10 subelements, 90% accuracy threshold for Koch character advancement. Everything measurable, everything tracked per-student.

What I Got Wrong on the First Build (And How I Fixed It)

The Latency Problem With Real-Time Grading

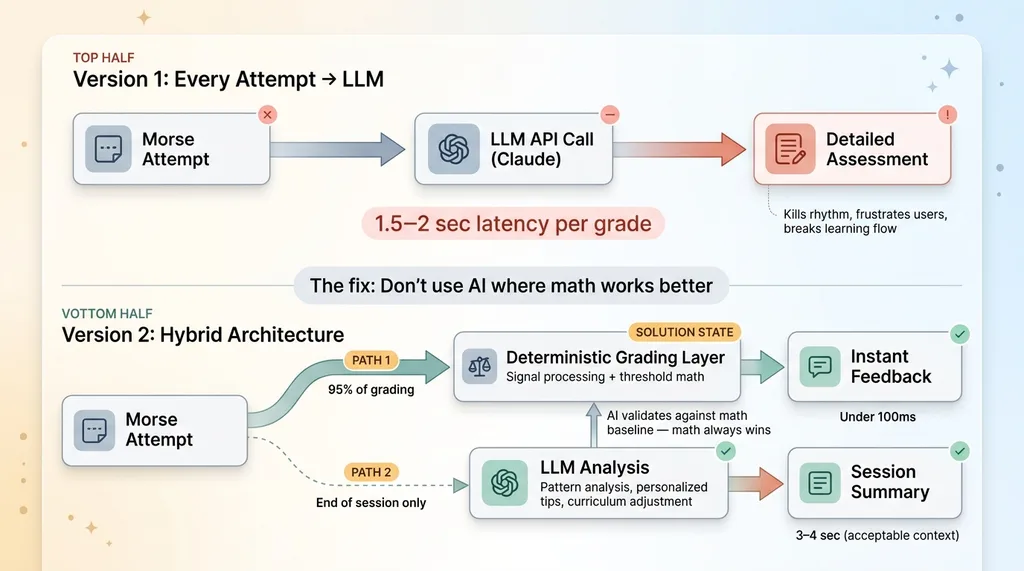

The first version was architecturally elegant and practically unusable.

Latency Fix: Deterministic vs LLM Grading

I sent every Morse code attempt to an LLM for grading. Claude would analyze the timing data, compare it against the target character, and return a detailed assessment. The problem: 1.5 to 2 seconds of latency per grade.

For normal app interactions, two seconds is tolerable. For something that requires rhythm and flow — where you're keying dit-dah-dit and need to immediately know if you got it right — two seconds is an eternity. It completely killed the learning experience. Students would lose their timing, get frustrated, and disengage.

The fix was obvious in hindsight: don't use AI where math works better. I built a deterministic grading layer for timing accuracy and character recognition. No LLM needed. Just signal processing and threshold comparisons. This handles 95% of per-attempt grading and runs in under 100ms.

The LLM only gets involved at the end of a practice session, where it analyzes patterns across all attempts, generates personalized tips, and adjusts the curriculum for next time. The system also checks the LLM's pattern analysis against the deterministic baseline scores. If the AI's assessment contradicts the math, the math wins and the AI's analysis gets flagged for review.
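A sketch of the "math wins" check. It assumes the session-end LLM response has already been parsed into per-character verdicts; the `LlmClaim` shape and the mastered/struggling labels are hypothetical, not a real API, and the 0.9 cutoff simply reuses the Koch threshold.

```typescript
// Hypothetical sketch of "the math wins". Assumes the session-end LLM response
// has already been parsed into per-character verdicts; LlmClaim is an
// illustrative shape, not a real API.

type Verdict = "mastered" | "struggling";
type LlmClaim = { char: string; verdict: Verdict };
type Reconciled = { char: string; verdict: Verdict; flagged: boolean };

// Deterministic verdict from per-attempt accuracy (0.9 mirrors the Koch threshold).
function mathVerdict(accuracy: number, threshold = 0.9): Verdict {
  return accuracy >= threshold ? "mastered" : "struggling";
}

function reconcile(claims: LlmClaim[], accuracy: Record<string, number>): Reconciled[] {
  return claims.map((claim) => {
    const acc = accuracy[claim.char];
    // No deterministic data for this character: keep the claim but flag it.
    if (acc === undefined) return { ...claim, flagged: true };
    const truth = mathVerdict(acc);
    // Math overrides the LLM; any disagreement is flagged for review.
    return { char: claim.char, verdict: truth, flagged: truth !== claim.verdict };
  });
}
```

Keeping the deterministic score as ground truth means a hallucinated "you've mastered R" can never promote a student past a character they're still missing.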

This cut per-attempt latency from 1.5-2 seconds to under 100ms. The session-end analysis takes 3-4 seconds, but the user is already done practicing — they're reviewing their results. Totally different context.

Over-Engineering the Feedback Loop

Second mistake: the AI feedback was way too verbose. After every single character attempt, users got a paragraph explaining their timing ratios, common confusion patterns, and suggestions for improvement.

Nobody wants that. They want a green checkmark or a red X. Maybe a one-line tip: "Your dit was too long — think snappier." That's it. During active practice, less is more.

I saved the detailed analysis for session summaries. End-of-session, you get a breakdown: characters mastered, characters struggling, specific patterns the AI noticed ("You consistently add an extra dit when transitioning from E to I — slow down between characters"), and what tomorrow's session will focus on.
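The split between in-practice feedback and the review screen can be sketched as two separate functions. The 30% "dit too long" tolerance, the tip wording, and the data shape are hypothetical; the point is only that live feedback stays to a mark plus at most one short line, while the breakdown waits for the summary.

```typescript
// Hypothetical sketch: terse feedback during practice, detail after the session.
// The 30% tolerance and the tip text are illustrative assumptions.

type GradedAttempt = { char: string; correct: boolean; ditMs: number; baselineDitMs: number };

// During practice: a mark plus at most one short tip.
function liveFeedback(a: GradedAttempt, tolerance = 0.3): string {
  if (a.correct) return "✓";
  if (a.ditMs > a.baselineDitMs * (1 + tolerance)) return "✗ Dit too long. Think snappier.";
  return "✗";
}

// After the session: which characters are solid and which need work.
function sessionSummary(attempts: GradedAttempt[], threshold = 0.9): { mastered: string[]; struggling: string[] } {
  const tally: Record<string, { n: number; ok: number }> = {};
  for (const a of attempts) {
    const t = tally[a.char] ?? { n: 0, ok: 0 };
    t.n += 1;
    if (a.correct) t.ok += 1;
    tally[a.char] = t;
  }
  const mastered: string[] = [];
  const struggling: string[] = [];
  for (const [char, t] of Object.entries(tally)) {
    (t.ok / t.n >= threshold ? mastered : struggling).push(char);
  }
  return { mastered, struggling };
}
```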

The lesson for anyone doing AI education app development: AI should be invisible during the learning flow and visible during the review flow. If the student is thinking about the AI, you've already failed.

Shipping a Real Product: Expo, RevenueCat, and the Monetization Math

Why Expo for Cross-Platform

I built this in Expo (React Native) to ship one codebase to iOS and Android. For a niche educational app, maintaining two native codebases would be financial suicide. Expo handles about 95% of what I need; the timing-critical audio input required some custom native module work.

The Subscription Model That Makes Sense

Monetization uses RevenueCat for subscription management. I've written about the full technical implementation of RevenueCat with Stripe separately, but here's the thinking behind the model:

Subscription Monetization and Unit Economics

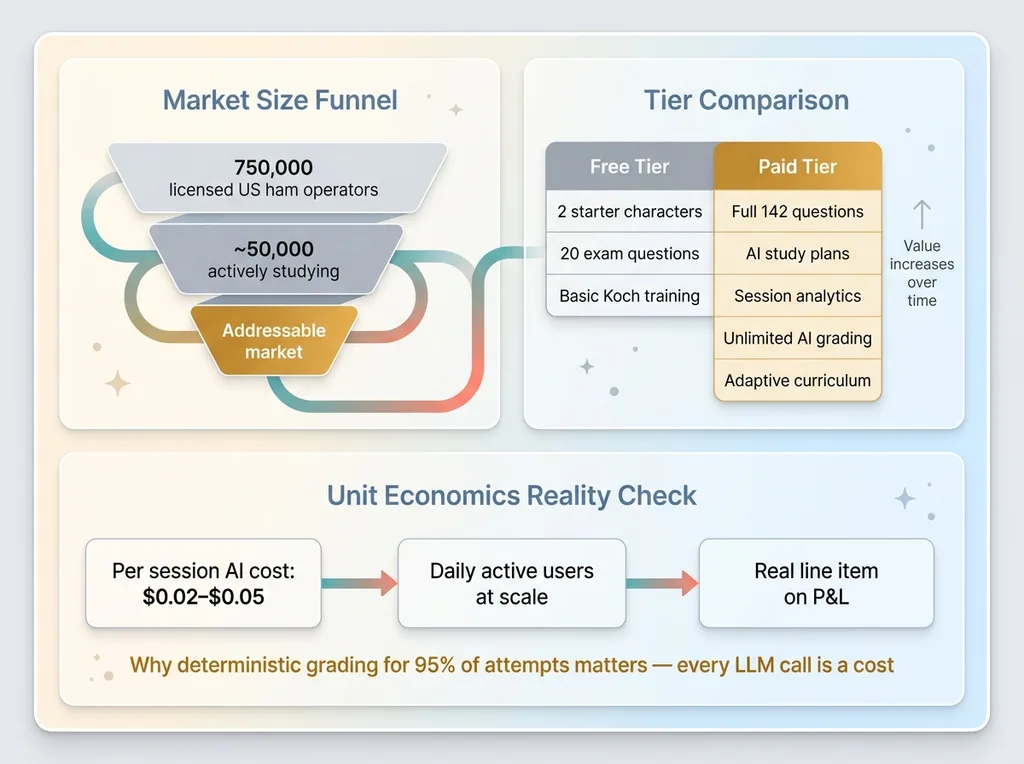

Free tier: Basic Koch training with two starter characters and access to 20 exam practice questions. Enough to see if the app works for you.

Paid tier: Full 142-question pool, AI-personalized study plans, detailed session analytics, unlimited Morse practice with AI grading, and curriculum adjustment that gets smarter the longer you use it.
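The free/paid split reduces to a couple of pure gating functions. This is a minimal sketch: it assumes the tier value is produced elsewhere by the app's RevenueCat entitlement check (not shown, and no RevenueCat calls are assumed here), and exposing the first 20 questions to free users is an illustrative choice.

```typescript
// Minimal sketch of tier gating. Assumption: the tier value comes from the
// app's RevenueCat entitlement check (not shown); exposing the first 20
// questions to free users is also an assumption.

type Tier = "free" | "paid";

const FREE_QUESTION_LIMIT = 20;
const FREE_KOCH_CHARACTERS = 2;

// Free users see a fixed slice of the pool; paid users see all of it.
function accessibleQuestions<Q>(pool: Q[], tier: Tier): Q[] {
  return tier === "paid" ? pool : pool.slice(0, FREE_QUESTION_LIMIT);
}

// Free users stay on the two starter Koch characters.
function allowedKochCharacters(unlocked: string[], tier: Tier): string[] {
  return tier === "paid" ? unlocked : unlocked.slice(0, FREE_KOCH_CHARACTERS);
}
```

Keeping the gate as pure functions over a tier value means the subscription SDK stays at the edge of the app, and the curriculum logic can be tested without it.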

Subscription over one-time purchase makes sense because the AI genuinely improves the study plan over time. Week one, it's learning your patterns. Week four, it knows your weak spots better than you do. That's a natural subscription value proposition — the product gets more valuable with continued use, not less.

The market math is honest. There are roughly 750,000 licensed amateur radio operators in the US. Maybe 50,000 are actively studying for an exam at any given time. This is a niche. Pricing needs to be accessible — a few dollars a month — but the AI costs are real. Each detailed session analysis runs $0.02-0.05 in LLM API calls. At scale with daily active users, those pennies become a real line item. The margin math has to work or the product doesn't survive.
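The margin math is easy to sanity-check. The $0.02-0.05 per session analysis comes from the paragraph above; one session per day and a $4.99 monthly price are hypothetical inputs, and app store fees are ignored. Everything is kept in integer cents to avoid floating-point drift.

```typescript
// Back-of-envelope unit economics, kept in integer cents to avoid float drift.
// The 2-5 cents per analysis comes from the text; sessions per month and the
// subscription price are hypothetical inputs. App store fees are ignored.

function monthlyLlmCostCents(sessionsPerMonth: number, centsPerSession: number): number {
  return sessionsPerMonth * centsPerSession;
}

// Fraction of one subscriber's monthly price consumed by LLM calls.
function llmCostShare(sessionsPerMonth: number, centsPerSession: number, priceCents: number): number {
  return monthlyLlmCostCents(sessionsPerMonth, centsPerSession) / priceCents;
}

// A daily user (30 sessions) costs 60-150 cents a month in analysis calls.
const low = monthlyLlmCostCents(30, 2);
const high = monthlyLlmCostCents(30, 5);
// At a hypothetical $4.99/month, the worst case is roughly 30% of revenue.
const worstShare = llmCostShare(30, 5, 499);
console.log(low, high, worstShare.toFixed(2));
```

That is why "a few dollars a month" and "the AI costs are real" pull against each other: a heavy daily user can eat close to a third of the subscription before any other cost.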

The Broader Pattern: Why AI-Personalized Learning Beats Static Curricula

Morse code is a small example of a huge pattern. Every domain with skill progression — music, language, trade skills, compliance training, medical education — has the same structural problem: static curricula teach to the average student. AI can teach to the individual.

The architecture is transferable. Capture performance. Grade it, with deterministic math where math works and AI where it doesn't. Adjust the curriculum. Deliver personalized feedback. Repeat. Whether the skill is Morse code, welding technique analysis from video, or pharmaceutical dosage calculation, the pattern holds.
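The loop can be expressed as a small generic interface that each domain fills in. This is a hypothetical sketch: the `SkillDomain` name, its three methods, and the repetition-count plan in the Morse instance are illustrative, not the app's actual types.

```typescript
// Hypothetical sketch of the transferable loop as a generic interface. Each
// domain supplies its own attempt format, grader, and curriculum rule.

interface SkillDomain<Attempt, Score, Plan> {
  grade(attempt: Attempt): Score; // math where it works, a model where it doesn't
  adjust(plan: Plan, score: Score): Plan; // the next exercise depends on the last one
  feedback(score: Score): string; // short and corrective
}

function runStep<A, S, P>(domain: SkillDomain<A, S, P>, plan: P, attempt: A): { plan: P; message: string } {
  const score = domain.grade(attempt);
  return { plan: domain.adjust(plan, score), message: domain.feedback(score) };
}

// Morse instance: the plan is the number of extra repetitions owed per pattern.
type MorseAttempt = { target: string; keyed: string };
type MorseScore = { target: string; ok: boolean };
type RepetitionPlan = Record<string, number>;

const morse: SkillDomain<MorseAttempt, MorseScore, RepetitionPlan> = {
  grade: (a) => ({ target: a.target, ok: a.keyed === a.target }),
  // A miss adds two repetitions; a hit removes one, never going below zero.
  adjust: (plan, s) => ({ ...plan, [s.target]: Math.max(0, (plan[s.target] ?? 0) + (s.ok ? -1 : 2)) }),
  feedback: (s) => (s.ok ? "✓" : "✗"),
};
```

Swapping domains means writing a new `grade`, `adjust`, and `feedback`; `runStep` and everything around it stay the same.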

The numbers from the Morse code app bear this out: users following AI-adjusted Koch progression hit 90% accuracy on new characters 40% faster than users following the standard fixed schedule. That's not because the AI is smarter than the Koch method. The Koch method is brilliant. It's because the AI applies the Koch method more precisely than a fixed-schedule app can — adjusting character exposure ratios, catching confusion patterns early, and never wasting a student's time on skills they've already locked in.

This is what AI education app development should look like. AI as the implementation layer for proven pedagogical methods. Not a replacement for them.

What This Project Taught Me About Building AI Products People Actually Use

The biggest lesson from this build: the AI is never the product. The product is a person passing their ham radio exam. The AI is the mechanism that gets them there faster and with less frustration.

Every AI project I take on — whether it's a Morse code trainer, the product pipeline for my DTC fashion brand, or a competitive intelligence system — starts with the same question: what's the human outcome? Then I work backward to figure out where AI actually helps versus where it just adds complexity.

Most companies get this exactly inverted. They start with "we should use AI" and go looking for problems to apply it to. That's why most AI projects fail. They're solutions in search of problems, built by people who've never shipped a product that real users depend on.

I built this app over a weekend. Not because I'm fast, but because I've built the pattern dozens of times. The multi-specialist architecture, the grading pipeline, the curriculum adjustment logic, the quality control layer — these are patterns I've deployed across 15+ systems. The Morse code domain was new. The engineering approach wasn't.

That's the difference between someone who talks about AI and someone who ships it.

Thinking About AI for Your Business?

If any of this resonated — whether you're sitting on a product idea, a training problem, or a business process where adaptive intelligence could make a real difference — I'd like to hear about it. I do free 30-minute discovery calls where we look at your specific operations and figure out where AI could actually move the needle. No slides. No pitch deck. Just an honest conversation about what's possible.

Book a free 30-minute strategy call with Hodgen.AI.