The 5 Biggest Fears CEOs Have About AI (And Why 4 Are Wrong)

CEO fears about AI are common but mostly misplaced. I break down the 5 biggest concerns with real data and explain which one you should actually worry about.

By Mike Hodgen

I've had some version of the same conversation about 40 times now.

A CEO or COO gets on a call with me. They're smart, skeptical, and usually a little frustrated. They know AI is changing things. Their board is asking about it. A competitor just launched something that made them nervous. But when I ask what's holding them back, the same five fears come up almost every time.

These aren't irrational fears. The World Economic Forum and recent industry surveys put hard numbers on them: 72% of business leaders cite a knowledge gap as their primary barrier to AI adoption. 60% worry about implementation errors. 60% worry about job displacement. 55% say cost is the blocker. And 52% flag data privacy concerns.

CEO fears about AI are rational because the market has earned them. There's been a flood of overpromising vendors, half-baked pilot projects, and consulting firms that deliver slide decks instead of working systems. If you've been burned — or watched a peer get burned — skepticism is the sane response.

But here's what I've learned building 29 AI systems for my own DTC fashion brand: four of these fears are based on outdated assumptions or bad information. They made sense in 2022. They don't hold up in 2025.

The fifth fear — data privacy — is the one most CEOs actually underweight. And it's the one that can sink your company.

This isn't a dismissive piece. I'm not going to tell you everything is fine and you should just trust the technology. I'm going to tell you what I've seen after deploying AI across product creation, pricing, SEO, customer service, and content — and let you decide which fears deserve your attention and which ones are costing you by keeping you stuck.

If you're not sure where your company stands, a good starting point is assessing whether your business is actually ready for AI.

Fear #1: AI Costs Too Much (55% of CEOs)

What CEOs Think AI Costs

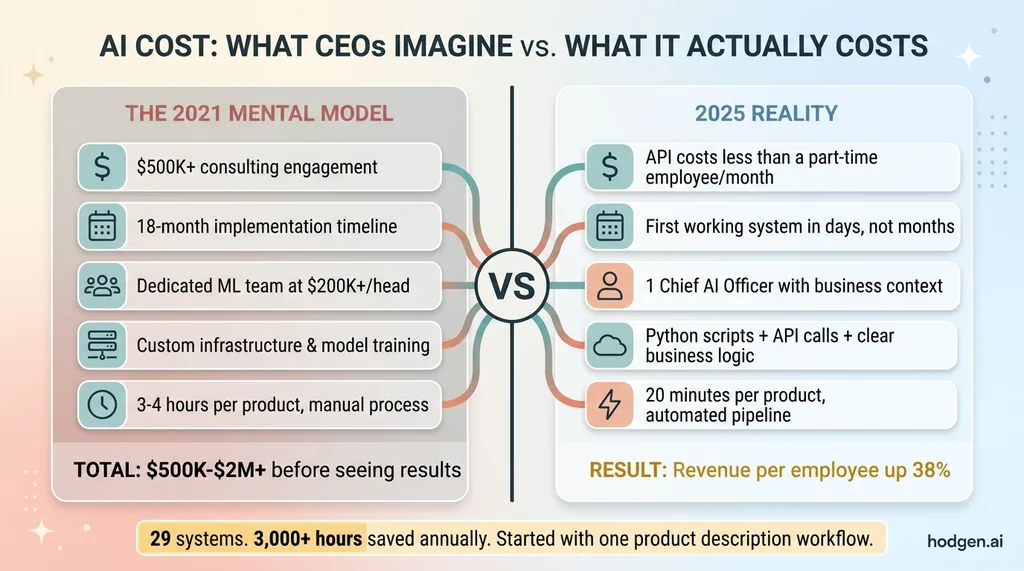

The mental model most CEOs carry is enterprise AI circa 2021. Half-million dollar consulting engagements. Eighteen-month timelines. A dedicated machine learning engineering team burning $200K+ per head. Custom model training on proprietary data sets. Infrastructure costs that make your CTO cry.

That was real. And for Fortune 500 companies building custom foundation models, some of those costs still exist. But that's not what AI adoption looks like for a $5M-$50M company in 2025.

What It Actually Costs

My entire multi-model architecture — Claude for content and reasoning, Gemini for image generation, custom chaining for cost efficiency — runs on API calls that cost less per month than a single part-time employee. Not less than a senior engineer. Less than a part-time admin.

AI Cost: 2021 Mental Model vs. 2025 Reality


The product creation pipeline at my DTC fashion brand went from 3-4 hours per product to 20 minutes. Concept, description, SEO optimization, pricing classification, live on the site. That system wasn't a $500K project. It was Python scripts, API calls, and clear business logic.

I built an AI-powered sales pipeline in a weekend. Not a proof of concept — a working system.

The real cost question in 2025 isn't "can we afford AI?" It's "can we afford not to use it?"

After deploying AI across my operations, revenue per employee went up 38%. If a $10M company could capture the same uplift at the same headcount, leaving it on the table means forgoing roughly $3.8M in potential output every year. Not because you're doing anything wrong, but because your processes are running at a fraction of what they could.
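The $3.8M figure is plain multiplication, and it rests on one assumption worth stating: that a 38% revenue-per-employee uplift transfers to another company with headcount held flat. Here is a minimal sketch of that arithmetic. The function name is illustrative, and the uplift you would actually see is something to measure, not assume.

```python
def forgone_output(annual_revenue: float, uplift: float) -> float:
    """Annual output left uncaptured if a revenue-per-employee uplift is skipped.

    Assumes headcount stays flat, so a per-employee uplift scales total
    revenue by the same fraction.
    """
    return annual_revenue * uplift


# $10M company, 38% uplift (the article's figure; an assumption for any other business)
print(f"${forgone_output(10_000_000, 0.38):,.0f}")  # prints $3,800,000
```

Swap in your own revenue and a more conservative uplift (say 0.10) to see how sensitive the number is to that one input.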

You don't need a six-figure budget to start. You need clarity on which process to fix first. I wrote a whole piece on the AI systems every small business should build first — most of them cost $0-500/month in tooling.

Fear #2: We Don't Have the Expertise (72% of CEOs)

The Knowledge Gap Is Real — But It's Not What You Think

This is the most common fear by a wide margin, and it's the most misunderstood.

CEOs think they need to hire ML engineers. Build a data science team. Understand transformer architecture, fine-tuning, RAG pipelines, vector databases. They picture a whiteboard covered in math they haven't seen since college.

They don't need any of that.

The expertise gap isn't technical — it's strategic. You don't need to know how a language model works any more than you need to know how a combustion engine works to run a logistics company. What you need is someone who can look at your operations, identify where AI creates real value, and then build and integrate those systems into your existing workflow.

That's what a Chief AI Officer does. Not a consultant who hands you a strategy document and disappears. Someone who sits inside your operations and builds. I wrote about what that role actually looks like if you're curious.

Here's a concrete example. My AI toolkit has 22,000+ lines of custom Python in production. That wasn't written by a team of engineers at a software company. It was written by one person — me — with deep business context and the right AI tools accelerating the development. I know what each system needs to accomplish because I understand the business problems they're solving.

The knowledge gap closes fast when you have someone who speaks both languages: business operations and AI implementation. Without that bridge, yeah, it feels impossible. With it, you go from "we don't know where to start" to a working system in weeks, not quarters.

The risk isn't that your team doesn't know AI. The risk is that you wait for perfect knowledge before acting. Perfect knowledge doesn't exist in a field that changes every three months. What exists is experienced judgment about what works, what doesn't, and where to start.

Fear #3: We'll Implement It Wrong (60% of CEOs)

Why This Fear Feels True

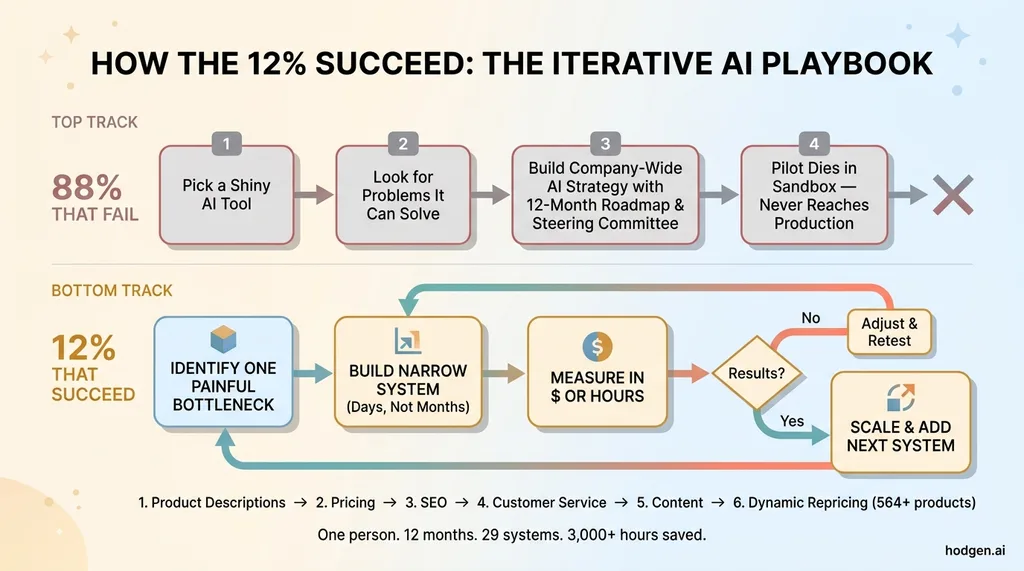

This fear has the most evidence behind it. 88% of AI projects fail. That's not a scare tactic — that's the data. If you want the full breakdown on why, I wrote a deep dive on what separates the 12% that succeed from the 88% that don't.

So yes — most companies do implement AI wrong. The fear is earned.

But the failure pattern isn't "AI doesn't work." It's "companies approach AI backwards." They pick a shiny tool, then look for a problem it can solve. Or they outsource to a consultancy that delivers an impressive pilot in a sandbox environment that never survives contact with real operations. Or they try to boil the ocean — "let's build an AI strategy for the whole company" — and get paralyzed by scope.

What the 12% Do Differently

The companies that succeed treat AI implementation like any other operational improvement: hypothesis, test, measure, scale.

The 12% Success Pattern: Iterative AI Implementation


My first AI system wasn't a grand strategy. It was automating product descriptions. One painful, repetitive task that ate hours every week. Once that worked, I moved to pricing. Then SEO. Then customer service triage. Then content generation. Then dynamic repricing across 564+ products.

Twenty-nine systems later, I'm saving 3,000+ hours annually. But it started with one system solving one problem.

The implementation fear is overblown not because implementation is easy — it's genuinely hard to do well. It's overblown because the right approach dramatically reduces the risk. Start with a specific operational bottleneck. Build a narrow system. Measure the result in dollars or hours. Then decide whether to expand.
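"Measure the result in dollars or hours" can be as simple as the sketch below. It uses the product-pipeline timings mentioned earlier (3 hours at the low end, down to 20 minutes per product). The volume of 100 products and the $40 hourly cost are made-up placeholders, and the function names are illustrative.

```python
def hours_saved(minutes_before: float, minutes_after: float, units: int) -> float:
    """Total hours saved across `units` repetitions of one task."""
    return (minutes_before - minutes_after) * units / 60


def dollars_saved(hours: float, hourly_cost: float) -> float:
    """Convert saved hours to dollars at a loaded hourly cost."""
    return hours * hourly_cost


# 180 -> 20 minutes per product comes from the pipeline described earlier.
# 100 products and $40/hour are hypothetical placeholders.
saved = hours_saved(180, 20, units=100)
print(f"{saved:.1f} hours, ${dollars_saved(saved, 40):,.0f}")
```

If the number that comes out doesn't justify the build and maintenance time, that is the signal to pick a different bottleneck before expanding.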

The companies that fail are the ones who try to "do AI" as a company-wide transformation initiative with a 12-month roadmap and a steering committee. The ones that succeed ship something in two weeks and iterate from there.

Fear #4: AI Will Replace Our People (60% of CEOs)

What Actually Happens When You Deploy AI

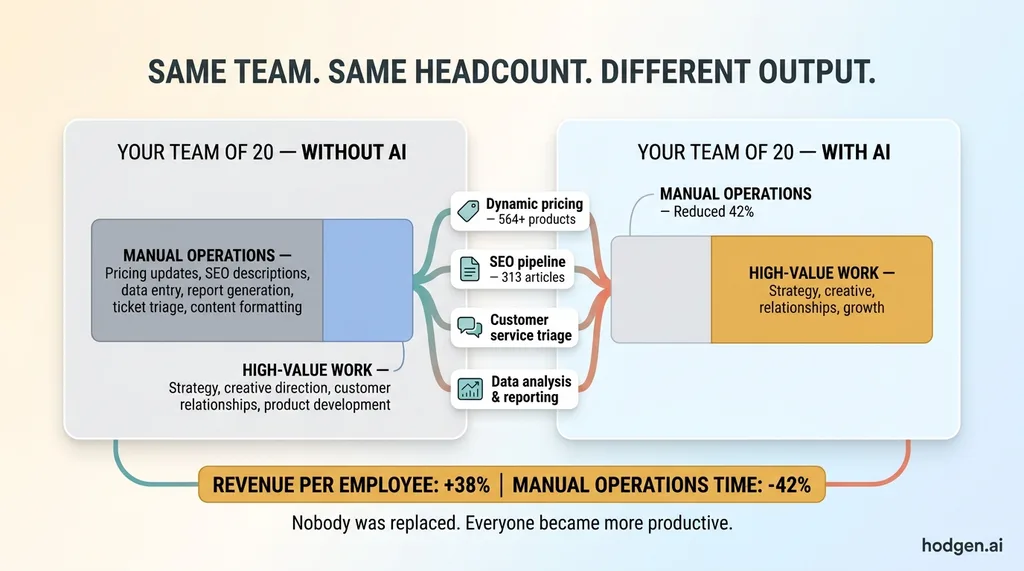

This is the most emotionally charged fear and the most publicly discussed. It's also almost entirely backwards for companies in the $1M-$50M range.

AI Augmentation: Same Team, Multiplied Output


At my DTC brand, AI didn't replace a single person. What it did was make the existing team dramatically more productive. Revenue per employee went up 38%. Manual operations time dropped 42%. The same people are doing higher-value work — creative direction, customer relationships, product strategy — while AI handles the repetitive tasks nobody wanted to do anyway.

Nobody misses manually updating 564 product prices. Nobody misses writing the same SEO meta descriptions for the 200th time. Nobody misses triaging routine customer service tickets that have obvious answers.

For small and mid-market companies, the real threat isn't that AI replaces your team. It's that your competitors use AI to do more with the same headcount, and you can't keep up.

Think about it this way. Your team of 20 people is competing against a rival's team of 20 people. But their team has AI handling pricing, content, customer service triage, data analysis, and reporting. Their people are spending 42% less time on manual operations and putting those hours into the work that actually grows the business. Who wins in 18 months?

The displacement conversation is a macro-economic question for governments and policy makers. It's a real conversation worth having at that level. But for a CEO running a $5M-$50M company, the practical question is different: how do you multiply the output of the team you already have?

My AI handles dynamic pricing for 564+ products, manages the SEO pipeline for 313 blog articles, and triages customer service requests. That's not replacing people. That's removing the bottleneck so people can focus on product, relationships, and growth — the things that actually require human judgment.

Fear #5: Data Privacy and Security (52% of CEOs)

CEO Fear vs. Actual Risk Matrix


This Is the One You Should Actually Worry About

Here's the twist. The fear that the fewest CEOs cite — 52% — is the one that's most justified. And it's not just justified. It's dangerously underweighted.

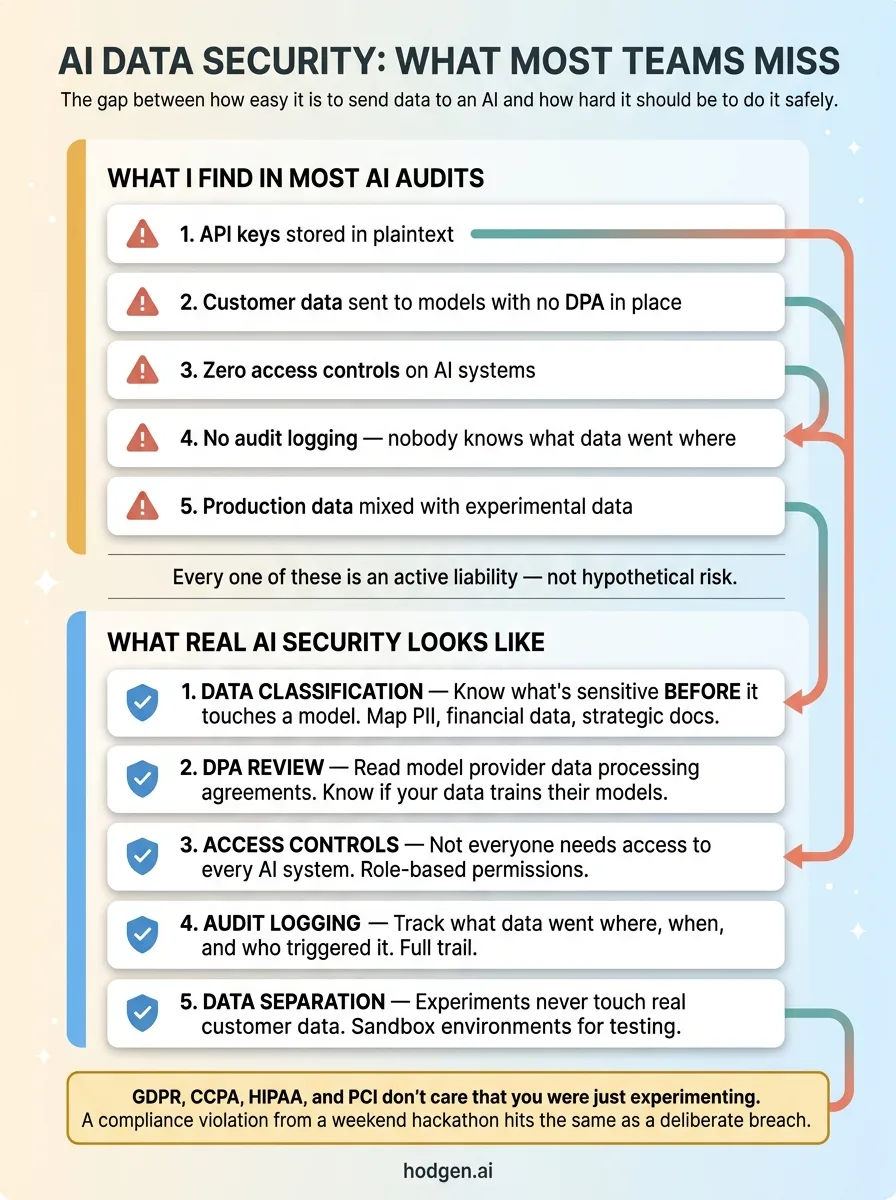

When you deploy AI, your company's data flows through third-party APIs and models. Customer data. Pricing strategies. Internal communications. Strategic documents. Financial records. Most teams don't think about where that data goes, how it's stored, whether it's used for model training, or what happens when — not if — there's a breach.

I've seen this firsthand. When I've audited vibe-coded projects and quick AI implementations, security was an afterthought in nearly every one. API keys stored in plaintext. Customer data sent to models with no data processing agreements in place. Zero access controls. No audit logging. Production data mixed with experimental data.

This isn't hypothetical risk. It's active liability.
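The plaintext-key problem is the cheapest one on that list to fix. As a minimal sketch, assuming the key is supplied through an environment variable (the name `MODEL_API_KEY` is hypothetical, as are the function names), the code reads the key at startup and only ever logs a masked version:

```python
import os


def load_api_key(var_name: str = "MODEL_API_KEY") -> str:
    """Read an API key from the environment instead of hardcoding it in source."""
    key = os.environ.get(var_name, "").strip()
    if not key:
        raise RuntimeError(f"{var_name} is not set; refusing to start without a key")
    return key


def mask(secret: str) -> str:
    """Mask a secret for logging, keeping at most the last 4 characters."""
    # Very short secrets are masked entirely so nothing useful leaks.
    visible = secret[-4:] if len(secret) > 8 else ""
    return "*" * (len(secret) - len(visible)) + visible
```

In use, a script would call `load_api_key()` once at startup and pass `mask(key)` to anything that writes logs. This keeps keys out of the repository; it does not replace a proper secrets manager for production systems.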

What Real AI Security Looks Like

GDPR, CCPA, and industry-specific regulations like HIPAA and PCI don't care that you were "just experimenting with AI." A compliance violation hits the same whether it came from a deliberate strategy or a weekend hackathon that accidentally sent customer PII to a model provider's training pipeline.

AI Security Checklist: What Most Teams Miss


Proper AI security means:

- Data classification before deployment — know what's sensitive before it touches a model

- Model provider DPA review — read the data processing agreements, not just the pricing page

- Access controls — not everyone in the company needs access to every AI system

- Audit logging — know what data went where, when, and who triggered it

- Separation of training and production data — your experiments shouldn't touch real customer information
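Two of those items, keeping sensitive data away from the model and audit logging, can be sketched in a few lines. This is an illustration under stated assumptions, not a compliance tool: the regex patterns catch only obvious emails and phone-like numbers, real PII detection needs far more than this, and every name here (`redact`, `audit_record`, the sample user and system labels) is hypothetical.

```python
import hashlib
import json
import re
from datetime import datetime, timezone

# Deliberately simple patterns; they will miss plenty of real-world PII.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace emails and phone-like numbers with placeholders; return counts."""
    text, n_email = EMAIL.subn("[EMAIL]", text)
    text, n_phone = PHONE.subn("[PHONE]", text)
    return text, {"email": n_email, "phone": n_phone}


def audit_record(user: str, system: str, prompt: str, redactions: dict[str, int]) -> str:
    """Build one JSON audit line: who sent what, where, and when.

    Stores a SHA-256 hash of the prompt rather than the prompt itself, so the
    log does not become a second copy of the sensitive data.
    """
    return json.dumps({
        "ts": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "system": system,
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "redactions": redactions,
    })


raw = "Refund for jane.doe@example.com, call +1 (555) 010-2030 about her order."
clean, counts = redact(raw)
print(clean)  # Refund for [EMAIL], call [PHONE] about her order.
print(audit_record("support-agent-7", "cs-triage", clean, counts))
```

The design choice worth copying is the order of operations: classify and redact first, log second, and only then send anything to a third-party API.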

This is where you need real expertise, not a YouTube tutorial. The technical barrier to sending data to an API is essentially zero. The governance barrier should be significant.

The irony of CEO fears about AI is that the ones keeping executives up at night — cost, job loss, implementation risk — are manageable problems with clear solutions. A data breach or compliance violation? That can end your company. And it's the one getting the least attention.

What I Tell CEOs Who Are Still on the Fence

The fears are natural. They come from a market that's been oversold and under-delivered by vendors who care more about closing contracts than delivering results. Your skepticism has been earned.

But waiting isn't a neutral choice. Every month of delay is a month your competitors are compounding their AI advantage. And AI advantages compound fast — each system you build creates data and workflows that make the next system easier.

The playbook is simple. Start with one painful process. Build a narrow AI system to fix it. Measure the result in hours saved or dollars generated. Don't boil the ocean. Don't hire a consulting firm to write a strategy document. Build something real and let the results speak.

I started with product descriptions. Twelve months later, I had 29 systems saving 3,000+ hours a year. That's not magic. That's disciplined execution with the right tools and clear business context.

If you're a CEO reading this and recognizing your own fears in this list, that's a good sign. It means you're thinking seriously about AI adoption, not just reacting to hype. The question isn't whether to move forward — it's where to start and how to do it without the mistakes that kill 88% of AI projects.

The next step is a real conversation about what AI actually looks like for your specific business. Not a generic pitch. Not a capabilities deck. A real assessment of your operations, your bottlenecks, and where the highest-impact opportunities are.

Thinking About AI for Your Business?

If any of this resonated, let's talk. I do free 30-minute discovery calls where we look at your operations and identify where AI could actually move the needle. No pitch deck, no pressure — just an honest conversation about what's possible and what's not.
