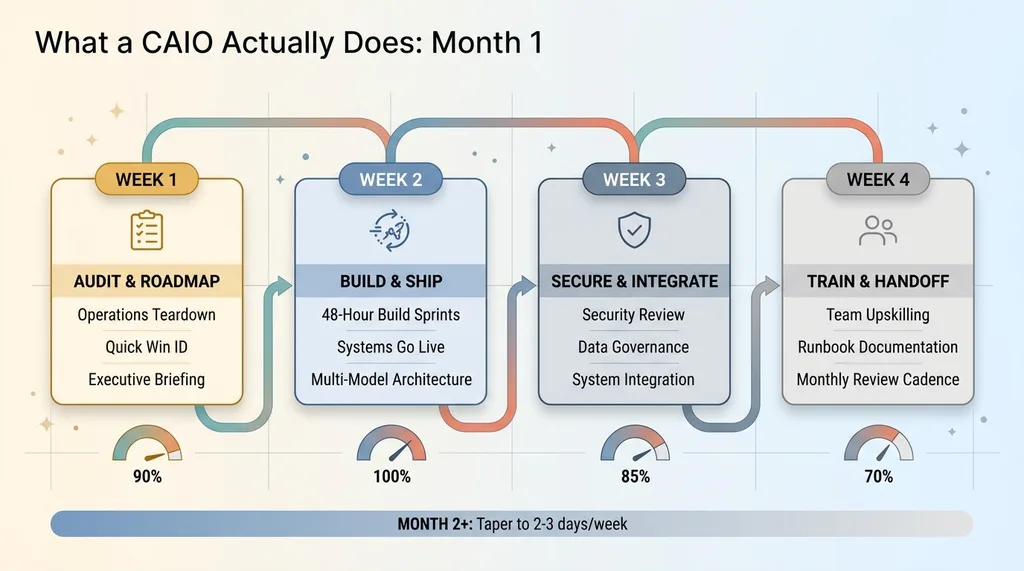

What a CAIO Actually Does: Week-by-Week Breakdown

What does a CAIO do day-to-day? A real week-by-week breakdown of strategy sessions, builds, deployments, and team training from a working Chief AI Officer.

By Mike Hodgen

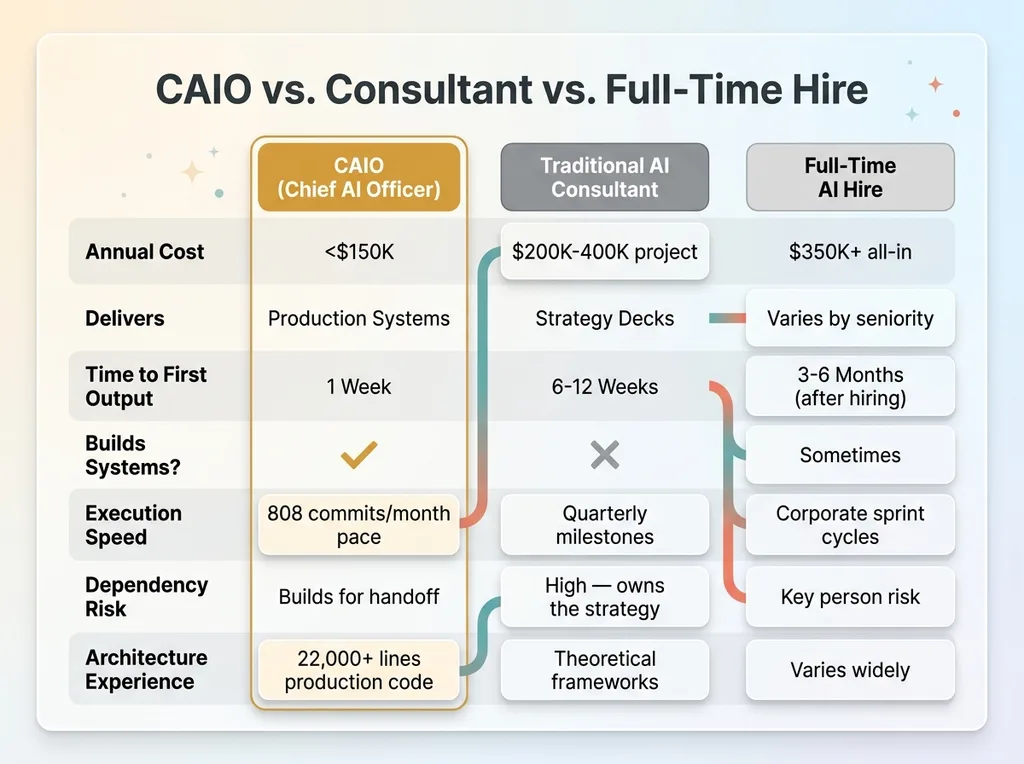

Most people hear "fractional CAIO" and picture someone who shows up for a few hours a week, drops some advice, and disappears. That's not what a CAIO actually does. At least not a good one. The term "fractional" throws people off: it implies partial effort, divided attention, someone who's not fully in it. The reality is the opposite. You get concentrated, senior-level execution without the $350K+ all-in cost of a full-time hire.

Think of it like a surgeon. They don't spend 40 hours a week with one patient. But the 4 hours they spend in the operating room are the most consequential hours that patient will ever experience. That's the model. Every hour is output. No meetings about meetings. No corporate drag. No waiting for approval chains.

I run my own DTC fashion brand in San Diego while simultaneously building AI systems for clients. Across my own business alone, I manage 29 AI-powered automation modes, 564+ dynamically priced products, and a content pipeline spanning 313 blog articles. That's not theory — it's production infrastructure I maintain alongside client work. The concentrated model forces discipline. When you're billing for outcomes instead of hours in a chair, you learn fast what actually moves the needle.

So here's what a typical first month looks like, week by week, when you bring on a CAIO. Not a pitch deck version. The actual work.

Week 1: Audit, Teardown, and the AI Roadmap

CAIO Week-by-Week Timeline

Monday–Tuesday: The Operations Audit

The first two days are a deep dive into how your business actually runs. Not how it looks in org charts — how work actually gets done. What tools are people using? Where are they copying data from one system into another? Where is information trapped in someone's inbox or a spreadsheet that nobody else can access?

This isn't a survey I send you. I need hands-on access: your project management tools, your CRM, your Slack or Teams, your dashboards, your fulfillment workflow. I've done this enough times to spot the patterns fast. The repetitive data entry that eats 15 hours a week. The customer service bottleneck where three people are answering the same types of questions. The reporting process that takes someone an entire Friday to compile.

For context, the first AI systems every business should build are almost always in these areas — they're where manual effort is highest and automation complexity is lowest. Most companies I audit are at zero in terms of AI infrastructure. My own brand runs a 14-skill AI platform. The gap between those two points is where the roadmap lives.

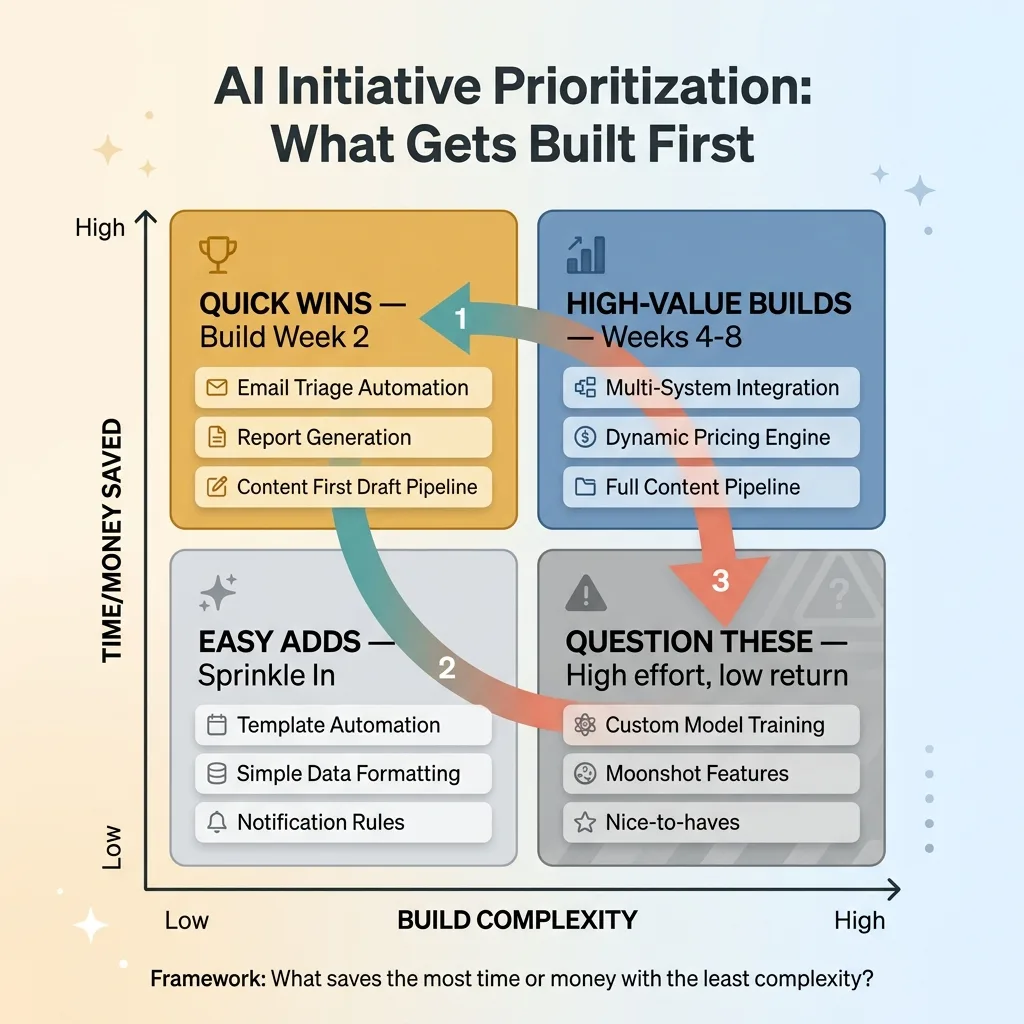

Wednesday–Thursday: Roadmap and Quick Wins

With the audit complete, I build a prioritized roadmap using a simple framework: what saves the most time or money with the least complexity? That's it. No fancy methodology. Just math.

AI Prioritization Framework

I identify 2-3 quick wins that can ship the following week. These are usually things like automated email triage for a team drowning in 150+ messages a day, a report that currently takes someone 4 hours to build manually, or a basic content system that eliminates the blank-page problem for marketing. The quick wins serve a dual purpose — they deliver immediate value, and they build internal confidence that this approach actually works.

The rest of the roadmap stretches out 8-12 weeks, with each initiative ranked by estimated ROI, build complexity, and dependency on other systems.
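As a rough illustration of that ranking, the sketch below scores each initiative by hours saved per unit of build complexity and never schedules an item ahead of its dependencies. The field names, the 1-to-5 complexity scale, the scoring formula, and the sample initiatives are all assumptions made for this example, not the exact framework used in client work.

```python
from dataclasses import dataclass, field


@dataclass
class Initiative:
    name: str
    hours_saved_per_week: float   # estimated during the audit
    complexity: int               # 1 (trivial) to 5 (hard), a hypothetical scale
    depends_on: list[str] = field(default_factory=list)

    @property
    def score(self) -> float:
        # More time saved and less complexity means a higher priority.
        return self.hours_saved_per_week / self.complexity


def build_order(initiatives: list[Initiative]) -> list[str]:
    """Return names ordered by score, never placing an item before its dependencies."""
    remaining = {i.name: i for i in initiatives}
    ordered: list[str] = []
    while remaining:
        # Only initiatives whose dependencies are already scheduled are eligible.
        ready = [i for i in remaining.values()
                 if all(d in ordered for d in i.depends_on)]
        if not ready:
            raise ValueError("circular or missing dependency")
        best = max(ready, key=lambda i: i.score)
        ordered.append(best.name)
        del remaining[best.name]
    return ordered


# Sample data invented for illustration.
roadmap = [
    Initiative("email triage", hours_saved_per_week=15, complexity=2),
    Initiative("weekly report", hours_saved_per_week=4, complexity=1),
    Initiative("content pipeline", hours_saved_per_week=10, complexity=3),
    Initiative("CRM sync", hours_saved_per_week=12, complexity=4,
               depends_on=["email triage"]),
]
print(build_order(roadmap))
```

A single ratio is deliberately crude. The point is that the ranking is explicit and easy to argue with, which is what a leadership team needs from a roadmap.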

Friday: The Executive Briefing

Friday is a presentation to the leadership team. Not a 60-slide deck — a clear, concise document showing three things: where you are now, where you should be, and the estimated ROI for each initiative along with the build order.

Real numbers. When I ran this process on my own DTC brand, the audit phase identified a target of a 42% reduction in manual operations time. We hit it. That number didn't come from a consultant's aspirational slide. It came from mapping every manual process, estimating the time cost, and calculating what automation could realistically absorb.
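The arithmetic behind a target like that is simple enough to sketch. The process names, hours, and automation shares below are made-up sample values, not figures from the audit described above, and they produce a different percentage on purpose.

```python
# Hypothetical audit rows: (process, manual hours per week, share automation could absorb)
processes = [
    ("order data entry", 15.0, 0.8),
    ("support replies", 20.0, 0.5),
    ("weekly reporting", 8.0, 0.75),
    ("vendor calls", 7.0, 0.0),   # stays fully manual in this example
]

total_hours = sum(hours for _, hours, _ in processes)
absorbed = sum(hours * share for _, hours, share in processes)
reduction = absorbed / total_hours

print(f"{absorbed:.1f} of {total_hours:.1f} hours/week, {reduction:.0%} reduction")
```

Keeping the estimate per process, rather than as one headline number, makes it possible to check the target against reality after each build ships.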

The executive briefing ends with alignment on priorities. What gets built first. What gets measured. What success looks like in 30, 60, and 90 days.

Week 2: First Builds Ship to Production

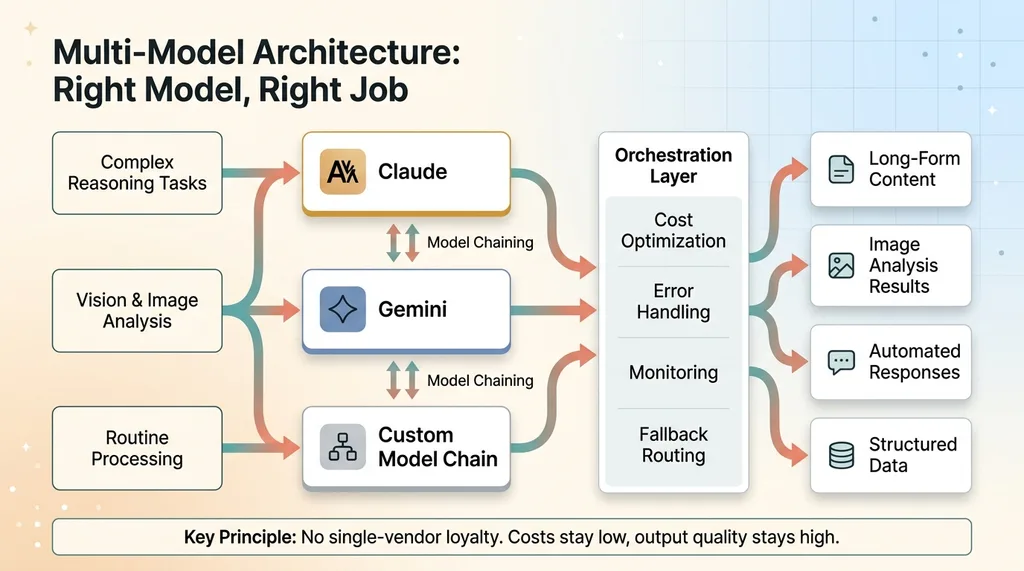

Multi-Model Architecture Decision Map

The 48-Hour Build Sprint

This is where the CAIO role diverges completely from a consultant's. Consultants hand you a strategy document. A CAIO ships working systems. I don't just advise on AI; I build it, and that distinction matters more than anything else in this article.

Week 2 is focused build time. The quick wins identified in Week 1 go from concept to production. A real example: standing up an AI email triage system for a team processing 200+ emails a day. The system reads incoming messages, classifies them by urgency and type, drafts responses for the routine ones, and flags the ones that need human judgment. That's not a proof of concept sitting in a sandbox. It goes live. People use it on Tuesday.
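To make the flow concrete, here is a minimal sketch of the classify, draft, and flag steps. It uses keyword rules as a stand-in for the model call, so it shows the shape of the pipeline rather than a production classifier. The categories, keywords, templates, and the `triage` function are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TriageResult:
    category: str                 # "billing", "shipping", or "other"
    urgent: bool
    draft_reply: Optional[str]    # None means no automatic draft was produced
    needs_human: bool


# Hypothetical keyword rules; a production system would call a language model here.
CATEGORY_KEYWORDS = {
    "billing": ("invoice", "refund", "charge"),
    "shipping": ("tracking", "delivery", "shipment"),
}
URGENT_WORDS = ("urgent", "asap", "immediately")
TEMPLATES = {
    "shipping": "Thanks for reaching out. Here is the latest status on your order: ...",
}


def triage(subject: str, body: str) -> TriageResult:
    text = f"{subject} {body}".lower()
    # First category with a matching keyword wins; otherwise "other".
    category = next(
        (name for name, words in CATEGORY_KEYWORDS.items()
         if any(w in text for w in words)),
        "other",
    )
    urgent = any(w in text for w in URGENT_WORDS)
    # Draft only for routine messages: a known template and not urgent.
    draft = TEMPLATES.get(category) if not urgent else None
    return TriageResult(category, urgent, draft, needs_human=draft is None)


print(triage("Where is my tracking number?", "Order placed last week"))
```

Anything without a draft is flagged for a person, so the default failure mode is "a human looks at it" rather than "a wrong reply goes out."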

Another common Week 2 build: a content pipeline that takes a topic brief and produces a structured first draft with SEO metadata, internal linking suggestions, and brand voice calibration. In my own brand, this pipeline — combined with other automations — compressed product creation from 3-4 hours down to 20 minutes per product.

This is also where multi-model architecture decisions happen. I'm not loyal to any single AI vendor. Claude handles complex reasoning and long-form content. Gemini handles vision tasks and image analysis. Custom model chaining keeps costs down while maximizing output quality. The right model for the right job, every time.
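One simple way to express "the right model for the right job" is a routing table keyed by task type. The sketch below is an assumption about how such a router could look. The handler functions are placeholders that only label the route; they do not call any vendor SDK, and the task-type names are invented.

```python
from typing import Callable


# Stand-in handlers. Real ones would wrap each vendor's SDK; these only label the route.
def reasoning_model(prompt: str) -> str:
    return f"[reasoning model] {prompt}"


def vision_model(prompt: str) -> str:
    return f"[vision model] {prompt}"


def low_cost_model(prompt: str) -> str:
    return f"[low-cost model] {prompt}"


# Hypothetical task types mapped to the handler best suited to them.
ROUTES: dict[str, Callable[[str], str]] = {
    "long_form": reasoning_model,
    "analysis": reasoning_model,
    "image": vision_model,
}


def route(task_type: str, prompt: str) -> str:
    # Unknown task types fall back to the cheapest option.
    handler = ROUTES.get(task_type, low_cost_model)
    return handler(prompt)


print(route("image", "describe this product photo"))
print(route("tagging", "label this ticket"))
```

Because the mapping lives in one table, swapping a vendor when a newer or cheaper model appears is a one-line change rather than a rewrite.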

Each build ships with monitoring, error handling, and a handoff document so your team can operate it immediately.

Why Speed Matters More Than Perfection

In AI, the landscape shifts monthly. A 6-month implementation timeline means you're building on yesterday's models with yesterday's capabilities. The CAIO model compresses this to days. Ship, measure, iterate.

CAIO vs Consultant vs Full-Time Hire Comparison

During one 30-day stretch building systems for my own brand, I pushed 808 commits. That's not recklessness — it's the pace required to stay ahead of model deprecations, API changes, and the constant improvement cycle that AI demands. Perfection on day one is impossible when the underlying technology evolves this fast. What matters is getting functional systems into production quickly, then refining them with real usage data.

Week 3: Architecture Review and Risk Management

Security and Data Governance

Week 3 is where the unglamorous but critical work happens. Everything built in Week 2 gets reviewed for security vulnerabilities, data handling compliance, and integration stability.

Most AI projects fail not because the idea was bad, but because the architecture was fragile. This is the week I address the things most AI vendors skip entirely: rate limiting so a runaway process doesn't blow through your API budget in an hour. Input validation so malformed data doesn't produce garbage outputs. Error handling for when models hallucinate, refuse to process certain content due to safety filters, or simply go down — because they do, more often than people realize.
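Those three protections can be sketched in a few dozen lines. This is a minimal illustration under stated assumptions, not the code running in any client system: `CallBudget`, `validate`, and `guarded_call` are hypothetical names, the `model` argument is any callable you supply, and the limits are arbitrary.

```python
import time


class BudgetExceeded(Exception):
    pass


class CallBudget:
    """Caps the number of model calls inside a rolling time window."""

    def __init__(self, max_calls: int, window_seconds: float):
        self.max_calls = max_calls
        self.window_seconds = window_seconds
        self._timestamps: list[float] = []

    def check(self) -> None:
        now = time.monotonic()
        # Forget calls that have fallen out of the window.
        self._timestamps = [t for t in self._timestamps
                            if now - t < self.window_seconds]
        if len(self._timestamps) >= self.max_calls:
            raise BudgetExceeded("call budget exhausted for this window")
        self._timestamps.append(now)


def validate(text: str, max_chars: int = 10_000) -> str:
    cleaned = text.strip()
    if not cleaned:
        raise ValueError("empty input")
    if len(cleaned) > max_chars:
        raise ValueError("input too long")
    return cleaned


def guarded_call(model, text: str, budget: CallBudget, retries: int = 2,
                 fallback: str = "NEEDS_HUMAN_REVIEW") -> str:
    prompt = validate(text)
    for attempt in range(retries + 1):
        budget.check()   # every attempt counts against the budget
        try:
            return model(prompt)
        except (TimeoutError, ConnectionError):
            time.sleep(0.01 * 2 ** attempt)   # short exponential backoff for the sketch
    # All attempts failed: return a sentinel so the item is routed to a person.
    return fallback
```

Counting retries against the budget is a deliberate choice here: a retry loop is exactly the kind of runaway process that burns through an API budget.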

Governance gets established here too. Who has access to what AI systems? How are AI-generated decisions logged? What happens when the AI is wrong? For any system that touches financial data, customer information, or decision-making that could have legal exposure, I build deterministic guardrails. The AI handles the 80% of routine cases. The edge cases get routed to humans with full context. No black boxes.
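A deterministic guardrail, in this sense, is ordinary code that makes the final decision about what the model is allowed to do on its own. The sketch below assumes a hypothetical `Proposal` shape and invented limits; real thresholds would come out of the governance discussion with your team.

```python
from dataclasses import dataclass


@dataclass
class Proposal:
    action: str          # what the model suggests, e.g. "refund"
    amount: float        # money involved, 0 if none
    confidence: float    # model-reported confidence, 0.0 to 1.0


# Hypothetical limits for illustration only.
MAX_AUTO_AMOUNT = 100.0
MIN_CONFIDENCE = 0.9
ALLOWED_ACTIONS = {"refund", "reply", "tag"}

audit_log: list[dict] = []


def decide(p: Proposal) -> str:
    """Plain if-statements, not a model, make the final call."""
    if p.action not in ALLOWED_ACTIONS:
        outcome, reason = "human", "action not on allowlist"
    elif p.amount > MAX_AUTO_AMOUNT:
        outcome, reason = "human", "amount above auto-approve limit"
    elif p.confidence < MIN_CONFIDENCE:
        outcome, reason = "human", "low confidence"
    else:
        outcome, reason = "auto", "within limits"
    # Every decision is logged with its reason so it can be reviewed later.
    audit_log.append({"proposal": p, "outcome": outcome, "reason": reason})
    return outcome


print(decide(Proposal("refund", 20.0, 0.95)))
print(decide(Proposal("refund", 500.0, 0.99)))
```

The log entry carries the full proposal and the reason, which is the "full context" a human reviewer needs when an edge case lands on their desk.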

Integration With Existing Systems

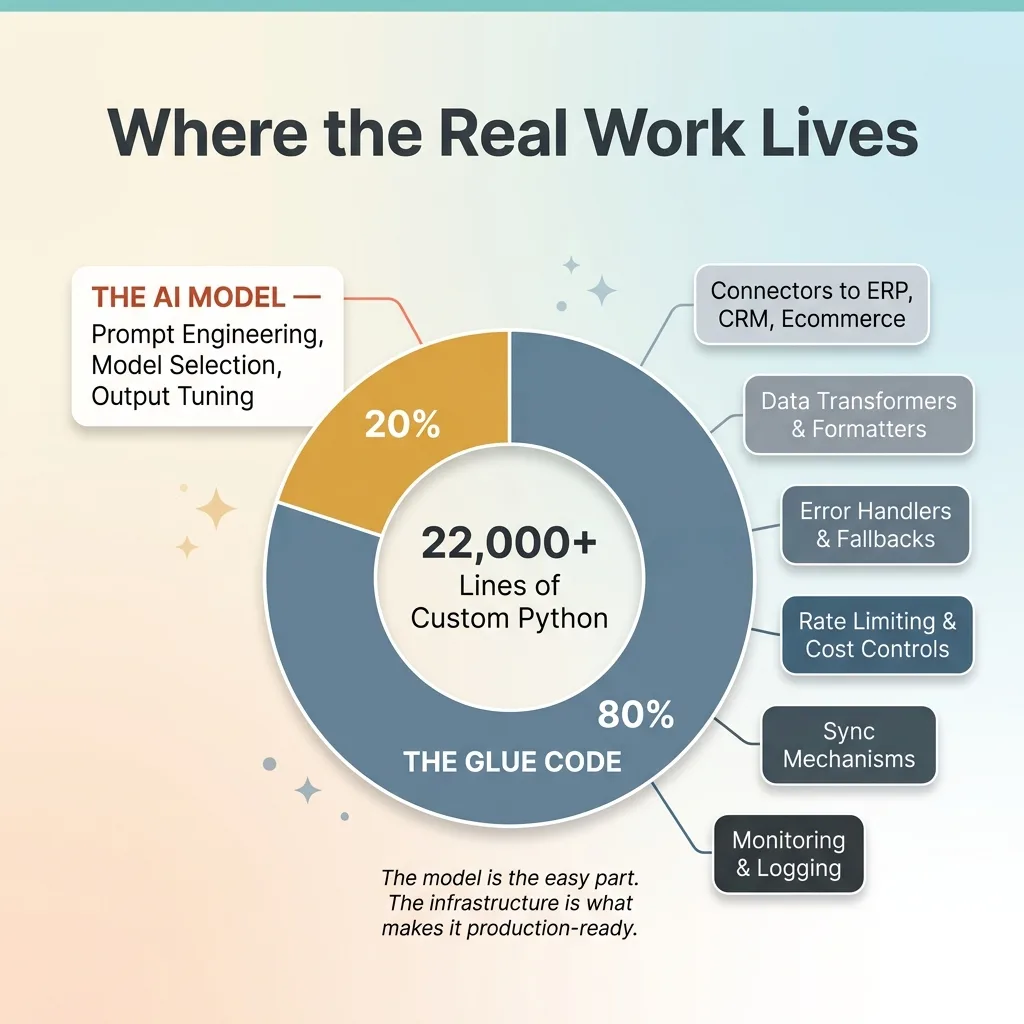

The other major focus of Week 3 is connecting AI systems to your existing stack — your ERP, CRM, ecommerce platform, accounting software, whatever you're running. This is where the real complexity lives. The AI model itself is maybe 20% of the work. The other 80% is the glue code that makes it function inside your business.

The 80/20 AI Build Reality

This is where my 22,000+ lines of custom Python come from. Not from fancy algorithms — from the connectors, data transformers, error handlers, and sync mechanisms that make AI systems reliable in production. It's not glamorous. It's the difference between a demo and a deployed system.

Week 3 also includes the first architecture review with your CTO or head of engineering, if you have one. I walk through everything that's been built, how it works, where the failure points are, and how to maintain it. The goal is transparency, not mystique. If your technical team doesn't understand what I've built, I've failed.

Week 4: Team Training and the Handoff Protocol

Upskilling Without the Buzzwords

The goal is never dependency. It's capability transfer. If I build systems that only I can operate, I've created a problem, not solved one.

Week 4 focuses on making your team self-sufficient on everything that's been deployed. This isn't a webinar. It's sitting with the ops person who now runs the email triage system and showing them how to adjust the classification rules when a new category of inquiries starts coming in. It's teaching the marketing lead how to modify content pipeline prompts when the brand voice needs to shift for a seasonal campaign. It's giving the CEO a dashboard that shows AI system performance in terms they actually care about — hours saved, cost reduced, error rates — and making sure they know how to read it.

The 3,000+ hours I save annually across my own systems only hold because I built them to run without constant intervention. The same principle applies to everything I build for clients. If the team can't operate the systems without calling me every day, the deployment is incomplete.

Documentation That Actually Gets Used

Every system gets a runbook. Not a 40-page manual that lives in a shared drive and never gets opened. A concise document with three sections: here's how it works, here's how to fix it when it breaks, here's when to call me.

I also establish a monthly review cadence during Week 4. What metrics to track. What thresholds trigger a review — like if the email triage accuracy drops below 92%, or if the content pipeline starts producing outputs that need more than minor edits. How to measure AI ROI over time so the CFO can see the numbers, not just hear about them.
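A threshold like the 92% triage accuracy above is easy to check from a sample of human-reviewed messages. This sketch assumes spot checks are recorded as (predicted label, human label) pairs; the function names and sample data are illustrative.

```python
def triage_accuracy(reviewed: list[tuple[str, str]]) -> float:
    """reviewed holds (predicted_label, human_label) pairs from spot checks."""
    if not reviewed:
        raise ValueError("no reviewed samples")
    correct = sum(1 for predicted, actual in reviewed if predicted == actual)
    return correct / len(reviewed)


def needs_review(accuracy: float, threshold: float = 0.92) -> bool:
    # True when accuracy has dropped below the agreed threshold.
    return accuracy < threshold


# Invented sample: 22 of 25 spot-checked messages were labeled correctly.
sample = [("billing", "billing")] * 22 + [("billing", "shipping")] * 3
accuracy = triage_accuracy(sample)
print(f"accuracy={accuracy:.0%}, review needed: {needs_review(accuracy)}")
```

The same pattern works for the content pipeline if editors record whether each draft needed more than minor edits.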

This is where the engagement model becomes clear. Month 1 is the most intensive — the full audit, build, secure, and train cycle. Subsequent months are lighter but continuous.

What Month 2 and Beyond Looks Like

Month 2 shifts to the next phase: new builds from the roadmap, performance optimization of existing systems, and adapting to new model releases. A system built on one model in January might benefit from a newer, cheaper, or more capable model by March. Staying current is part of the job.

The cadence typically drops from 4-5 days per week to 2-3 days per week as systems stabilize and the team absorbs more operational responsibility. The companion piece, "What a Chief AI Officer costs in 2026," breaks down the financial model in detail, but the short version is this: the investment is front-loaded and then tapers as your internal capability grows.

Some companies run 3-month engagements. Some go 6 months or longer. The determining factor is the size of the roadmap and the team's ability to absorb new systems at a reasonable pace. I'll be honest — some companies only need 8-12 weeks. If a CAIO tells you they need to be embedded forever, they're building dependency, not capability.

How to Know If This Model Fits Your Business

This isn't for everyone. Here's who it works for:

- Companies doing $1M-$50M in revenue where the CEO knows they're falling behind on AI but doesn't have the technical bench to catch up. You've seen competitors move faster. You know the gap is growing.

- Companies that hired a junior "AI person" and got underwhelming results. Not because the person was bad — because they didn't have the architectural experience to build production systems, or the executive context to know what to build first.

- CEOs who are personally experimenting with ChatGPT but can't figure out how to turn that into something systematic across the org. You know AI works. You just can't bridge the gap between individual productivity and organizational capability.

It doesn't work for companies that want a strategy deck and nothing built. It doesn't work if you're not willing to give system access — I can't audit what I can't see. And it doesn't work if you expect magic without changing any processes. AI amplifies your operations. If the operations are broken, AI amplifies the brokenness faster.

Ready to Bring AI Leadership Into Your Company?

I work with a small number of companies at a time. That's deliberate — the model requires deep context in your business, not surface-level familiarity spread across 20 clients. If you're serious about deploying AI systems that actually run in production and deliver measurable results, apply to work together. I review every application personally.
